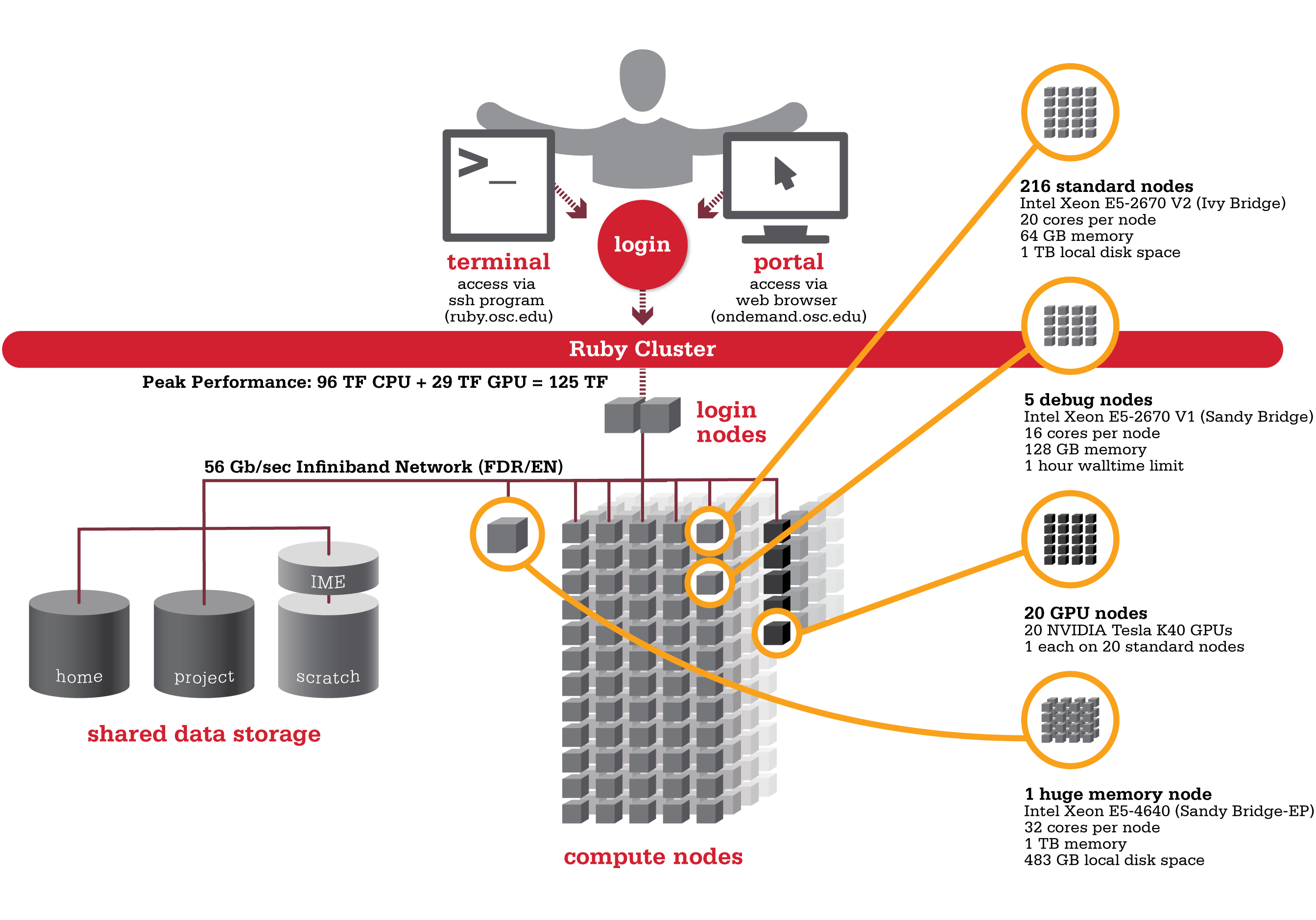

Ruby

Ruby was named after the Ohio native actress Ruby Dee. An HP-built, Intel® Xeon® processor-based supercomputer, Ruby provided almost the same total computing power (~125 TF, formerly ~144 TF with Intel® Xeon® Phi coprocessors) as our former flagship system Oakley on less than half the number of nodes (240 nodes). Ruby had 20 nodes outfitted with NVIDIA® Tesla K40 accelerators. (Ruby formerly featured two distinct sets of hardware accelerators: 20 nodes were outfitted with NVIDIA® Tesla K40 accelerators and another 20 nodes featured Intel® Xeon® Phi coprocessors.)

Hardware

Detailed system specifications:

- 4800 total cores

- 20 cores/node & 64 gigabytes of memory/node

- Intel Xeon E5 2670 V2 (Ivy Bridge) CPUs

- HP SL250 Nodes

- 20 Intel Xeon Phi 5110p coprocessors (removed from service on 10/13/2016)

- 20 NVIDIA Tesla K40 GPUs

- 2 NVIDIA Tesla K80 GPUs

  - Both equipped on single "debug" queue node

- 850 GB of local disk space in '/tmp'

- FDR IB Interconnect

  - Low latency

  - High throughput

  - High quality-of-service

- Theoretical system peak performance

  - 96 teraflops

- NVIDIA GPU performance

  - 28.6 additional teraflops

- Intel Xeon Phi performance

  - 20 additional teraflops (not included in the total below; the coprocessors were removed from service)

- Total peak performance

  - ~125 teraflops
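The totals above can be checked with a little arithmetic. The sketch below only recombines figures quoted in this section (240 nodes, 20 cores per node, 96 and 28.6 teraflops, plus the 20 teraflops formerly contributed by the Xeon Phi coprocessors). The variable names are illustrative and not part of any OSC tool.

```shell
# Figures taken from the specification list above.
NODES=240
CORES_PER_NODE=20
CPU_TF=96
GPU_TF=28.6
PHI_TF=20   # Xeon Phi coprocessors, removed from service on 10/13/2016

# Total core count: nodes x cores per node.
TOTAL_CORES=$((NODES * CORES_PER_NODE))

# Peak performance without and with the retired coprocessors.
# awk is used because POSIX shell arithmetic is integer-only.
PEAK_NOW=$(awk -v c="$CPU_TF" -v g="$GPU_TF" 'BEGIN { printf "%.1f", c + g }')
PEAK_WITH_PHI=$(awk -v c="$CPU_TF" -v g="$GPU_TF" -v p="$PHI_TF" 'BEGIN { printf "%.1f", c + g + p }')

echo "Total cores: ${TOTAL_CORES}"
echo "Peak (CPU + GPU): ${PEAK_NOW} TF"
echo "Peak (CPU + GPU + Phi): ${PEAK_WITH_PHI} TF"
```

The two sums round to the ~125 TF and ~144 TF figures quoted in the introduction.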

Ruby has one huge memory node.

- 32 cores (Intel Xeon E5 4640 CPUs)

- 1 TB of memory

- 483 GB of local disk space in '/tmp'

Ruby is configured with two login nodes.

- Intel Xeon E5-2670 (Sandy Bridge) CPUs

- 16 cores/node & 128 gigabytes of memory/node

How to Connect

SSH Method

To log in to Ruby at OSC, ssh to the following hostname:

ruby.osc.edu

You can either use an ssh client application or execute ssh on the command line in a terminal window as follows:

ssh <username>@ruby.osc.edu

You may see a warning message that includes an SSH key fingerprint. Verify that the fingerprint in the message matches one of the SSH key fingerprints listed here, then type yes.

From there, you are connected to a Ruby login node and have access to the compilers and other software development tools. You can run programs interactively or through batch requests. We use control groups on login nodes to keep the login nodes stable. Please use batch jobs for any compute-intensive or memory-intensive work. See the following sections for details.
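The SSH steps above can be sketched as a short shell snippet. `OSC_USER` and `CHECK_FINGERPRINT` are hypothetical variables introduced only for this illustration. The optional fingerprint check relies on the standard OpenSSH tools `ssh-keyscan` and `ssh-keygen` and needs network access, so it is disabled by default.

```shell
# Hypothetical account name; replace with your own OSC username.
OSC_USER="${OSC_USER:-username}"
RUBY_HOST="ruby.osc.edu"

# Build the login command and print it for review.
LOGIN_CMD="ssh ${OSC_USER}@${RUBY_HOST}"
echo "${LOGIN_CMD}"

# Optional: show the fingerprints the server presents so they can be
# compared with the published list before answering "yes".
# This needs network access, so it only runs when CHECK_FINGERPRINT=1.
if [ "${CHECK_FINGERPRINT:-0}" = "1" ]; then
    ssh-keyscan "${RUBY_HOST}" 2>/dev/null | ssh-keygen -lf -
fi
```

Run the printed command in a terminal to open the session; the fingerprints shown by the optional check should match the published ones.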

OnDemand Method

You can also log in to Ruby at OSC with our OnDemand tool. The first step is to log in to OnDemand. Once logged in, you can access Ruby by clicking on "Clusters" and then selecting ">_Ruby Shell Access".

Instructions on how to connect to OnDemand can be found at the OnDemand documentation page.

File Systems

Ruby accesses the same OSC mass storage environment as our other clusters. Therefore, users have the same home directory as on the Oakley Cluster. Full details of the storage environment are available in our storage environment guide.

Software Environment

The module system on Ruby is the same as on the Oakley system. Use module load <package> to add a software package to your environment. Use module list to see what modules are currently loaded and module avail to see the modules that are available to load. To search for modules that may not be visible due to dependencies or conflicts, use module spider. By default, you will have the batch scheduling software modules, the Intel compiler, and an appropriate version of mvapich2 loaded.
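A typical session with the module commands described above might look like the following sketch. The package name `intel` is an assumption used for illustration; the actual module names on Ruby should be taken from the output of `module avail` or `module spider`. The calls are guarded so the snippet does nothing harmful on a machine without the module system.

```shell
# Hypothetical package name used for illustration; pick a real one from
# the output of "module avail" or "module spider".
PKG="${PKG:-intel}"

# The module command only exists on systems that provide it, so guard the calls.
if command -v module >/dev/null 2>&1; then
    module list            # modules currently loaded
    module avail           # modules available to load
    module spider "$PKG"   # search, including modules hidden by dependencies or conflicts
    module load "$PKG"     # add the package to the environment
else
    echo "module command not found; run this on a Ruby login node"
fi
```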

You can keep up to date on the software packages that have been made available on Ruby by viewing the Software by System page and selecting the Ruby system.

Understanding the Xeon Phi

Guidance on what the Phis are, how they can be utilized, and other general information can be found on our Ruby Phi FAQ.

Compiling for the Xeon Phis

For information on compiling for and running software on our Phi coprocessors, see our Phi Compiling Guide.

Batch Specifics

qsub has been updated to provide more information to clients about the job they just submitted, including both informational (NOTE) and ERROR messages. To better understand these messages, please visit the messages from qsub page.

Refer to the documentation for our batch environment to understand how to use PBS on OSC hardware. Some specifics you will need to know to create well-formed batch scripts:

- Compute nodes on Ruby have 20 cores/processors per node (ppn).

- If you need more than 64 GB of RAM per node you may run on Ruby's huge memory node ("hugemem"). This node has four Intel Xeon E5-4640 CPUs (8 cores/CPU) for a total of 32 cores. The node also has 1 TB of RAM. You can schedule this node by adding the following directive to your batch script:

  #PBS -l nodes=1:ppn=32

  This node is only for serial jobs, and can only have one job running on it at a time, so you must request the entire node to be scheduled on it. In addition, there is a walltime limit of 48 hours for jobs on this node.

- 20 nodes on Ruby are each equipped with a single NVIDIA Tesla K40 GPU. These nodes can be requested by adding gpus=1 to your nodes request, like so:

  #PBS -l nodes=1:ppn=20:gpus=1

  - By default a GPU is set to the Exclusive Process and Thread compute mode at the beginning of each job. To request the GPU be set to Default compute mode, add default to your nodes request, like so:

    #PBS -l nodes=1:ppn=20:gpus=1:default

- Ruby has 4 debug nodes (2 non-GPU nodes, as well as 2 GPU nodes, with 2 GPUs per node), which are specifically configured for short (< 1 hour) debugging-type work. These nodes have a walltime limit of 1 hour and are equipped with E5-2670 V1 CPUs with 16 cores per node.

  - To schedule a non-GPU debug node:

    #PBS -l nodes=1:ppn=16 -q debug

  - To schedule a GPU debug node:

    #PBS -l nodes=1:ppn=16:gpus=2 -q debug
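The node requests listed above can be collected into one small helper that writes a minimal batch script. This is a sketch under stated assumptions, not an official OSC template: the function name `ruby_directive`, the variables `NODE_TYPE` and `JOB_FILE`, the job name, the 30-minute walltime, and `./my_program` are all hypothetical. The resource strings themselves are the ones given in this section, and the plain compute-node request assumes one full 20-core node.

```shell
# Print the PBS resource directive for each Ruby node type described above.
ruby_directive() {
    case "$1" in
        compute)     echo "#PBS -l nodes=1:ppn=20" ;;
        hugemem)     echo "#PBS -l nodes=1:ppn=32" ;;
        gpu)         echo "#PBS -l nodes=1:ppn=20:gpus=1" ;;
        gpu-default) echo "#PBS -l nodes=1:ppn=20:gpus=1:default" ;;
        debug)       echo "#PBS -l nodes=1:ppn=16 -q debug" ;;
        gpu-debug)   echo "#PBS -l nodes=1:ppn=16:gpus=2 -q debug" ;;
        *)           echo "unknown node type: $1" >&2; return 1 ;;
    esac
}

# Write a minimal job script for the chosen node type.
# JOB_FILE, the job name, the walltime, and ./my_program are hypothetical.
NODE_TYPE="${NODE_TYPE:-gpu}"
JOB_FILE="${JOB_FILE:-ruby_job.pbs}"
{
    echo "#!/bin/bash"
    echo "#PBS -N ruby_example"
    echo "#PBS -l walltime=00:30:00"
    ruby_directive "$NODE_TYPE"
    echo 'cd "$PBS_O_WORKDIR"'
    echo "./my_program"
} > "$JOB_FILE"

# Show the generated script; submit it on a Ruby login node with qsub.
cat "$JOB_FILE"
```

Setting `NODE_TYPE=hugemem` or `NODE_TYPE=gpu-debug` before running the snippet produces the corresponding request. Remember that hugemem jobs are limited to 48 hours and debug jobs to 1 hour of walltime.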

Using OSC Resources

For more information about how to use OSC resources, please see our guide on batch processing at OSC. For specific information about modules and file storage, please see the Batch Execution Environment page.