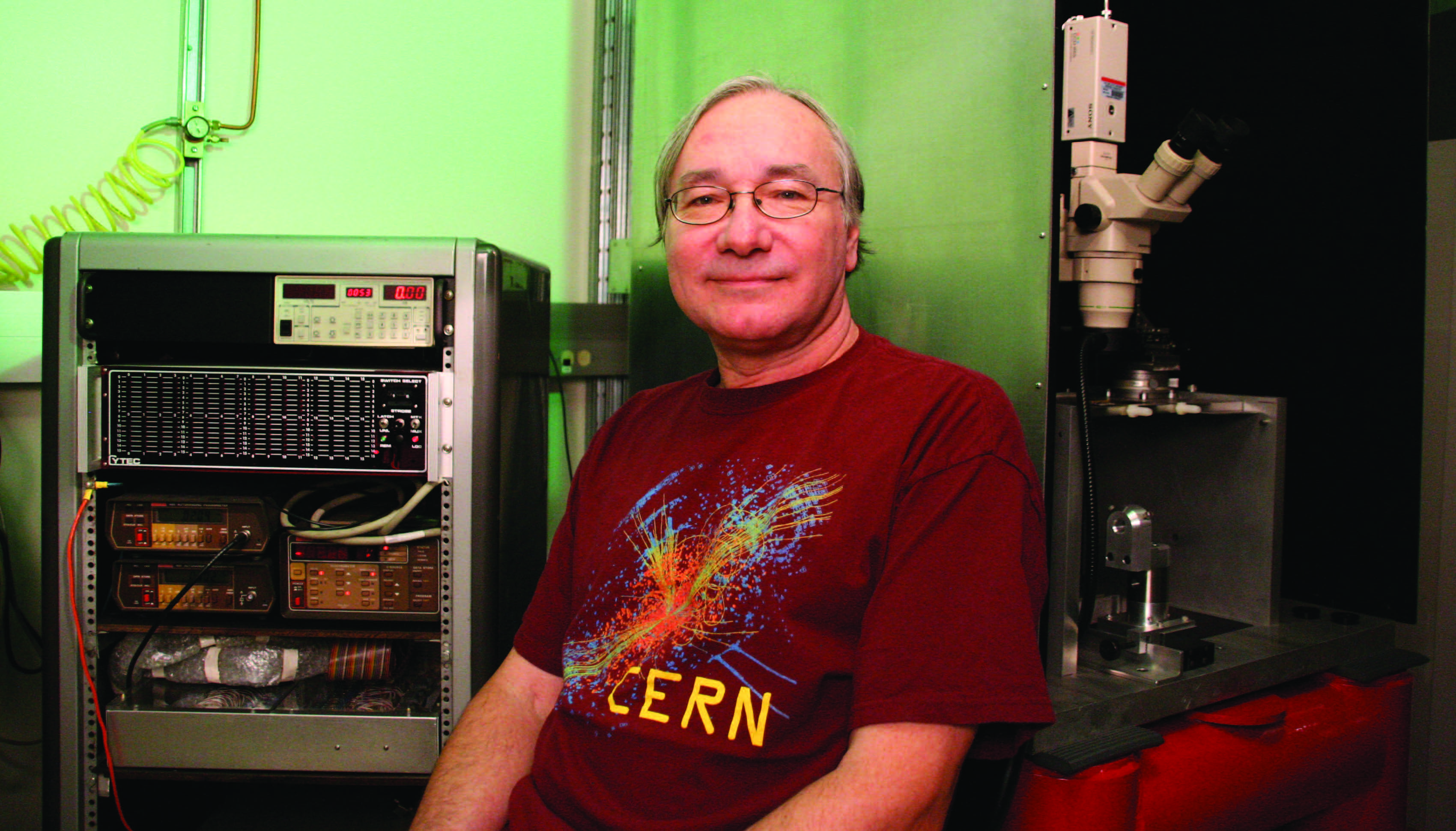

Physicists such as Ohio State’s Thomas Humanic are studying the immense data files created by the Large Hadron Collider and stored at the Ohio Supercomputer Center and other locations to answer fundamental questions about the basic building blocks of the universe.

In April, physicists working on the ALICE project (short for A Large Ion Collider Experiment) began recording data from collisions within the Large Hadron Collider, operated by the European Organization for Nuclear Research (CERN) near Geneva, Switzerland.

More than 1,000 international physicists, engineers and technicians working on the project hope to find answers to fundamental questions about the birth of the universe, matter vs. antimatter, the nature of dark matter and maybe even the existence of other dimensions.

The ALICE scientists carefully propel and collide opposing beams of protons and beams of lead nuclei at nearly the speed of light around a 17-mile underground loop. These are the highest-energy proton collisions ever produced in the laboratory – 3.5 times higher than the previous highest-energy proton collisions created at the Department of Energy’s Fermilab.

The ALICE collisions expel hundreds to thousands of small particles, including quarks – which make up the protons and neutrons of the atomic nuclei – and gluons – which bind the quarks together. For a fraction of a second, these particles form a fiery-hot plasma that hasn’t existed since the first moments after the Big Bang, about 14 billion years ago. Within the massive 52-foot ALICE detector, 18 sensitive sub-detectors measure the behavior of the expelled particles, recording up to approximately 1.25 gigabytes of data per second – six times the contents of Encyclopedia Britannica every second.
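To put that recording rate in perspective, the short calculation below converts it into hourly and daily volumes. It is a rough sketch, not an ALICE figure: it assumes the detector records continuously at the quoted peak of 1.25 gigabytes per second and uses decimal units (1 terabyte = 1,000 gigabytes). The variable names are illustrative.

```python
# Peak recording rate quoted in the article, in gigabytes per second.
PEAK_RATE_GB_PER_S = 1.25

# Assumption: sustained recording at the peak rate, decimal units.
SECONDS_PER_HOUR = 3600
SECONDS_PER_DAY = 24 * SECONDS_PER_HOUR
GB_PER_TB = 1000

tb_per_hour = PEAK_RATE_GB_PER_S * SECONDS_PER_HOUR / GB_PER_TB
tb_per_day = PEAK_RATE_GB_PER_S * SECONDS_PER_DAY / GB_PER_TB

print(f"Per hour at peak rate: {tb_per_hour} TB")
print(f"Per day at peak rate:  {tb_per_day} TB")
```

Under those assumptions the detector would produce 4.5 terabytes in an hour and 108 terabytes in a day, an upper bound since the rate is described as "up to" 1.25 gigabytes per second.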

The massive data sets are now being collected and distributed to researchers around the world through high-speed connections to the LHC Computing Grid (LCG), a network of computer clusters at scientific institutions, including the Ohio Supercomputer Center. The LCG is composed of more than 130 organizations across 34 countries and is organized into four levels, or "tiers." Tier 0 is CERN’s central computer, which distributes data to the eleven Tier 1 sites around the world.

The Tier 1 sites, in turn, send data to Tier 2 sites, which provide storage and computational analysis. Tier 3 sites involve individual computers operated at research facilities.
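The tiered layout described above can be pictured as a simple tree. The sketch below is only an illustration of that structure, not the LCG's actual software: the `Site` class and `path_to` function are hypothetical, and the Tier 1 and Tier 3 site names are placeholders, since the article does not say which Tier 1 site feeds OSC.

```python
from dataclasses import dataclass, field


@dataclass
class Site:
    """One node in a tiered grid; each site feeds sites one tier below it."""
    name: str
    tier: int
    children: list = field(default_factory=list)

    def add(self, child: "Site") -> "Site":
        # Data flows down exactly one tier at a time (0 -> 1 -> 2 -> 3).
        if child.tier != self.tier + 1:
            raise ValueError(f"{child.name} must be a Tier {self.tier + 1} site")
        self.children.append(child)
        return child


def path_to(root: Site, target: str) -> list:
    """Return the chain of site names from root down to target, or []."""
    if root.name == target:
        return [root.name]
    for child in root.children:
        rest = path_to(child, target)
        if rest:
            return [root.name] + rest
    return []


# Tier 0 is CERN; OSC is a Tier 2 site. The other names are placeholders.
cern = Site("CERN", tier=0)
tier1 = cern.add(Site("tier1-example", tier=1))
osc = tier1.add(Site("OSC", tier=2))
osc.add(Site("researcher-workstation", tier=3))

route = path_to(cern, "OSC")
print(" -> ".join(route))
```

Tracing the route to OSC walks from CERN through one Tier 1 site, which mirrors how data reaches a Tier 2 center in the description above.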

“Traditionally, researchers would do much, if not all, of their computing at one central computing center. This cannot be done with the ALICE experiments because of the large data volumes,” said Thomas J. Humanic, Ph.D., a professor of physics at The Ohio State University working on several experiments at the LHC. “OSC has been contributing computing resources to the project from the very beginning of ALICE’s distributed computing efforts, starting in 2000.”

Construction of the LHC began in 1995, when many of the necessary computational and networking technologies didn’t yet exist. New technologies, such as grid computing, were developed to meet the demands of the project. OSC was one of the first adopters of the ALICE-developed AliEn (ALICE Environment) grid infrastructure. As a Tier 2 site on the LCG, OSC this year has committed, through its normal allocations process, 30 terabytes of data storage and one million processor hours, according to Doug Johnson, a senior systems developer at OSC.

“This data will be accessed by Dr. Humanic and his OSU colleagues, as well as researchers anywhere in the world, for downloading, reconstruction and analysis,” Johnson said. “Researchers can sit at their laptops, write small programs or macros, submit the programs through the AliEn system, find the necessary ALICE data on AliEn servers and then run their jobs through centers such as OSC.”

Beyond serving as a storage and analysis resource for researchers working on the project, “OSC also has been critical in the development and testing of a computing model to analyze the ALICE data,” Humanic said. OSC had provided 300,000 CPU hours for data simulations prior to the actual LHC experiments.

--

Project lead: Thomas J. Humanic, The Ohio State University

Research title: Use of OSC computing resources by the Ohio State University Heavy Ion Group in support of ALICE computing program

Funding source: National Science Foundation