Recorded on March 15, 2023.

Client Resources

Getting Started

What can OSC do for you? OSC's high performance computing, secure data storage and technical expertise can help advance research, accelerate business innovation and support classroom instruction.

Our comprehensive services guide provides an overview of our resources and how you can use them.

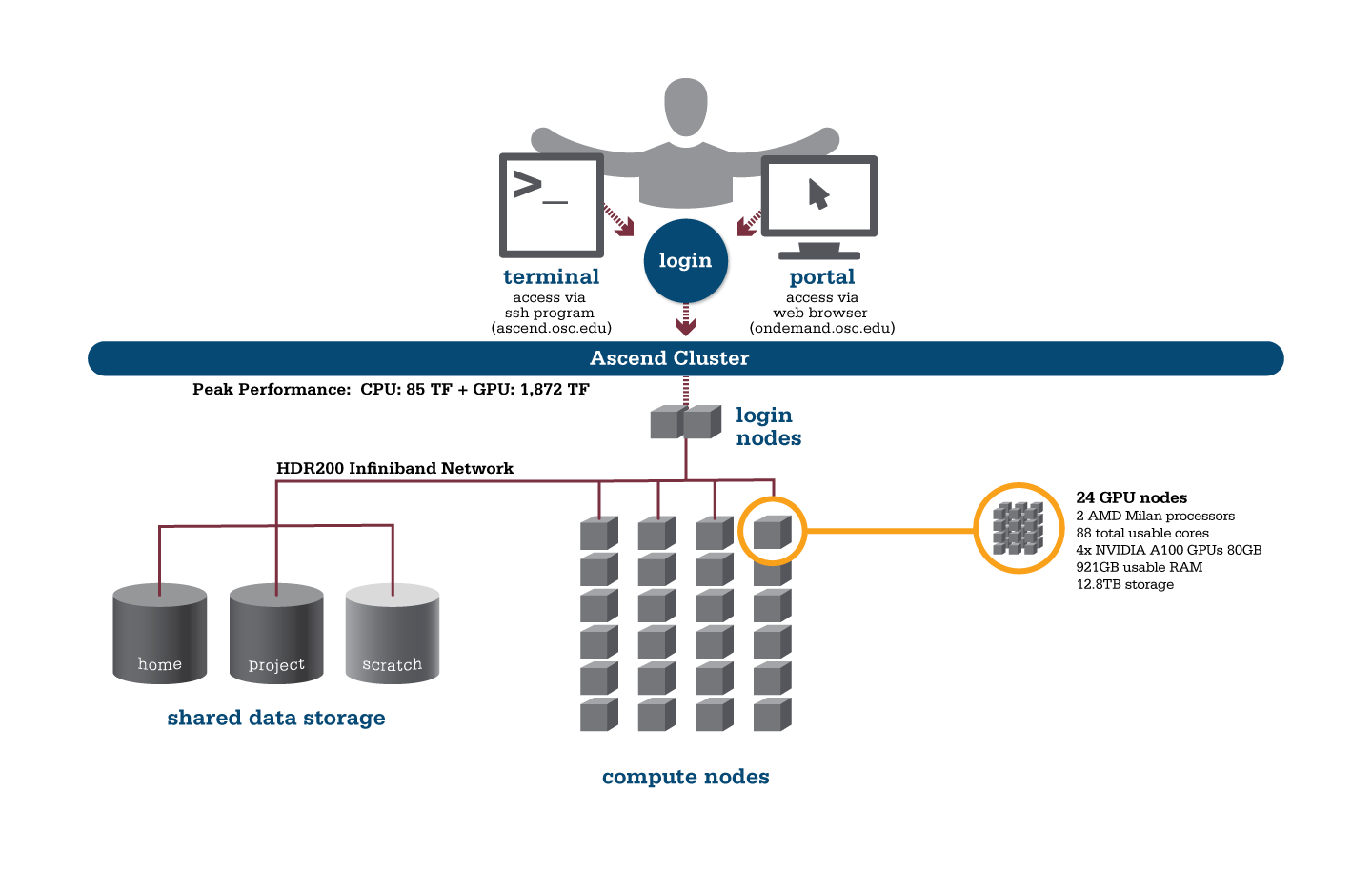

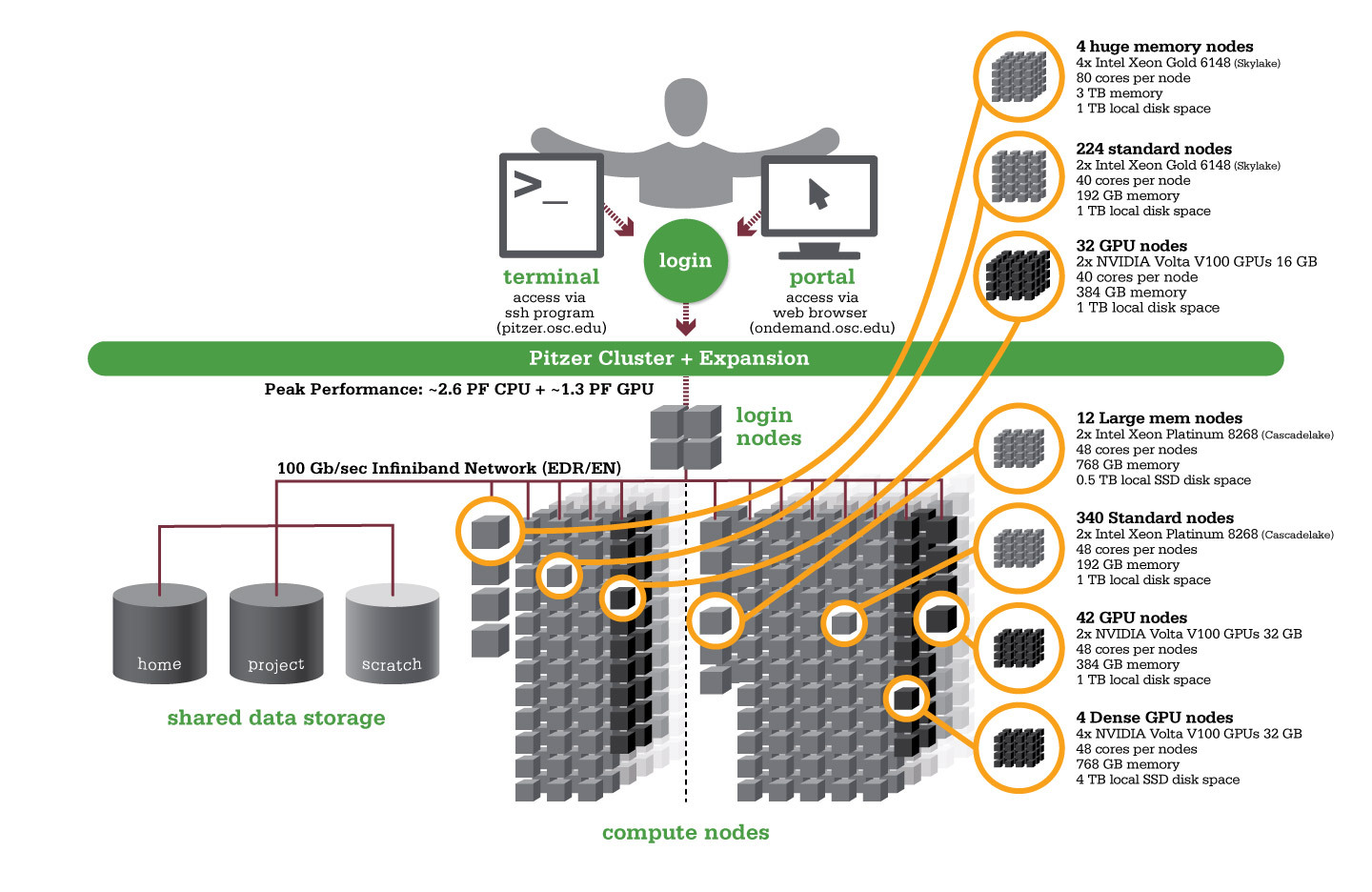

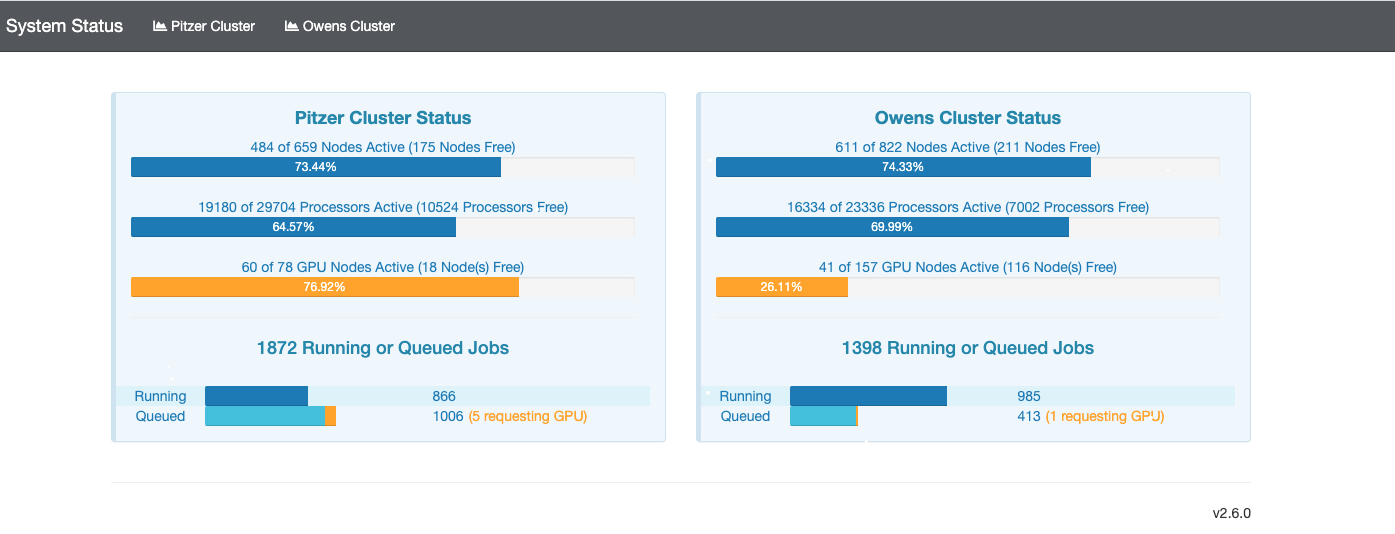

- Cluster computing: OSC offers three supercomputer clusters – Pitzer, Owens and Ascend – that all support GPU computing.

- Research data storage: Clients can make use of work-area and supplemental storage during projects as well as long-term storage of data. Transfer files through our OnDemand platform or Globus subscription.

- Software: We provide a variety of software applications to support all aspects of scientific research. Ohio researchers may access licenses for some software packages through our statewide software program.

- Research software engineering: Our staff members can provide expert consultation on topics such as computing languages, programming models, numerical libraries and development tools for parallel/threaded computing and data analysis.

- Data analytics and machine learning: Our hardware and software offerings can accommodate the intensive workloads of data analytics and machine learning work.

- Dependability: The State of Ohio Computer Center, home of our computing clusters, provides security, climate control and fully redundant systems designed to keep OSC online at all times.

- Education: Faculty and students can learn about high performance computing through our webinars, workshops and how-to guides. Classroom accounts are available to instructors seeking to incorporate HPC work into courses.

How does the academic community use OSC?

Faculty at Ohio higher education institutions use OSC to conduct original research in fields ranging from engineering and medicine to plant biology and political science. Our extensive collection of case studies shows the breadth of research work underway, how graduate and undergraduate students are gaining critical HPC experience, and how academic clients make use of the wide variety of services and expert support that OSC provides.

How does the commercial and nonprofit community use OSC?

Commercial and nonprofit clients across the United States use OSC for research, simulations, development and testing of products. Our extensive collection of case studies offers examples of this work, including clients involved with pharmaceutical drug development, the simulation of how fluid dynamics impact vehicle performance, the study of factors impacting oil and gas pipeline corrosion, and the advancement of weather-forecasting technology.

What does it cost to use OSC?

As an academic computing resource for the State of Ohio, OSC is always free for Ohio classroom usage, and academic researchers in Ohio qualify for credits that largely or completely offset fees. Commercial and nonprofit clients purchase services at set rates. Find more details about our cost structure.

What training or client support does OSC offer?

OSC provides a variety of training and support options for clients:

- Watch our prerecorded new user training

- Sign up for a live “Intro to Supercomputing at OSC” webinar

- Read our new user guide

- Sign up for a 1-on-1 virtual consultation

- Visit our training page to view a full list of opportunities

Ready to take the next step?

Interested in arranging a presentation for your group about OSC’s resources and services? Please contact us at oschelp@osc.edu.

Ready to get started now?

Request a new login ID for an existing project

New User Resource Guide

Getting Started at OSC

This guide was created for new users of OSC.

It explains how to use OSC from the very beginning of the process, from creating an account right up to using resources at OSC.

OSC account setup

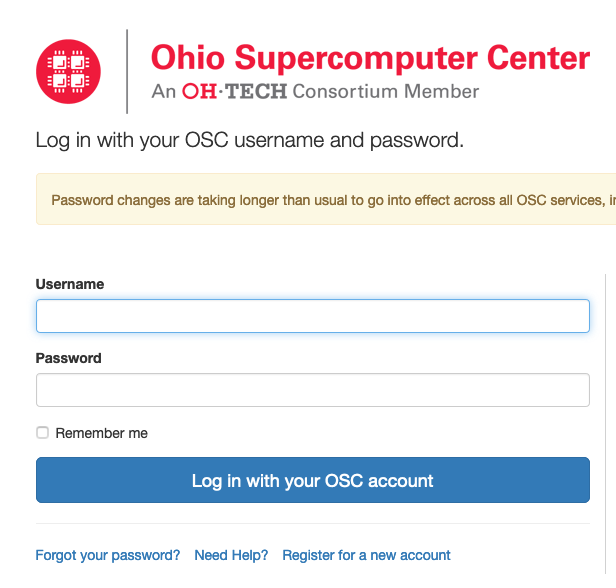

The first step is to make sure that you have an OSC username.

There are multiple ways to start this process.

You can sign up at MyOSC or be invited to use OSC via email.

Make sure to select the PI checkbox if you are a PI at your institution and want to start your own project at OSC.

Contact OSC Help with questions.

Email notifications from OSC

As soon as you register for an account with OSC, you will start receiving automated emails from MyOSC. These include password expiration notices, notifications of access to project(s), etc., and are sent from "no-reply@osc.edu." Check all of your mail folders, including spam/junk. If you do not receive these emails, please contact OSC Help.

OSC will also add you to our mailing list within a month of your account being opened. Emails will be sent from oschelp@osc.edu for system notices, monthly newsletters, event updates, etc. This information can also be found on our events page and known issues page.

Finally, we may notify clients through ServiceNow, our internal ticketing and monitoring system. These notices will come from the OH-TECH Service Desk, support@oh-tech.org.

Project and user management

Creating a project

Only users with PI status are able to create a project. See how to request PI status in manage profile information. Follow the instructions in creating projects and budgets to create a new project.

Adding new or existing users to a project

Once a project is created, the PI should add themselves to it, along with anyone else they want to permit to use OSC resources under the project.

Refer to adding/inviting users to a project for details on how to do this.

Reuse an existing project

If you already have a project that you would like to reuse, follow the same instructions found in creating projects and budget, but skip to the budget creation section.

Costs of OSC resources

If there are questions about the cost, refer to service costs.

Generally, an Ohio academic PI can create a budget for $1,000 on a project and use the annual $1,000 credit offered to Ohio academic PIs. Review service cost terms for explanations of budgets and credits at OSC.

See the complete MyOSC documentation in our Client Portal here. The OSCusage command can also provide useful details.

Classroom project support

OSC supports classrooms by making it simpler for students to use OSC resources through a customizable OnDemand interface at class.osc.edu.

Visit the OSC classroom resource guide and contact oschelp@osc.edu if you want to discuss the options there.

There will be no charges for classroom projects.

Transfer files to/from OSC systems

There are a few options for transferring files between OSC and other systems.

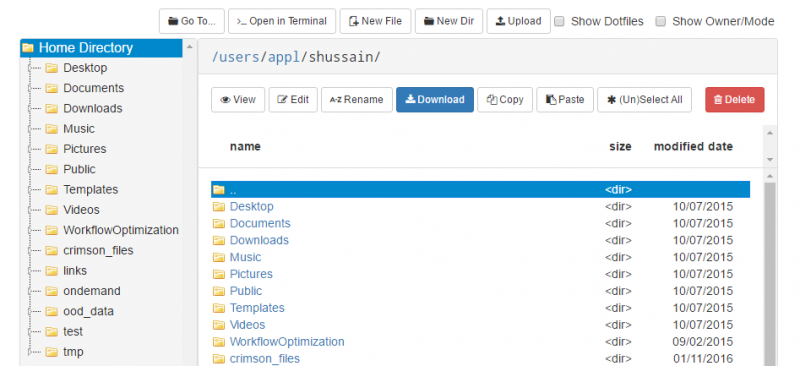

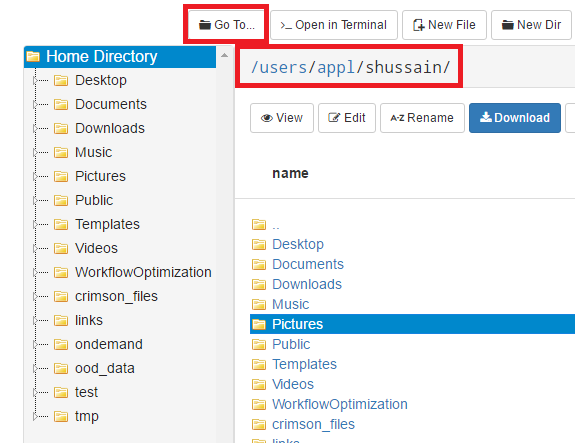

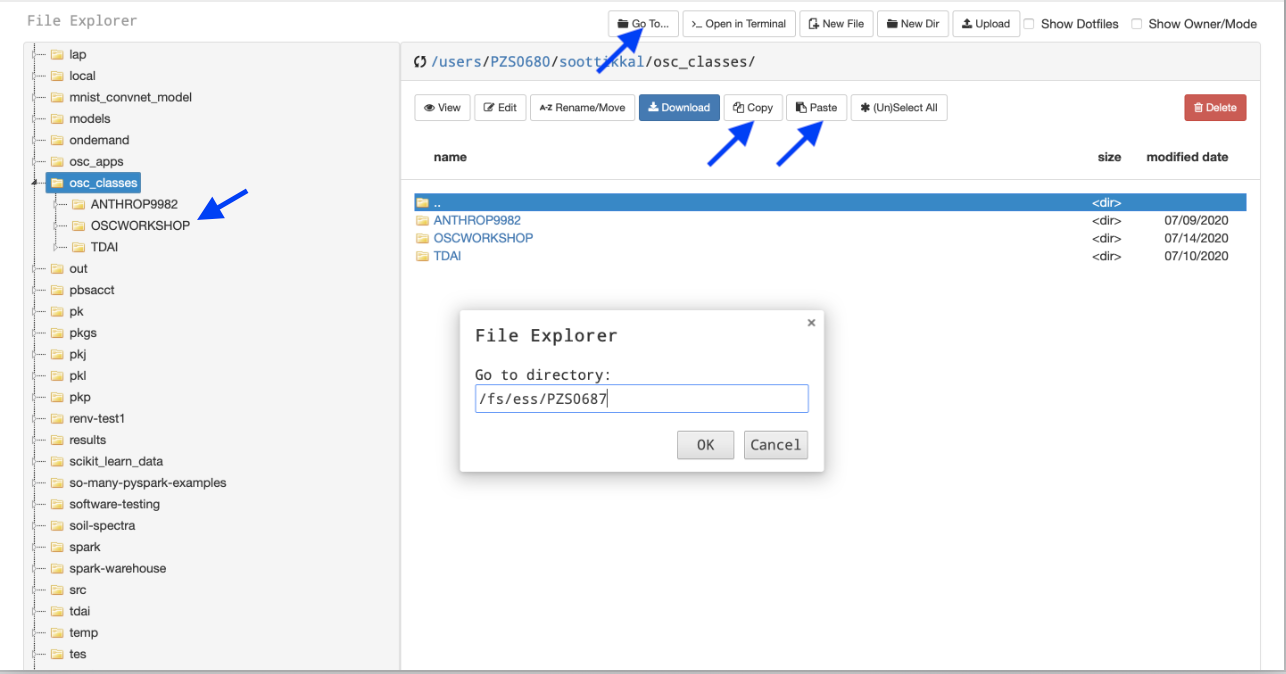

OnDemand file explorer

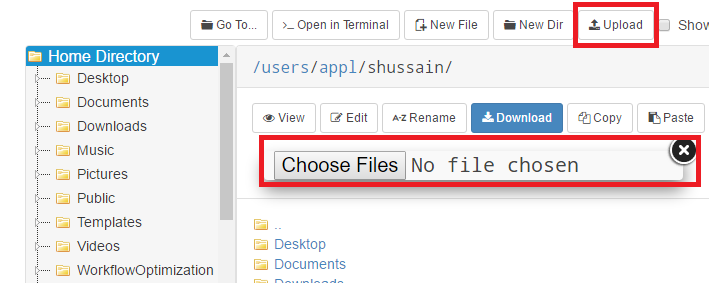

Using the OnDemand file explorer is the quickest option to get started. Just log into ondemand.osc.edu and click "File Explorer" from the navigation bar at the top of the page. From there you can upload/download files and directories.

This is a simple option, but for files or directories that are very large, it may not be best. See other options below in this case.

SFTP client software

Local software can be used to connect to OSC for downloading and uploading files.

There are quite a few options for this, and OSC does not have a preference for which one you use.

The general guidance for all of them is to connect to host sftp.osc.edu using port 22.
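As a minimal sketch of the guidance above, assuming the OpenSSH command-line `sftp` client is installed on your machine, the connection could look like the following. The function name `osc_sftp` and the `username` placeholder are illustrative, not something OSC provides.

```shell
# Open an interactive SFTP session to OSC's file-transfer host on port 22.
# The function name and the username argument are illustrative placeholders.
osc_sftp() {
  sftp -P 22 "$1@sftp.osc.edu"
}

# Usage (replace "username" with your OSC username):
#   osc_sftp username
# Inside the session, "put local_file" uploads and "get remote_file" downloads.
```

Graphical clients take the same two values: sftp.osc.edu as the host and 22 as the port.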

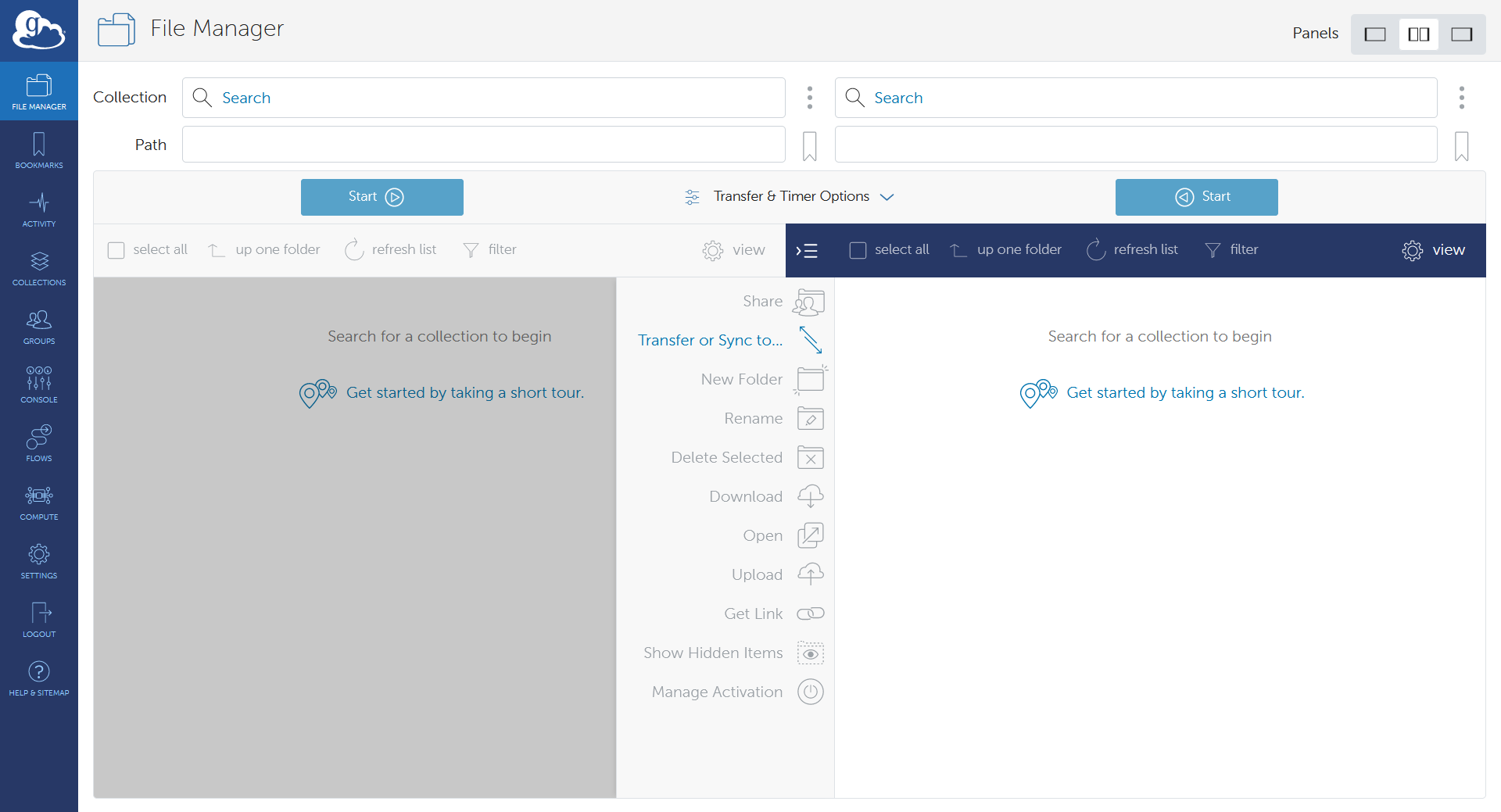

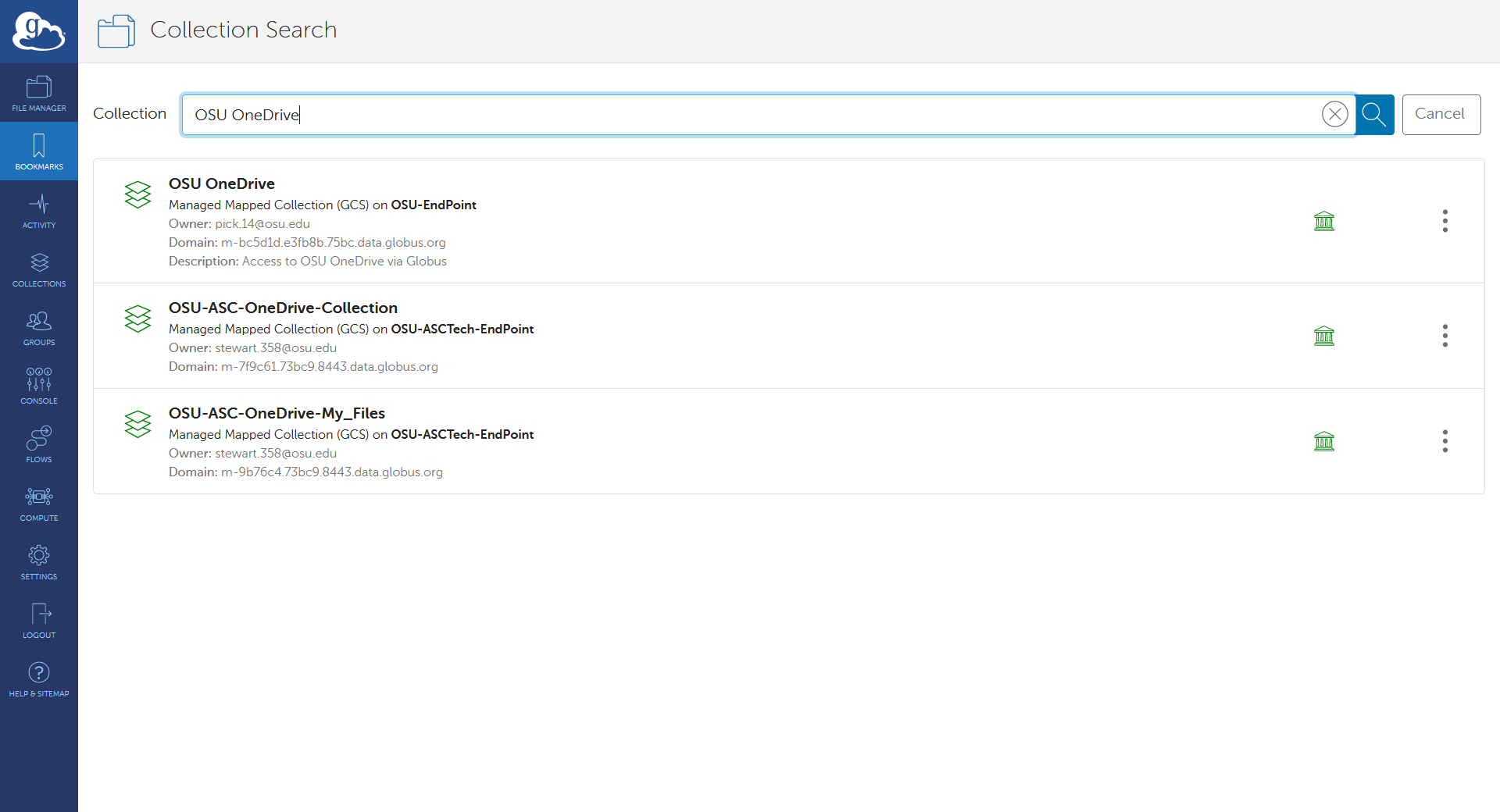

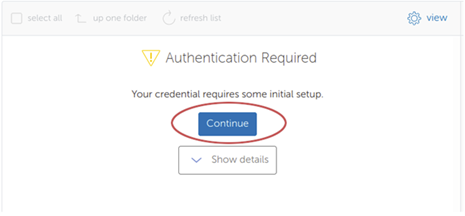

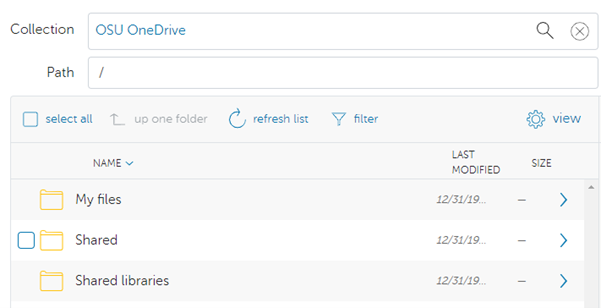

Globus

Globus is recommended for users who frequently need to transfer many large files or directories.

We have documentation detailing how to connect to our OSC endpoint in Globus and how to set up a local endpoint on your machine with Globus.

Request extra storage for a project

A project can request more storage than the standard amount offered by home directories.

On the project details page, submit a "Request Storage Change" and a ticket will be created for OSC staff to create the project space quota.

See service costs for details.

Getting started using OSC

Finally, after the above setup, you can start using OSC resources. Usually you have some setup that needs to be performed before you can really start using OSC, like creating a custom environment, gaining access to preinstalled software or installing software to your home directory that is not already available.

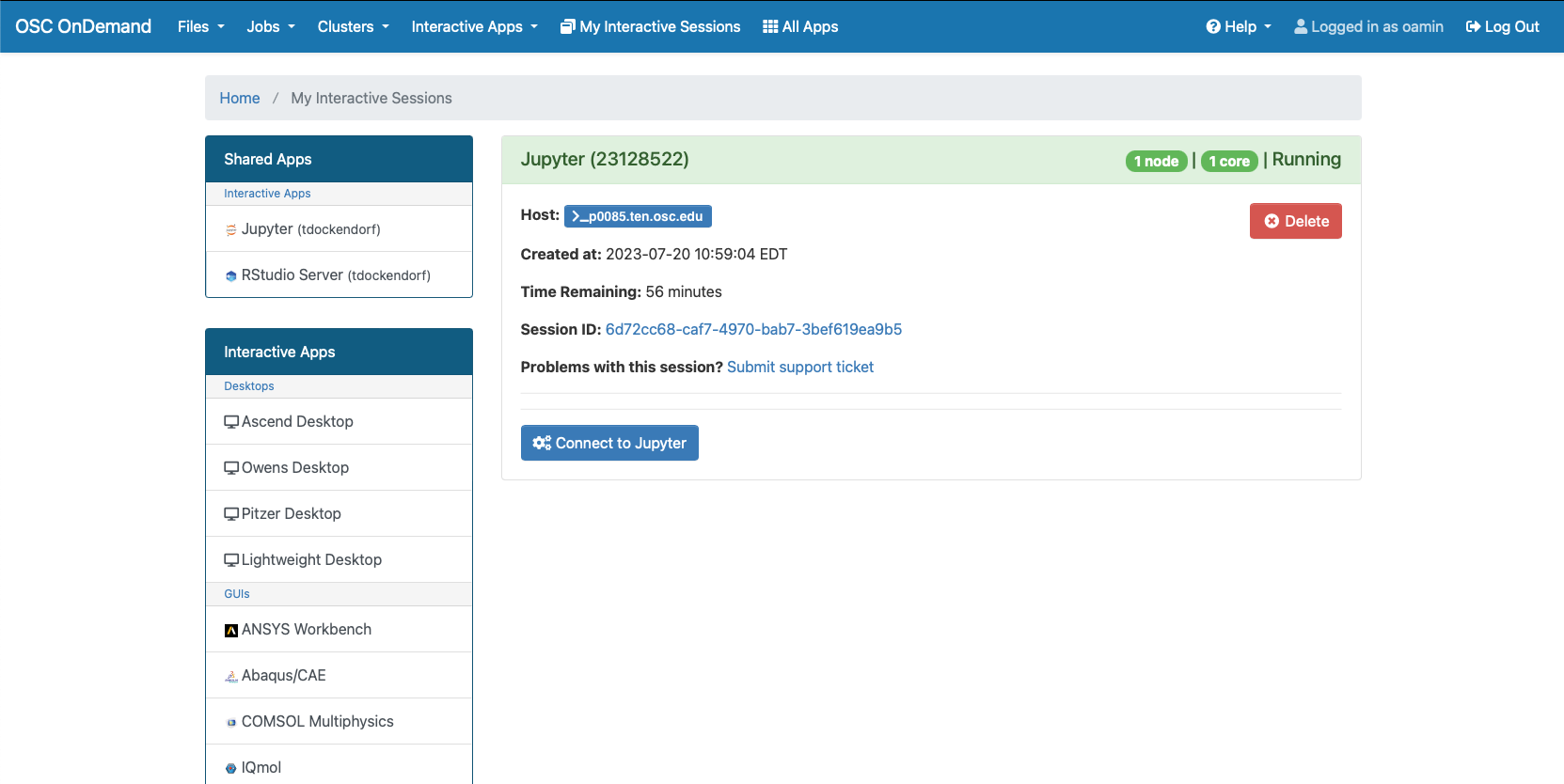

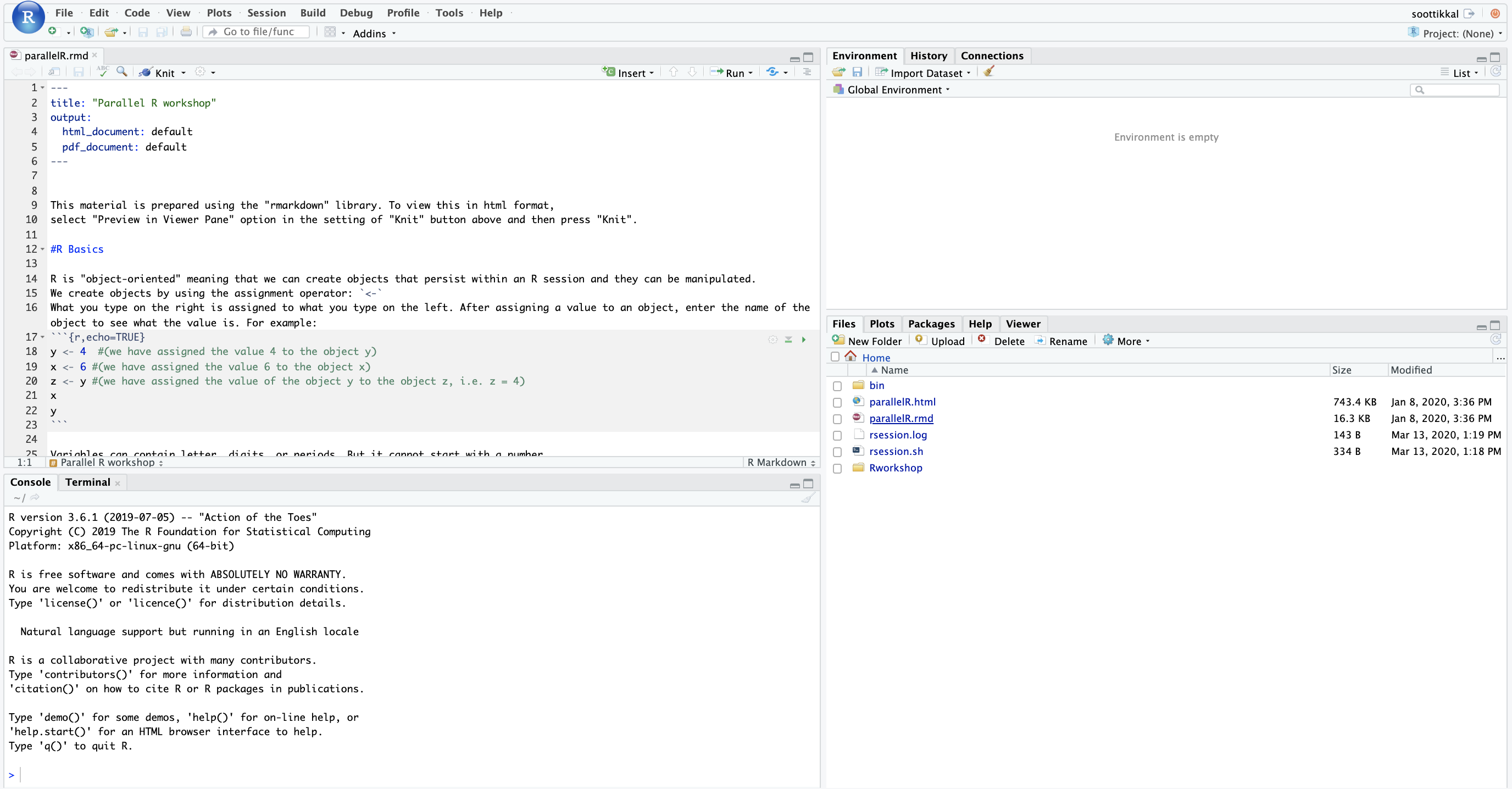

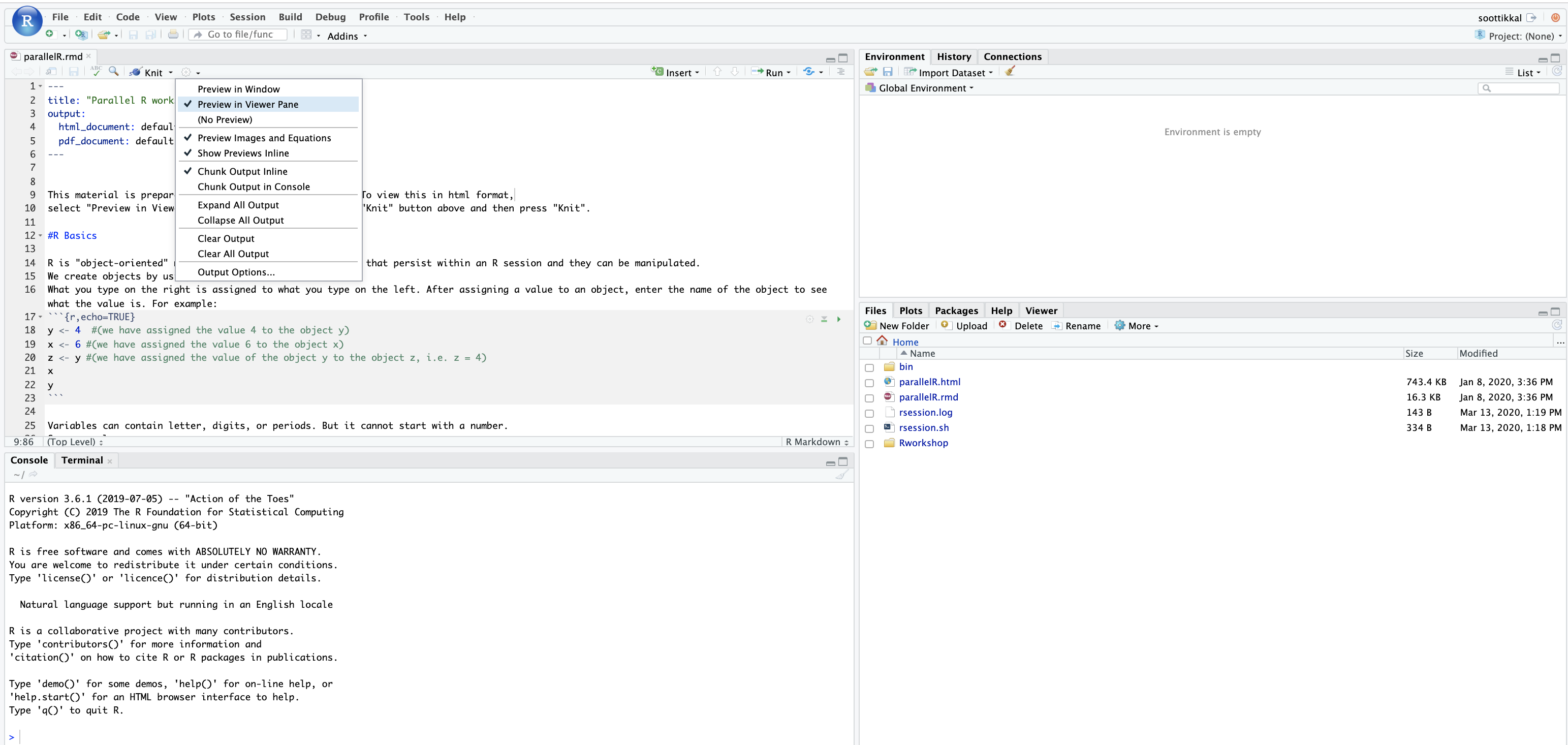

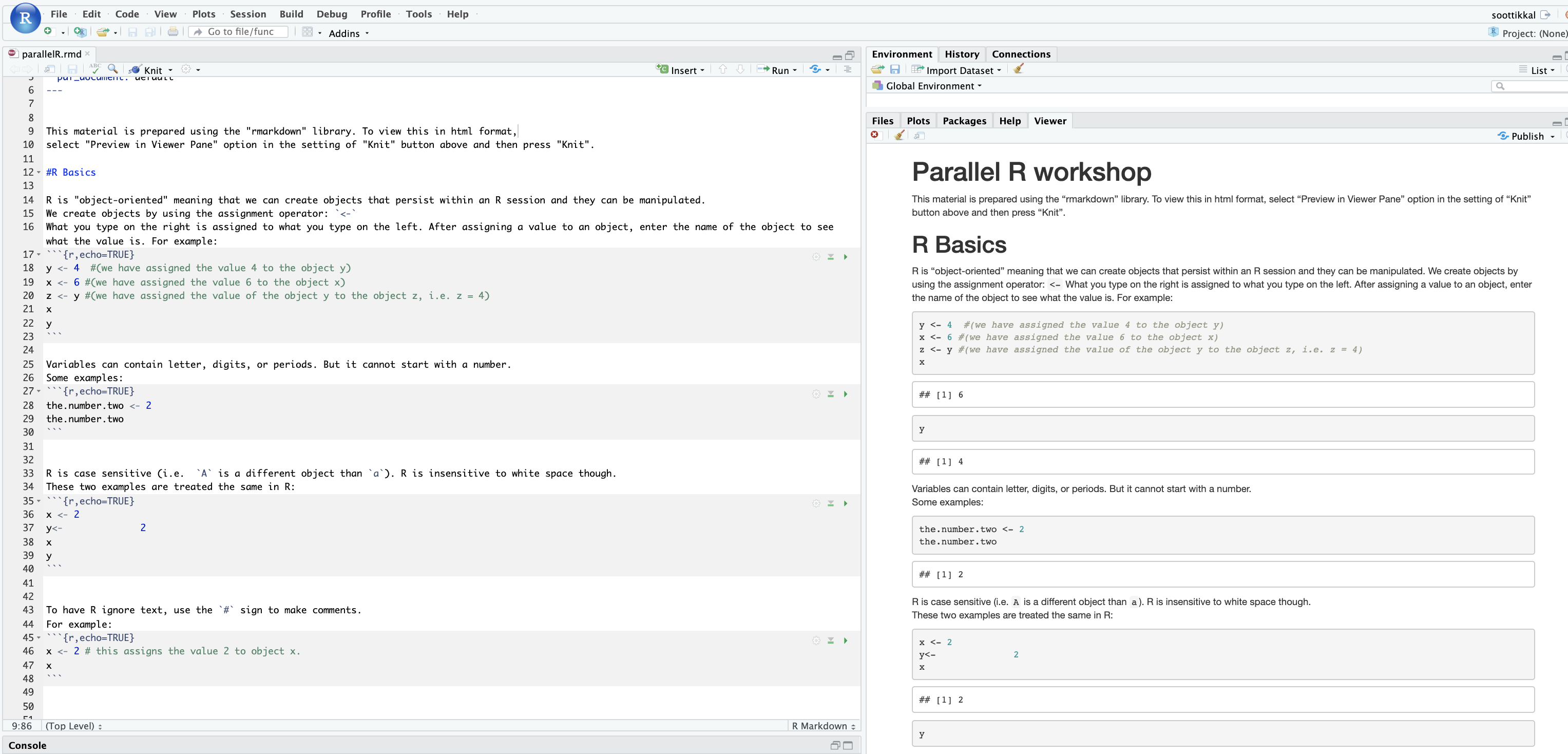

Interactive desktop session

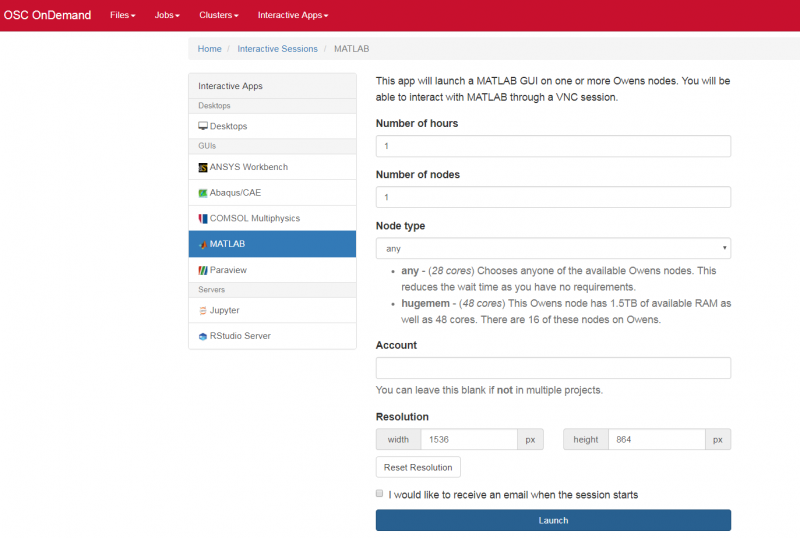

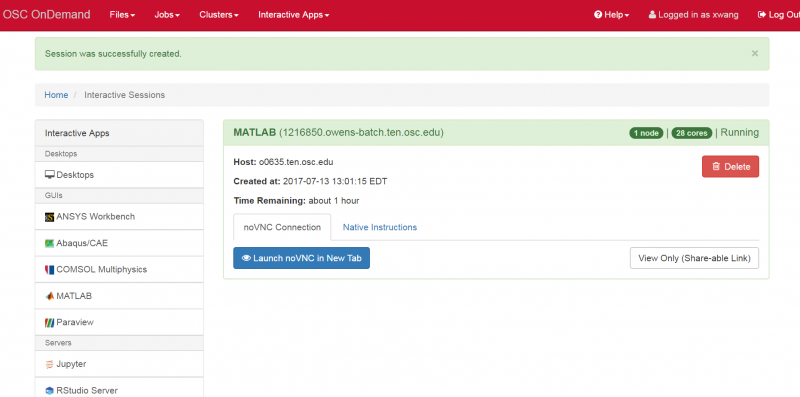

The best place to start is by visiting ondemand.osc.edu, logging in and starting an interactive desktop session.

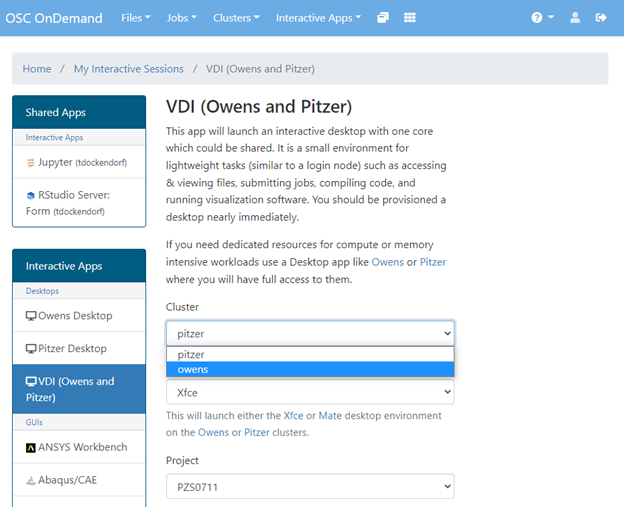

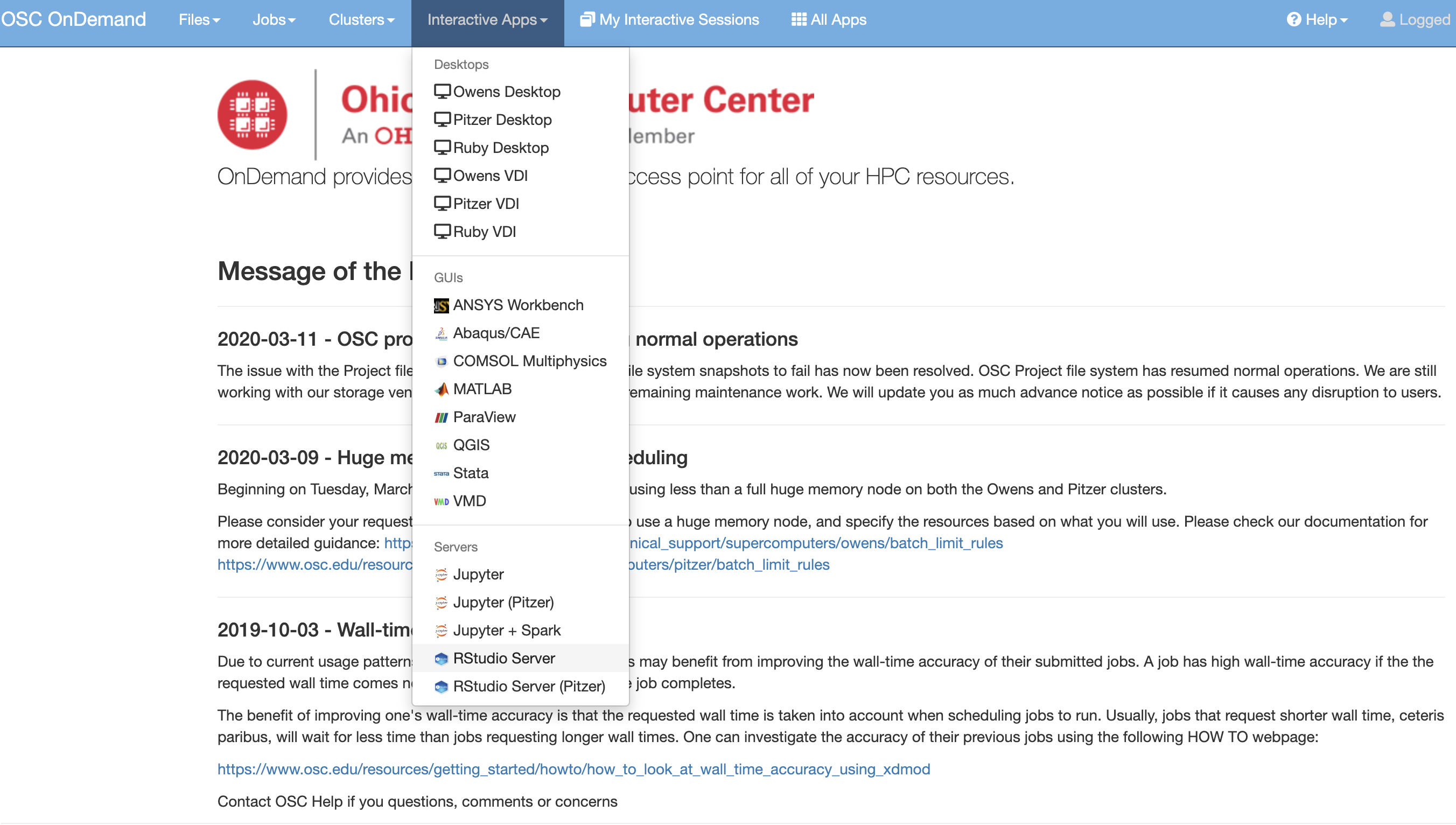

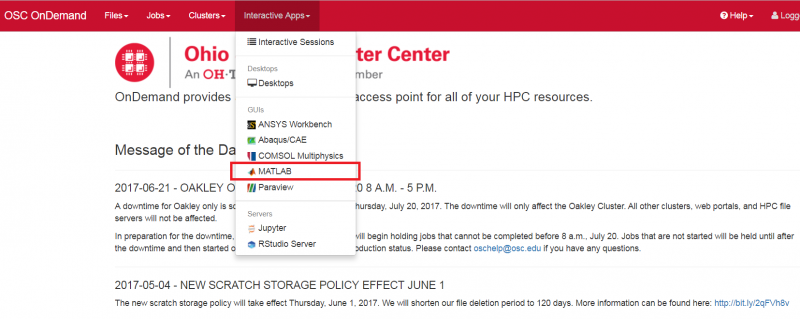

Look for the navigation bar at the top of the page and select Interactive Apps, then Owens Desktop.

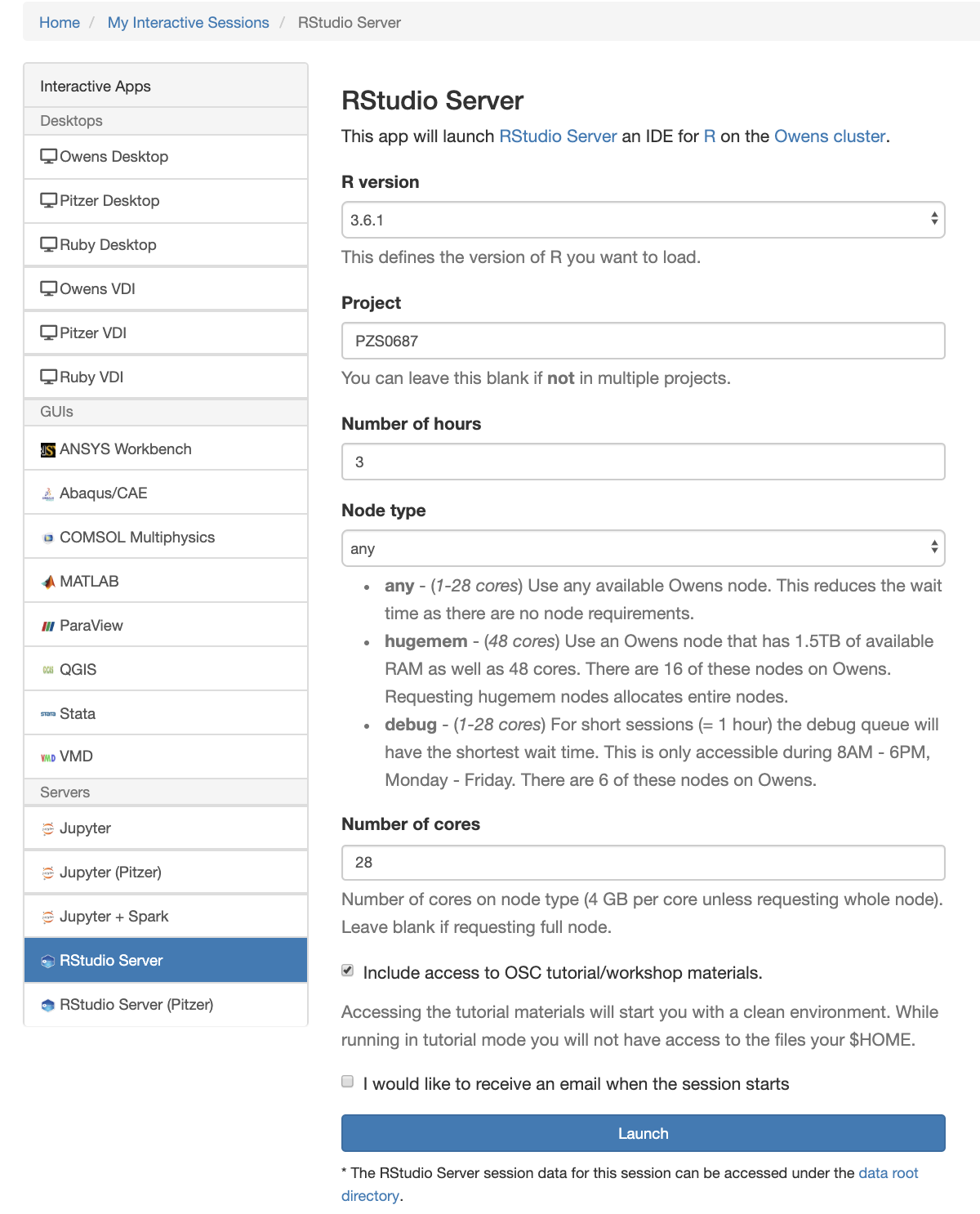

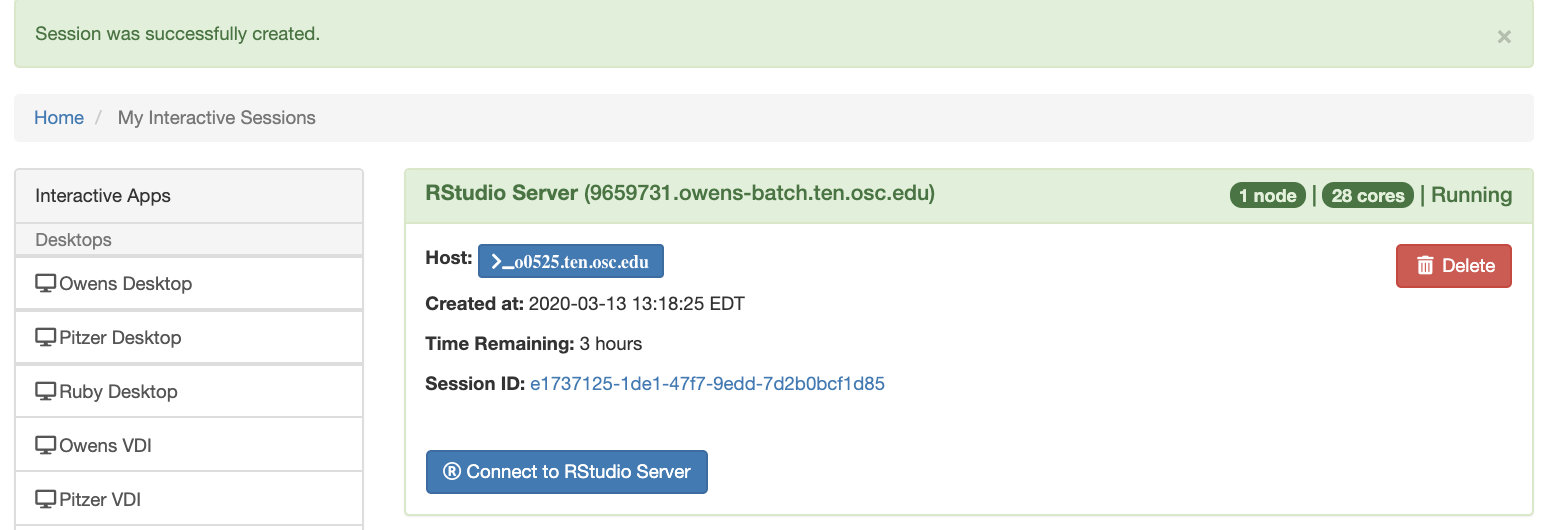

Notice that there are a lot of fields, but the most important ones, for now, are cores and the number of hours.

Try using only a single core at first, until you are more familiar with the system and can decide when more cores will be needed.

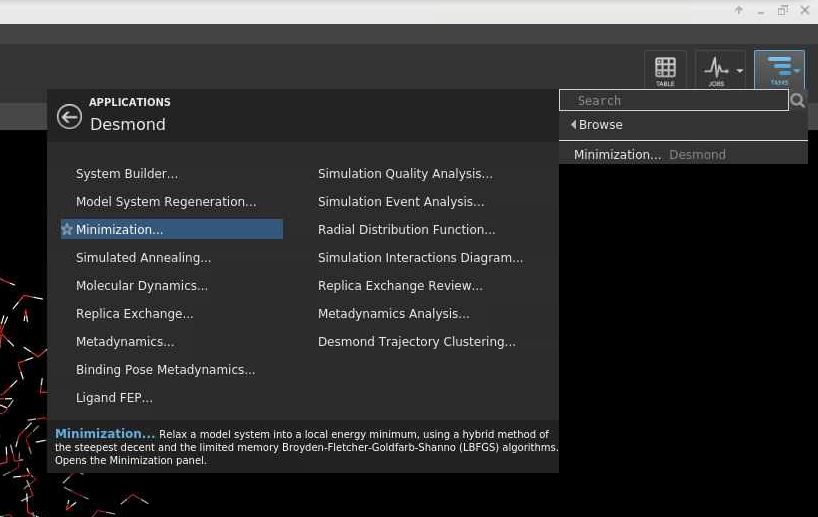

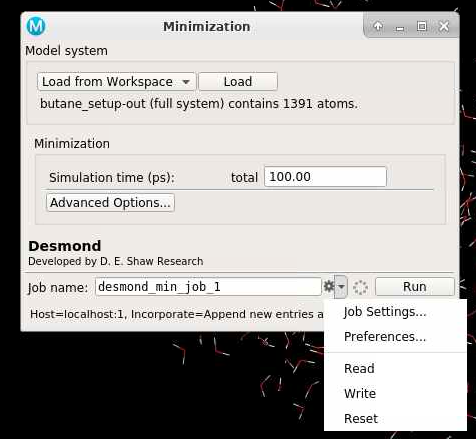

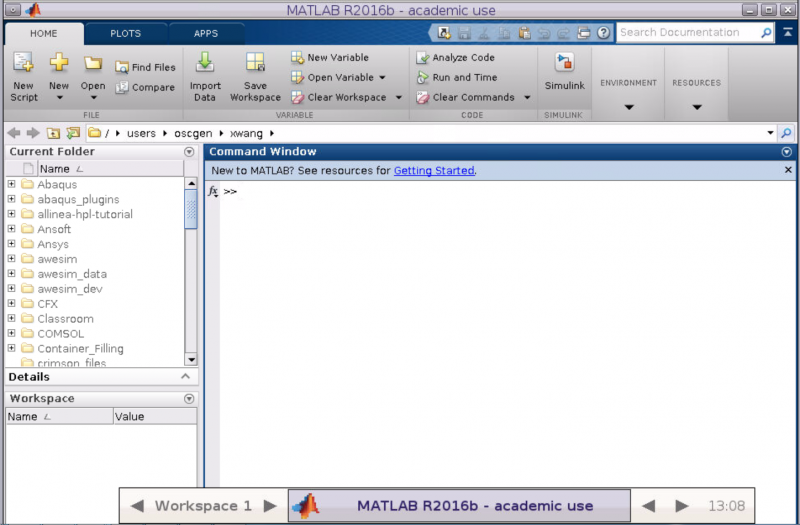

Other interactive apps

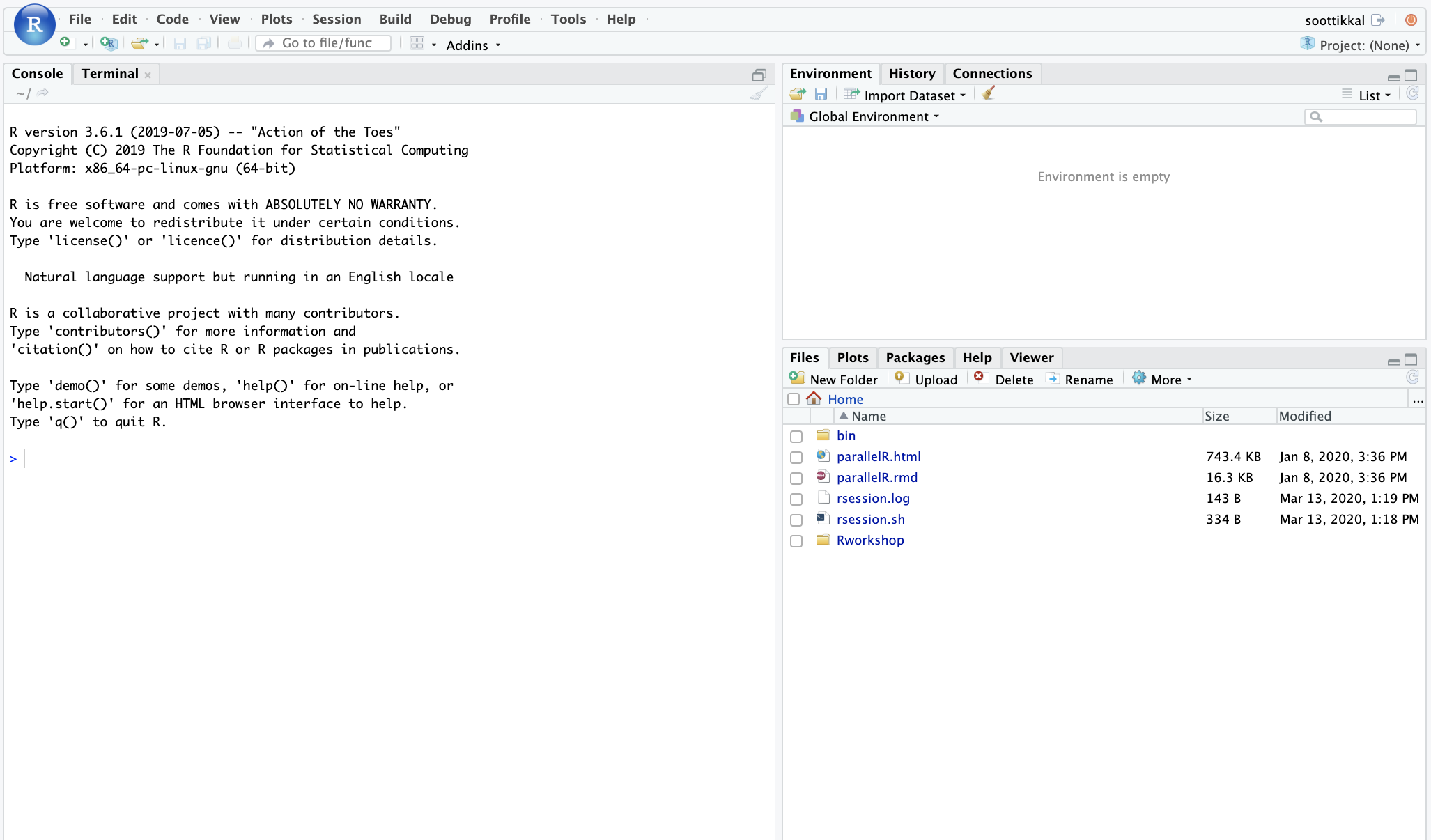

If there is specific software in the Interactive Apps list that you want to use, then go ahead and start a session with it. Just remember to change the cores to one until you understand what you need.

Getting to a terminal without starting a desktop session

A terminal session can also be started in OnDemand by clicking Clusters then Owens Shell Access.

In this terminal you can run the commands described in the sections below on environment setup and software use/installation.

Environment setup to install packages for different programming languages

Python and R are two of the common programming languages for which users need to set up an environment.

See add python packages with conda or R software for details.

There are other options, so please browse the OSC software listing.

OSC managed software

All the software already available at OSC can be found in the software listing.

Each page has some information on how to use the software from a command line. If you are unfamiliar with the command line in Linux, then try reviewing some Linux tutorials.

For now, try to get comfortable with moving to different directories on the filesystem, creating and editing files, and using the module commands from the software pages.
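The module commands mentioned above can be tried with a small sketch like the one below. It assumes the standard `module` command (`module list`, `module avail`) is available in your cluster session. The function name `module_basics` is hypothetical, and the guard simply prints a hint when the command is missing, for example on your own laptop.

```shell
# Show loaded and available modules when the module command exists;
# otherwise print a hint. The function name is a hypothetical helper.
module_basics() {
  if command -v module >/dev/null 2>&1; then
    module list    # modules currently loaded in this shell
    module avail   # modules that can be loaded
  else
    echo "module command not found; try this on an OSC cluster"
  fi
}
module_basics
```

To use a package from the software listing, the usual next step is `module load` followed by the module name given on that package's software page.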

Install software not provided by OSC

Software not already installed on OSC systems can be installed locally to one's home directory without admin privileges. Try reviewing locally installing software at OSC.

Batch system basics

After getting set up at OSC and understanding the use of interactive sessions, you should start looking into how to utilize the batch system to have your software run programmatically.

The benefit of the batch system is that a user can submit what we call a job (a request to reserve resources) and have it execute from start to finish without any further interaction.

A good place to start is by reviewing job scripts.
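To make the idea of a job script concrete, here is a minimal sketch. It assumes the clusters use the Slurm scheduler (the `#SBATCH` directives and the `sbatch` command belong to Slurm). The file name `hello_job.sh`, the job name, and the project code `PAS1234` are hypothetical placeholders. The example writes the script to a file and checks its syntax, so it can be tried on any machine with Bash.

```shell
# Write a minimal job script. The #SBATCH lines assume the Slurm scheduler;
# PAS1234 is a hypothetical project code and hello_job.sh a hypothetical name.
cat > hello_job.sh <<'EOF'
#!/bin/bash
#SBATCH --job-name=hello_osc
#SBATCH --account=PAS1234
#SBATCH --nodes=1
#SBATCH --ntasks-per-node=1
#SBATCH --time=00:10:00

# Everything below runs on the compute node once the job starts.
echo "Job ${SLURM_JOB_ID:-unknown} running on $(hostname)"
EOF

# Check the script for shell syntax errors without running it.
bash -n hello_job.sh && echo "hello_job.sh: syntax OK"

# On a cluster, the script would then be submitted with: sbatch hello_job.sh
```

The request here is deliberately small (one node, one task, ten minutes), in line with the earlier advice to start with a single core until you know what your work needs.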

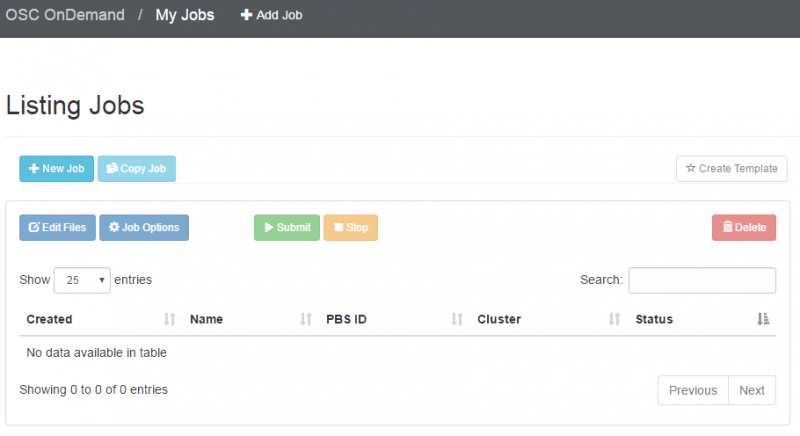

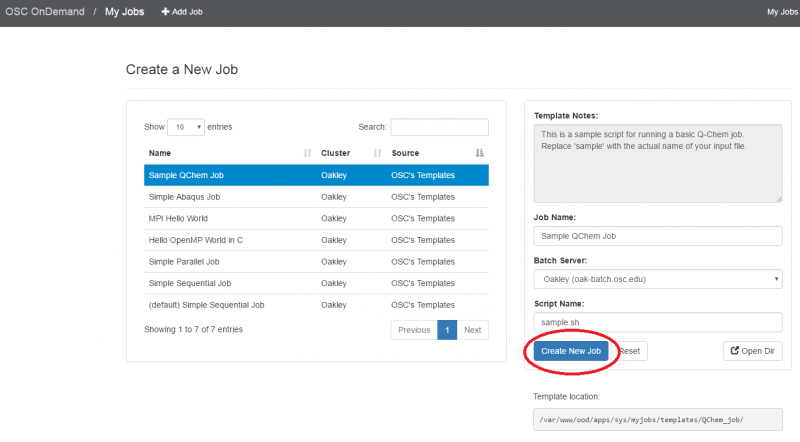

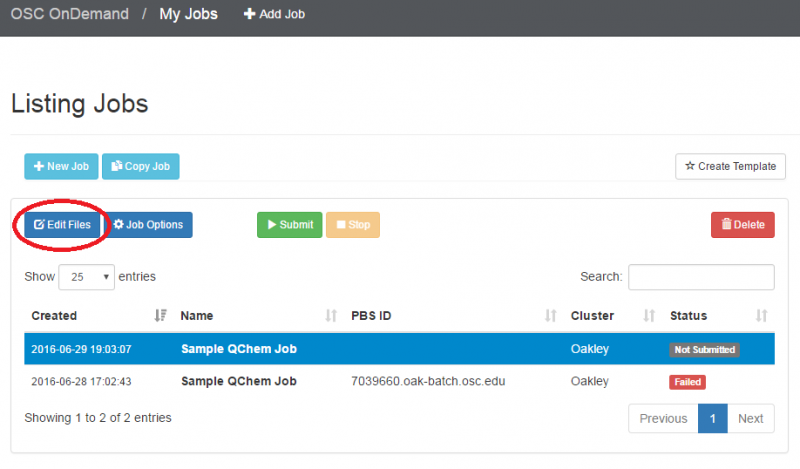

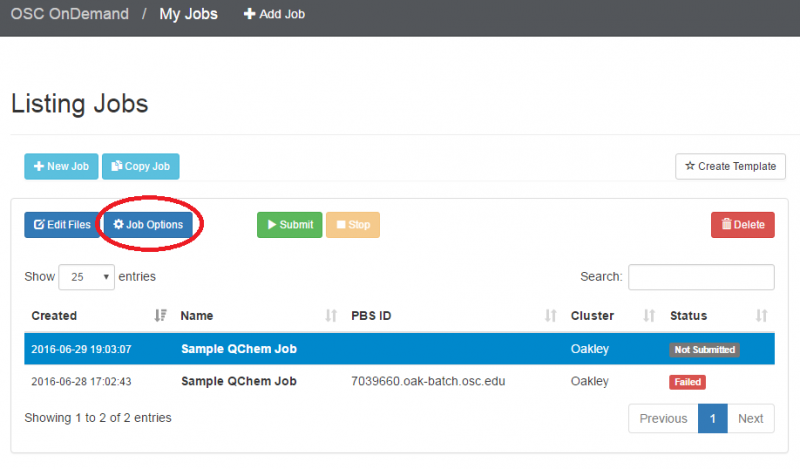

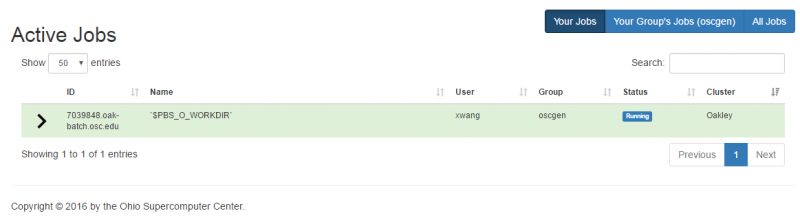

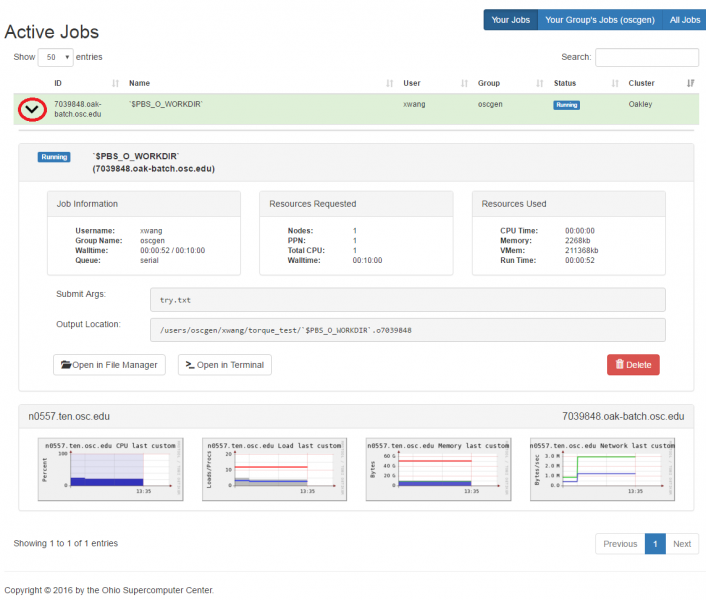

OnDemand job composer

OnDemand provides a convenient method for editing and submitting jobs in the job composer.

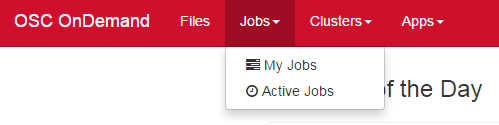

It can be used by logging into ondemand.osc.edu and clicking Jobs at the top and then Job Composer. A short help message should be shown on basic usage.

Training

OSC offers periodic training both at our facility and at universities across the state on a variety of topics. Additionally, we will partner with other organizations to enable our users to access additional training resources.

We are currently in the process of updating our training strategy and documents. If you are interested in having us come to your campus to provide training, please contact OSC Help. You can also contact us if there is a specific training need you would like to see us address.

To get an introduction to HPC, see our HPC Basics page.

To learn more about using the command line, see our UNIX Basics page.

For detailed instructions on how to perform tasks on our systems, check out HOWTO articles.

Still Need Help?

Before contacting OSC Help, please check to see if your question is answered in either the FAQ or the Knowledge Base. Many of the questions asked by both new and experienced OSC users are answered on these web pages.

If you still cannot solve your problem, please do not hesitate to contact OSC Help:

Phone: (614) 292-1800

Email: oschelp@osc.edu

Submit your issue online

Schedule virtual consultation

Basic and advanced support is available Monday through Friday, 9 a.m. to 5 p.m., except for these listed holidays.

We recommend following HPCNotices on X to get up-to-the-minute information on system outages and important operations-related updates.

HPC Basics

New! Online Training Courses

Check out our new online training courses for an introduction to OSC services. You can get more information on the OSC Training page.

Overview

HPC, or High Performance Computing, generally refers to aggregating computing resources together in order to perform more computing operations at once.

Basic definitions

- Core (processor) - A single unit that executes a single chain of instructions.

- Node - a single computer or server.

- Cluster - many nodes connected together which are able to coordinate between themselves.

HPC Workflow

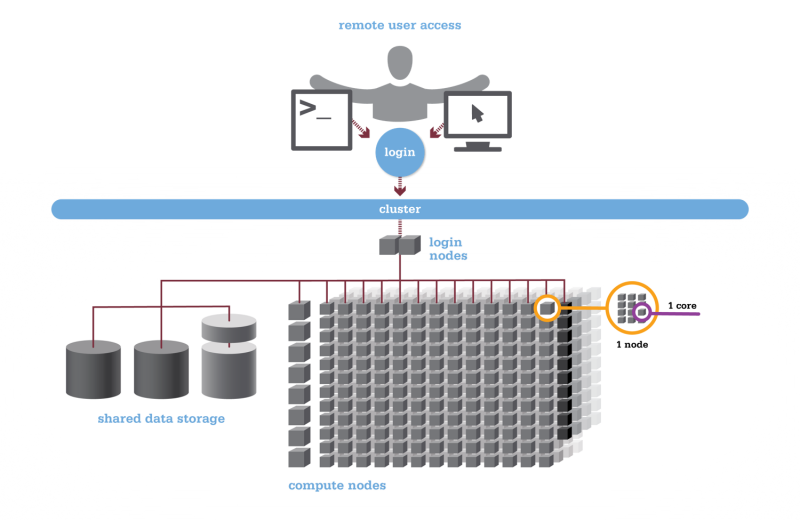

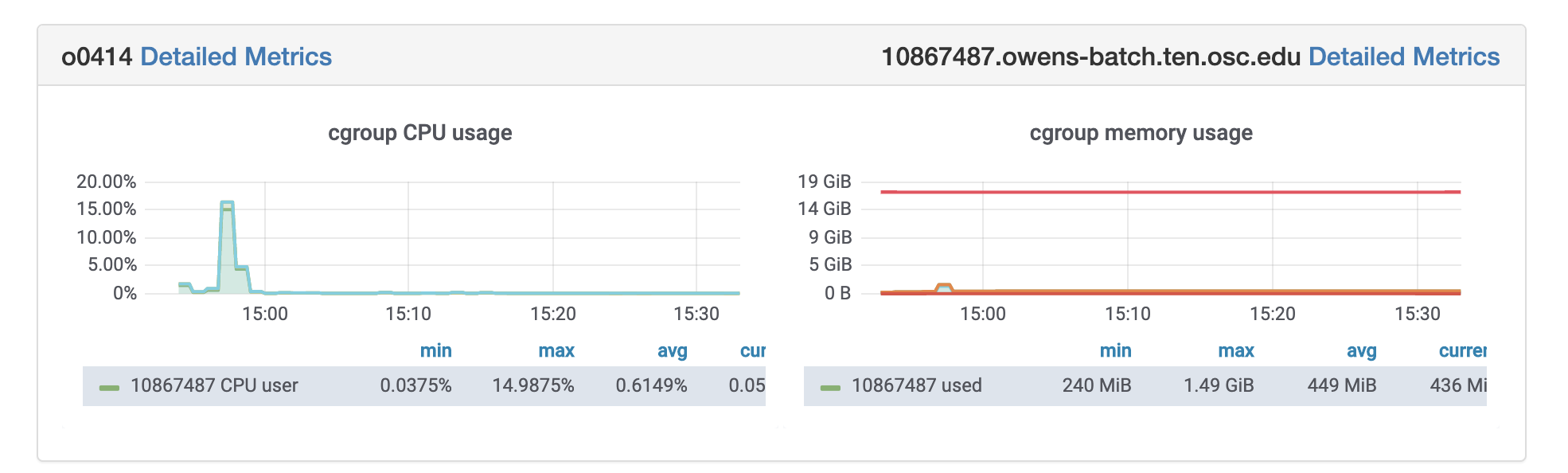

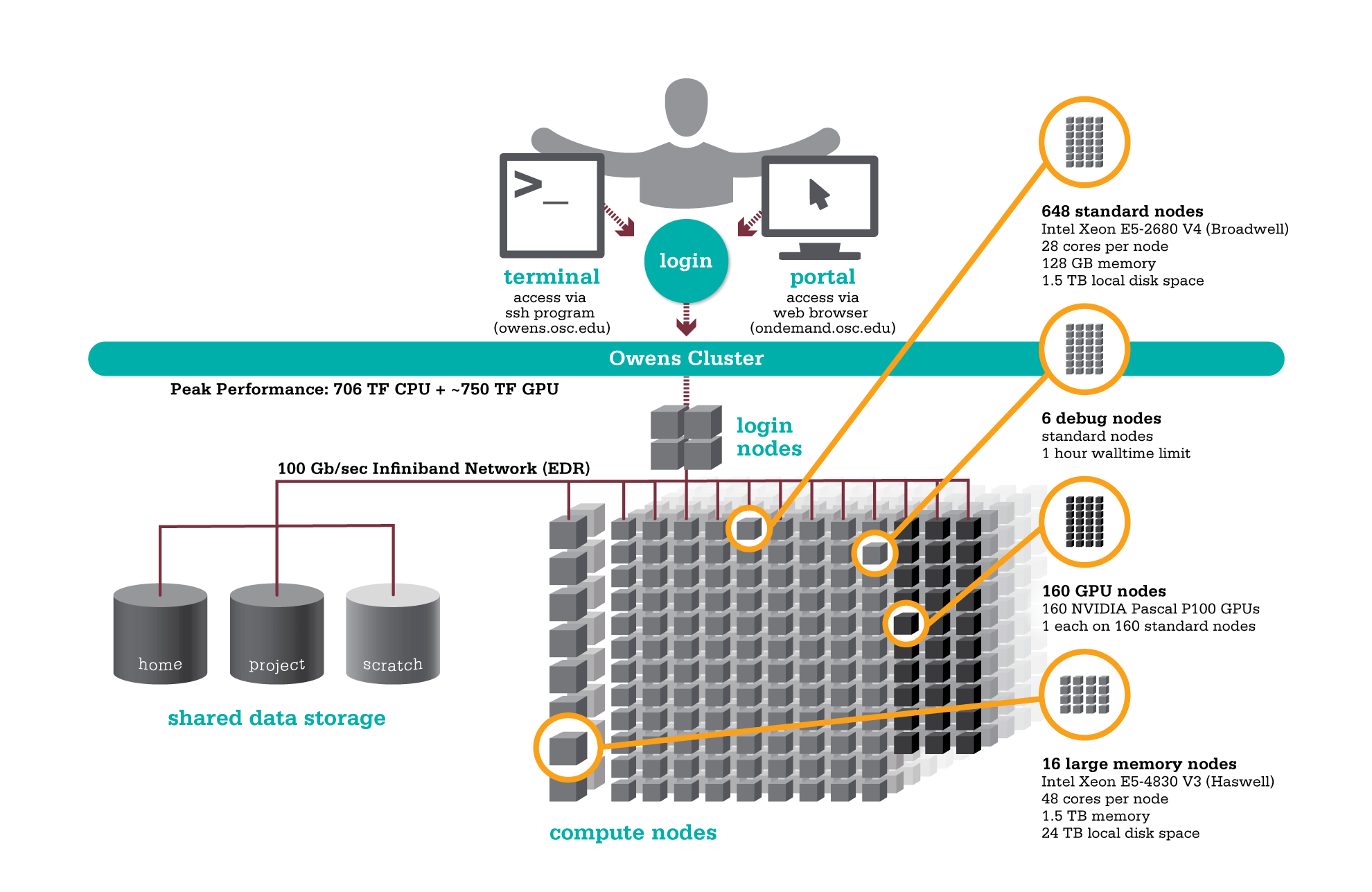

Using HPC is a little different from running programs on your desktop. When you log in, you’ll be connected to one of the system’s “login nodes”. These nodes serve as a staging area for you to marshal your data and submit jobs to the batch scheduler. Your job will then wait in a queue along with other researchers' jobs. Once the resources it requires become available, the batch scheduler will run your job on a subset of our hundreds of “compute nodes”. You can see the overall structure in the accompanying diagram.

HPC Citizenship

An important point about the diagram above is that OSC clusters are a collection of shared, finite resources. When you connect to the login nodes, you are sharing their resources (CPU cycles, memory, disk space, network bandwidth, etc.) with a few dozen other researchers. The same is true of the file servers when you access your home or project directories, and can even be true of the compute nodes.

For most day-to-day activities you should not have to worry about this, and we take precautions to limit the impact that others might have on your experience. That said, there are a few use cases that are worth watching out for:

- The login nodes should only be used for light computation; any CPU- or memory-intensive operations should be done using the batch system. A good rule of thumb is that if you wouldn't want to run a task on your personal desktop because it would slow down other applications, you shouldn't run it on the login nodes. (See also: Interactive Jobs.)

- I/O-intensive jobs should copy their files to fast, temporary storage, such as the local storage allocated to jobs or the Scratch parallel filesystem.

- When running memory-intensive or potentially unstable jobs, we highly recommend requesting whole nodes. By doing so you prevent other users' jobs from being impacted by your job.

- If you request partial nodes, be sure to consider the amount of memory available per core. (See: HPC Hardware.) If you need more memory, request more cores. It is perfectly acceptable to leave cores idle in this situation; memory is just as valuable a resource as processors.

In general, we just encourage our users to remember that what you do may affect other researchers on the system. If you think something you want to do or try might interfere with the work of others, we highly recommend that you contact us at oschelp@osc.edu.
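The advice above about requesting more cores to get more memory comes down to a ceiling division. The sketch below illustrates it; the function name `cores_for_memory` and the figures (30 GB needed, 4 GB per core) are hypothetical, so check the HPC Hardware page for the real per-core memory on each cluster.

```shell
# cores_for_memory MEM_NEEDED_GB MEM_PER_CORE_GB
# Prints the smallest whole number of cores whose combined memory covers
# the request. The function name and sample figures are hypothetical.
cores_for_memory() {
  local need=$1 per_core=$2
  echo $(( (need + per_core - 1) / per_core ))   # integer ceiling division
}

cores_for_memory 30 4   # prints 8
```

In this example a job needing 30 GB would request 8 cores even if it only computes on one of them, which matches the guidance that idle cores are acceptable when memory is the limiting resource.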

Getting Connected

There are two ways to connect to our systems. The traditional way will require you to install some software locally on your machine, including an SSH client, SFTP client, and optionally an X Windows server. The alternative is to use our zero-client web portal, OnDemand.

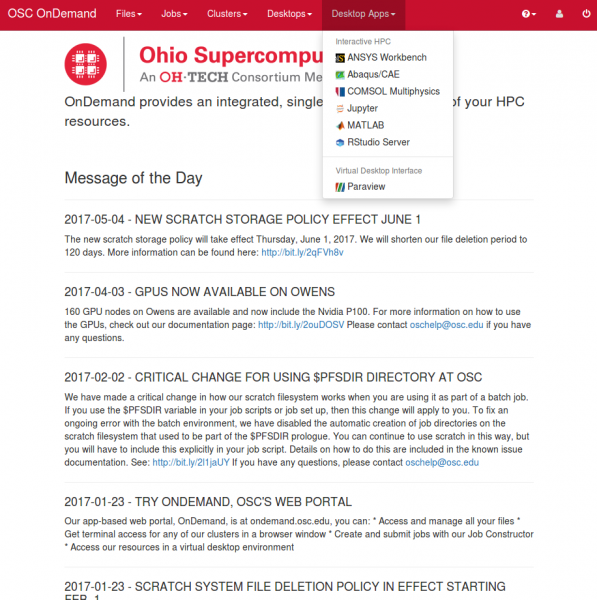

OnDemand Web Portal

OnDemand is our "one stop shop" for access to our High Performance Computing resources. With OnDemand, you can upload and download files, create, edit, submit, and monitor jobs, run GUI applications, and connect via SSH, all via a web browser, with no client software to install and configure.

You can access OnDemand by pointing a web browser to ondemand.osc.edu. Documentation is available here. Any recent version of a common web browser should be sufficient to connect.

Using Traditional Clients

Required Software

In order to use our systems, you'll need two main pieces of software: an SFTP client and an SSH client.

SFTP ("SSH File Transfer Protocol") clients allow you to transfer files between your workstation and our shared filesystem in a secure manner. We recommend the following applications:

- FileZilla: A high-performance open-source client for Windows, Linux, and OS X. A guide to using FileZilla is available here (external).

- CyberDuck: A high quality free client for Windows and OS X.

- sftp: The command-line utility sftp comes pre-installed on OS X and most Linux systems.

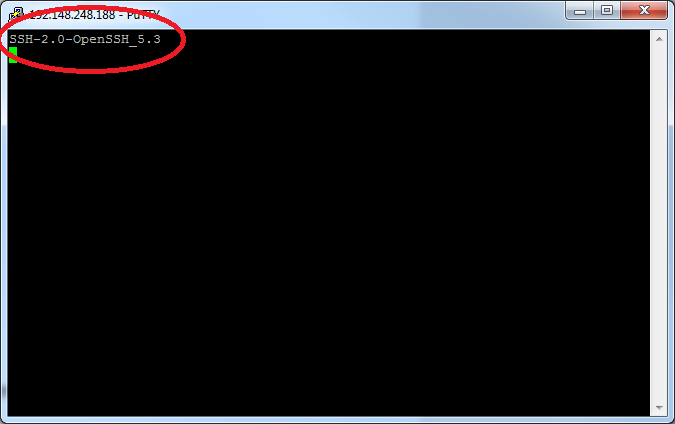

SSH ("Secure Shell") clients allow you to open a command-line-based "terminal session" with our clusters. We recommend the following options:

- PuTTY: A simple, open-source client for Windows.

- Secure Shell for Google Chrome: A free, HTML5-based SSH client for Google Chrome.

- ssh: The command-line utility ssh comes pre-installed on OS X and most Linux systems.

A third, optional piece of software you might want to install is an X Windows server, which will be necessary if you want to run graphical, windowed applications like MATLAB. We recommend the following X Windows servers:

- Xming: Xming offers a free version of their X Windows server for Microsoft Windows systems.

- X-Win32: StarNet's X-Win32 is a commercial X Windows server for Microsoft Windows systems. They offer a free, thirty-day trial.

- X11.app/XQuartz: X11.app, an Apple-supported version of the open-source XQuartz project, is freely available for OS X.

In addition, Windows users can use OSC Connect, a native Windows application developed by OSC that provides a launcher for secure file transfer, VNC, terminal, and web-based services, as well as preconfigured management of secure tunnel connections. See this page for more information on OSC Connect.

Connecting via SSH

The primary way you'll interact with the OSC clusters is through the SSH terminal. See our supercomputing environments for the hostnames of our current clusters. You should not need to do anything special beyond entering the hostname.

Once you've established an SSH connection, you will be presented with some informational text about the cluster you've connected to followed by a UNIX command prompt. For a brief discussion of UNIX command prompts and what you can do with them, see the next section of this guide.

Transferring Files

To transfer files, use your preferred SFTP client to connect to:

sftp.osc.edu

You may see a warning message that includes an SSH key fingerprint. Verify that the fingerprint in the message matches one of the SSH key fingerprints listed here, then type yes.
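If you would like to view the host's fingerprints before connecting, the sketch below is one way to do it, assuming the OpenSSH tools `ssh-keyscan` and `ssh-keygen` are installed locally. The function name `show_sftp_fingerprints` is hypothetical. The output is only meaningful when compared against the fingerprint list that OSC publishes.

```shell
# Print the fingerprints of the host keys that sftp.osc.edu presents.
# Defined as a function so that nothing touches the network until it is called.
# The function name is a hypothetical helper, not an OSC-provided tool.
show_sftp_fingerprints() {
  ssh-keyscan sftp.osc.edu 2>/dev/null | ssh-keygen -lf -
}

# Usage:
#   show_sftp_fingerprints
```

Only answer yes to the connection prompt if one of the printed fingerprints matches an entry in OSC's published list.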

Since process times are limited on the login nodes, large file transfers sent directly to pitzer.osc.edu or other login nodes may terminate partway through. The sftp.osc.edu host is specially configured to avoid this issue, so we recommend it for all your file transfers.

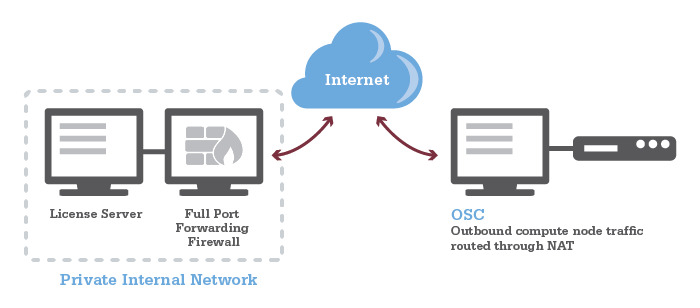

Firewall Configuration

See our Firewall and Proxy Settings page for information on how to configure your firewall to allow connection to and from OSC.

Setting up X Windows (Optional)

With an X Windows server you will be able to run graphical applications on our clusters that display on your workstation. To do this, you will need to launch your X Windows server before connecting to our systems. Then, when setting up your SSH connection, you will need to be sure to enable "X11 Forwarding".

For users of the command-line ssh client, you can do this by adding the "-X" option. For example, the below will connect to the Pitzer cluster with X11 forwarding:

$ ssh -X username@pitzer.osc.edu

If you are connecting with PuTTY, the checkbox to enable X11 forwarding can be found in the configuration tree under "Connection → SSH → X11".

For other SSH clients, consult their documentation to determine how to enable X11 forwarding.
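Once connected, a quick way to see whether forwarding took effect is to look at the `DISPLAY` environment variable, which `ssh -X` sets on the remote side. The sketch below assumes it is run in the cluster session after connecting. The function name `check_x11` is hypothetical.

```shell
# Report whether X11 forwarding appears to be active in the current session.
# ssh -X sets DISPLAY on the remote side when forwarding is enabled.
# The function name is a hypothetical helper.
check_x11() {
  if [ -n "${DISPLAY:-}" ]; then
    echo "X11 forwarding looks active (DISPLAY=$DISPLAY)"
  else
    echo "DISPLAY is not set; reconnect with ssh -X"
  fi
}
check_x11
```

A set `DISPLAY` is a hint rather than proof, because the graphical application still needs a running X Windows server on your workstation.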

Budgets and Accounts

The Ohio Supercomputer Center provides services to clients from a variety of types of organizations. The methods for gaining access to the systems are different between Ohio academic institutions and everyone else.

Ohio academic clients

Primarily, our users are Ohio-based and academic, and the vast majority of our resources will continue to be consumed by Ohio-based academic users. See the "Ohio Academic Fee Model FAQ" section on our service costs webpage.

Other clients

Other users (business, non-Ohio academic, nonprofit, hospital, etc.) interested in using Center resources may purchase services at a set rate available on our price list. Expert consulting support is also available.

Other computing centers

For users interested in gaining access to larger resources, please contact OSC Help. We can assist you in applying for resources at an NSF or XSEDE site.

Managing an OSC project

Once a project has been created, the PI can create accounts for users by adding them through the client portal. Existing users can also be added. More information can be found on the Project Menu documentation page.

I need additional resources for my existing project and/or I received an email that my allocation is exhausted

Academic PIs who want a new project or need to update the budget balance on an existing project should see our creating projects and budget documentation.

I wish to use OSC to support teaching a class

We provide special classroom projects for this purpose at no cost. You may request one through the client portal after creating an account. The request will need to include a syllabus or a similar document.

I don't think I fit in the above categories

Please contact us in order to discuss options for using OSC resources.

Project Applications

Application if you are employed by an Ohio Academic Institution

Use of computing resources and services at OSC is subject to the Ohio Supercomputer Center (OSC) Code of Ethics for Academic Users. Ohio Academic Clients are eligible for highly subsidized access to OSC resources, with fees only accruing after a credit provided is exhausted. Clients from an Ohio academic institution that expect to use more than the credit should consult with their institution on the proper guidance for requesting approval to be charged for usage. See the academic fee structure FAQ page for more information.

Eligible principal investigators (PIs) at Ohio academic institutions are able to request projects at OSC, but should consult with their institution before incurring charges. To be an eligible PI at OSC, you must be eligible to hold PI status at your college, university, or research organization administered by an Ohio academic institution (i.e., be a full-time, permanent academic researcher or tenure-track faculty member at an Ohio college or university). Students, post-doctoral fellows, visiting scientists, and others who wish to use the facilities may be authorized users on projects headed by an eligible PI.

Once PIs have received their project information, they can manage users for the project. PIs of OSC projects are responsible for updating their authorized user list, their outside funding sources, and their publications and presentations that cite OSC. All of these tasks can be accomplished using the client portal; please review the documentation for more information. PIs are also responsible for monitoring their project's budget (balance) and for requesting a new budget (balance) before it goes negative, as projects with negative balances are restricted.

OSC's online project request, available through our Client Portal, is part of an electronic system that leads you through the process step by step. Before you begin to fill in the application form, especially if you are new to the process, look at the academic fee structure page. You can save a partially completed project request for later use.

If you need assistance, please contact OSC Help.

Application if you are NOT employed by an Ohio Academic Institution

Researchers from businesses, non-Ohio academic institutions, nonprofits, hospitals or other organizations (which do not need to be based in Ohio) who wish to use OSC's resources should complete the Other Client request form available here. All clients not affiliated with and approved by an Ohio academic institution must sign a service agreement, provide a $500 deposit, and pay for resource usage per a standard price list.

Letter of Commitment for Outside Funding Proposals

OSC will provide a letter of commitment users can include with their account proposals for outside funding, such as from the Department of Energy, National Institutes of Health, National Science Foundation (limited to standard text, per NSF policy), etc. This letter details OSC's commitment to supporting research efforts of its users and the facilities and platforms we provide our users. [Note: This letter does not waive the normal OSC budget process; it merely states that OSC is willing to support such research.] The information users must provide for the letter is:

- address to which the letter should be directed (e.g. NSF, Department, mailing address)

- name of funding agency's representative

- name of the proposal, as well as names and home institutions of the Co-PIs

- budget amount per year you would apply for if you were to receive funding

- number of years of proposed research

- solicitation number

Send an email with your request for the commitment letter to OSC Help or submit it online. We will prepare a draft for your approval and then send you the final PDF for your proposal submission. Please allow at least two working days for this service.

Letter of Support for Outside Funding Proposals

Letters of support may be subject to strict and specific guidelines, and may not be accepted by your funding agency.

If you need a letter of support, please see above "Letter of Commitment for Outside Funding Proposals".

Applying at NSF Centers

Researchers requiring additional computing resources should consider applying for allocations at National Science Foundation Centers. For more information, please write to oschelp@osc.edu, and your inquiry will be directed to the appropriate staff member.

We require that you cite OSC in any publications or reports that result from projects supported by our services.

UNIX Basics

OSC HPC resources use an operating system called "Linux", a UNIX-like operating system first released on 5 October 1991. Linux is by a wide margin the most popular operating system choice for supercomputing, with over 90% of the Top 500 list running some variant of it. In fact, many common devices run Linux variant operating systems, including game consoles, tablets, routers, and even Android-based smartphones.

While Linux supports desktop graphical user interface configurations (as does OSC), in most cases file manipulation will be done via the command line. Since all jobs run in batch will be non-interactive, they by definition will not allow the use of GUIs. Thus, we strongly suggest new users become comfortable with basic command-line operations, so that they can learn to write scripts to submit to the scheduler that will behave as intended. We have provided some tutorials for self-paced learning that explain the basics, from moving about the file system, to extracting archives, to modifying your environment.

Linux Command Line Fundamentals

This tutorial teaches you about the Linux command line and shows you some useful commands. It also shows you how to get help in Linux by using the man and apropos commands.

Linux Tutorial

This tutorial guides you through the process of creating and submitting a batch script on one of our compute clusters. This is a Linux tutorial which uses batch scripting as an example, not a tutorial on writing batch scripts. The primary goal is not to teach you about batch scripting, but for you to become familiar with certain Linux commands that can be used either in a batch script or at the command line. There are other pages on the OSC web site that go into the details of submitting a job with a batch script.

Linux Shortcuts

This tutorial shows you some handy time-saving shortcuts in Linux. Once you have a good understanding of how the command line works, you will want to learn how to work more efficiently.

Tar Tutorial

This tutorial shows you how to download tar (tape archive) files from the internet and how to deal with large directory trees of files.
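As a small taste of what the tar tutorial covers, the sketch below bundles a directory tree into a compressed archive, lists its contents, and extracts it elsewhere. It assumes a `tar` with gzip support (GNU tar or bsdtar) and uses a throwaway directory from `mktemp`. All file and directory names are hypothetical, and downloading archives is not shown.

```shell
# Build a tiny directory tree in a scratch location (names are hypothetical).
demo=$(mktemp -d)
mkdir -p "$demo/data/run1"
echo "result" > "$demo/data/run1/out.txt"

# Create a gzip-compressed archive of the "data" tree.
tar -czf "$demo/data.tar.gz" -C "$demo" data

# List the archive contents without extracting.
tar -tzf "$demo/data.tar.gz"

# Extract the archive into a separate directory.
mkdir "$demo/restore"
tar -xzf "$demo/data.tar.gz" -C "$demo/restore"
```

Packing many small files into one archive before moving them also tends to make file transfers simpler to manage.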

Linux Command Line Fundamentals

Description

This tutorial teaches you about the Linux command line and shows you some useful commands. It also shows you how to get help in Linux by using the man and apropos commands.

For more training and practice using the command line, you can find many great tutorials. Here are a few:

https://www.learnenough.com/command-line-tutorial

https://cvw.cac.cornell.edu/Linux/

http://www.ee.surrey.ac.uk/Teaching/Unix/

https://www.udacity.com/course/linux-command-line-basics--ud595

More Advanced:

http://moo.nac.uci.edu/~hjm/How_Programs_Work_On_Linux.html

Prerequisites

None.

Introduction

Unix is an operating system that comes with several application programs. Other examples of operating systems are Microsoft Windows, Apple OS and Google's Android. An operating system is the program running on a computer (or a smartphone) that allows the user to interact with the machine -- to manage files and folders, perform queries and launch applications. In graphical operating systems, like Windows, you interact with the machine mainly with the mouse. You click on icons or make selections from the menus. The Unix that runs on OSC clusters gives you a command line interface. That is, the way you tell the operating system what you want to do is by typing a command at the prompt and hitting return. To create a new folder you type mkdir. To copy a file from one folder to another, you type cp. And to launch an application program, say the editor emacs, you type the name of the application. While this may seem old-fashioned, you will find that once you master some simple concepts and commands you are able to do what you need to do efficiently and that you have enough flexibility to customize the processes that you use on OSC clusters to suit your needs.
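The two commands named above, mkdir and cp, can be tried safely with a short sketch like this one. It works inside a temporary directory created with `mktemp`, and the file and folder names are hypothetical.

```shell
# Work in a scratch directory so nothing in your home directory is touched.
workdir=$(mktemp -d)

# Create a sample file (the names here are hypothetical).
echo "sample input" > "$workdir/input.txt"

# Create a new folder, then copy the file into it.
mkdir "$workdir/project"
cp "$workdir/input.txt" "$workdir/project/"

# List the folder to confirm the copy; this prints input.txt
ls "$workdir/project"
```

Each line is what you would type at the prompt, pressing return after it.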

Common Tasks on OSC Clusters

What are some common tasks you will perform on OSC clusters? Probably the most common scenario is that you want to run some of the software we have installed on our clusters. You may have your own input files that will be processed by an application program. The application may generate output files which you need to organize. You will probably have to create a job script so that you can execute the application in batch mode. To perform these tasks, you need to develop a few different skills. Another possibility is that you are not just a user of the software installed on our clusters but a developer of your own software -- or maybe you are making some modifications to an application program so you need to be able to build the modified version and run it. In this scenario you need many of the same skills plus some others. This tutorial shows you the basics of working with the Unix command line. Other tutorials go into more depth to help you learn more advanced skills.

The Kernel and the Shell

You can think of Unix as consisting of two parts -- the kernel and the shell. The kernel is the guts of the Unix operating system -- the core software running on a machine that performs the infrastructure tasks like making sure multiple users can work at the same time. You don't need to know anything about the kernel for the purposes of this tutorial. The shell is the program that interprets the commands you enter at the command prompt. There are several different flavors of Unix shells -- Bourne, Korn, Cshell, TCshell and Bash. There are some differences in how you do things in the different shells, but they are not major and they shouldn't show up in this tutorial. However, in the interest of simplicity, this tutorial will assume you are using the Bash shell. This is the default shell for OSC users. Unless you do something to change that, you will be running the Bash shell when you log onto Owens or Pitzer.

The Command Prompt

The first thing you need to do is log onto one of the OSC clusters, Owens or Pitzer. If you do not know how to do this, you can find help at the OSC home page. If you are connecting from a Windows system, you need to download and set up the OSC Starter Kit, which you can find here. If you are connecting from a Mac or Linux system, you will use ssh. To get more information about using ssh, go to the OSC home page, hold your cursor over the "Supercomputing" menu in the main blue menu bar and select "FAQ." This should help you get started. Once you are logged in, look for the last thing displayed in the terminal window. It should be something like

-bash-3.2$

with a block cursor after it. This is the command prompt -- it's where you will see the commands you type in echoed to the screen. In this tutorial, we will abbreviate the command prompt with just the dollar sign - $. The first thing you will want to know is how to log off. You can log off of the cluster by typing "exit" then typing the <Enter> key at the command prompt:

$ exit <Enter>

For the rest of this tutorial, when commands are shown, the <Enter> will be omitted, but you must always enter <Enter> to tell the shell to execute the command you just typed.

First Simple Commands

So let's try typing a few commands at the prompt (remember to type the <Enter> key after the command):

$ date

$ cal

$ finger

$ who

$ whoami

$ finger -l

That last command is finger followed by a space then a minus sign then the lower case L. Is it obvious what these commands do? Shortly you will learn how to get information about what each command does and how you can make it behave in different ways. You should notice the difference between "finger" and "finger -l" -- these two commands seem to do similar things (they give information about the users who are logged in to the system) but they print the information in different formats. Try the two commands again and examine the output. Note that you can use the scroll bar on your terminal window to look at text that has scrolled off the screen.

man

The "man" command is how you find out information about what a command does. Type the following command:

$ man

It's kind of a smart-alecky answer you get back, but at least you learn that "man" is short for "manual" and that the purpose is to print the manual page for a command. Before we start looking at manual pages, you need to know something about the way Unix displays them. It does not just print the manual page and return you to the command prompt -- it puts you into a mode where you are interactively viewing the manual page. At the bottom of the page you should see a colon (:) instead of the usual command prompt (-bash-3.2$). You can move around in the man page by typing things at the colon. To exit the man page, you need to type a "q". So try that first. Type

$ man finger

then at the colon of the man page type

: q

You do not have to type <Enter> after the "q" (this is different from the shell prompt.) You should be back at the shell prompt now. Now let's go through the man page a bit. Once again, type

$ man finger

Now instead of just quitting, let's look at the contents of the man page. The entire man page is probably not displayed in your terminal. To scroll up or down, use the arrow keys or the <Page Up> and <Page Down> keys of the keyboard. The <Enter> and <Space> keys also scroll. Remember that "q" will quit out of the man page and get you back to the shell prompt.

The first thing you see is a section with the heading "NAME" which displays the name of the command and a short summary of what it does. Then there is a section called "SYNOPSIS" which shows the syntax of the command. In this case you should see

SYNOPSIS

finger [-lmsp] [user ...] [user@host ...]

Remember how "finger" and "finger -l" gave different output? The [-lmsp] tells you that you can use one or more of those four letters as command options -- i.e., a way of modifying the way the command works. In the "DESCRIPTION" section of the man page you will see a longer description of the command and an explanation of the options. Anything shown in the command synopsis which is contained within square brackets ([ ]) is optional. That's why it is ok to type "finger" with no options and no user. What about "user" -- what is that? To see what that means, quit out of the man page and type the following at the command prompt:

$ whoami

Let's say your username is osu0000. Then the result of the "whoami" command is osu0000. Now enter the following command (but replace osu0000 with your username):

$ finger osu0000

You should get information about yourself and no other users. You can also enter any of the usernames that are output when you enter the "finger" command by itself. The user names are in the leftmost column of output. Now try

$ finger -l osu0000

$ finger -lp osu0000

$ finger -s osu0000 osu0001

For the last command, use your username and the username of some other user that shows up in the output of the "finger" command with no arguments.

Note that a unix command consists of three parts:

- command

- option(s)

- argument(s)

You don't necessarily have to enter an argument (as you saw with the "finger" command) but sometimes a command makes no sense without an argument so you must enter one -- you saw this with the "man" command. Try typing

$ man man

and looking briefly at the output. One thing to notice is the synopsis -- there are a lot of possible options for the "man" command, but the last thing shown in the command synopsis is "name ..." -- notice that "name" is not contained in square brackets. This is because it is not optional -- you must enter at least one name. What happens if you enter two names?

$ man man finger

The first thing that happens is you get the man page for the "man" command. What happens when you quit out of the man page? You should now get the man page for the "finger" command. If you quit out of this one you will be back at the shell prompt.

Combining Commands

You can "pipe" the output of one command to another. First, let's learn about the "more" command:

$ man more

Read the "DESCRIPTION" section -- it says that more is used to page through text that doesn't fit on one screen. It also notes that the "less" command is more powerful. Ok, so let's learn about the "less" command:

$ man less

You see from the description that "less" also allows you to examine text one screenful at a time. Does this sound familiar? The "man" command actually uses the "less" command to display its output. But you can use the "less" command yourself. If you have a long text file named "foo.txt" you could type

$ less foo.txt

and you would be able to examine the contents of the file one screen at a time. But you can also use "less" to help you look at the output of a command that prints more than one screenful of output. Try this:

$ finger | less

That's "finger" followed by a space followed by the vertical bar (shifted backslash on most keyboards) followed by a space followed by "less" followed by <Enter>. You should now be looking at the output of the "finger" command in an interactive fashion, just as you were looking at man pages. Remember, to scroll use the arrow keys, the <Page Up> and <Page Down> keys, the <Enter> key or the space bar; and to quit, type "q".

Now try the following (but remember to replace "osu0000" with your actual username):

$ finger | grep osu0000

The "grep" command is Unix's command for searching. Here you are telling Unix to search the output of the "finger" command for the text "osu0000" (or whatever your username is.)
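To see what "grep" does with piped input without depending on who happens to be logged in, here is a small sketch. The three lines of sample text are made up for this example and only stand in for the output of "finger"; the usernames and names are hypothetical.

```shell
# Made-up stand-in for the output of "finger"; these users are hypothetical.
sample_output="osu0000 Pat Example pts/1
osu0001 Sam Sample pts/2
osu0002 Lee Learner pts/3"

# Pipe the text into grep, which prints only the lines that contain "osu0000".
matches=$(echo "$sample_output" | grep osu0000)
echo "$matches"
```

Only the first line survives the search, because it is the only one containing the text you asked for.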

If you try to pipe the output of one command to a second command and the second is a command which works with no arguments, you won't get what you expect. Try

$ whoami | finger

You see that it does not give the same output as

$ finger osu0000

(assuming "whoami" returns osu0000.)

In this case what you can do is the following:

$ finger `whoami`

That's "finger" space backquote "whoami" backquote. The backquote key is to the left of the number 1 key on a standard keyboard.
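The difference between piping and backquotes can be sketched with "echo", which (like "finger") takes its input from the command line and ignores anything piped to it. The sketch also shows the $( ) form, which Bash treats the same way as backquotes; it is not used elsewhere in this tutorial, but you will see it in many scripts.

```shell
# echo does not read piped input, so the text "hello" is simply dropped.
piped=$(echo hello | echo)

# Backquotes run the inner command and place its output on the command line.
as_argument=$(echo `echo hello`)

# $( ) does the same job as backquotes and is easier to read and to nest.
modern_form=$(echo $(echo hello))

echo "piped:       [$piped]"
echo "as argument: [$as_argument]"
echo "modern form: [$modern_form]"
```

The first result is empty, while the other two contain the text produced by the inner command.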

apropos

Enter the following command:

$ man apropos

As you can see, the apropos command searches descriptions of commands and finds commands whose descriptions match the keyword you entered as the argument. That means it outputs a list of commands that have something to do with the keyword you entered. Try this:

$ apropos

Ok, you need to enter an argument for the "apropos" command.

So try

$ apropos calendar

Now you see that among the results are two commands -- "cal" and "difftime" that have something to do with the keyword "calendar."

Linux Tutorial

Description

This tutorial guides you through the process of creating and submitting a batch script on one of our compute clusters. This is a linux tutorial which uses batch scripting as an example, not a tutorial on writing batch scripts. The primary goal is not to teach you about batch scripting, but for you to become familiar with certain linux commands. There are other pages on the OSC web site that go into the details of submitting a job with a batch script.

Prerequisites

- Familiarity with a text editor (emacs, nano, vim)

- Basic understanding of the unix command line

- Linux Command Line Fundamentals tutorial

Goals

- Create subdirectories to organize information

- Create a batch script with a text editor

- Submit a job

- Check on the progress of the job

- Change the permissions of the output files

- Get familiar with some common unix commands

Step 1 - Organize your directories

When you first log in to our clusters, you are in your home directory. For the purposes of this illustration, we will pretend you are user osu0001 and your project code is PRJ0001, but when you try out commands you must use your own username and project code.

$ pwd

/users/PRJ0001/osu0001

$ touch foo1

$ touch foo2

$ ls

$ ls -l

$ ls -lt

$ ls -ltr

The "touch" command just creates an empty file with the name you give it.

What about the "-l", "-lt" or "-ltr"? You noticed the difference in the output between just the "ls" command and the "ls -l" command.

An option is given as a "-" (minus sign) followed by a single letter. "ls -ltr" is actually specifying three options to the ls command.

l: I want to see the output in long format -- one file per line with some interesting information about each file

t: sort the display of files by when they were last modified, most-recently modified first

r: reverse the order of display (combined with -t this displays the most-recently modified file last -- it should be BatchTutorial in this case.)

I use "ls -ltr" because I find it convenient to see the most recently modified file at the end of the list.

$ mkdir BatchTutorial

$ ls -ltr

The "mkdir" command makes a new directory with the name you give it. This is a subfolder of the current working directory. The current working directory is where your current focus is in the hierarchy of directories. The 'pwd' command shows you are in your home directory:

$ pwd

/users/PRJ0001/osu0001

$ cd BatchTutorial

$ pwd

What is the output of 'pwd' now? "cd" is short for "change directory" -- think of it as moving you into a different place in the hierarchy of directories. Now do

$ cd ..

$ pwd
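If you want to repeat Step 1 without cluttering your home directory, the following sketch does the same things inside a throwaway directory. The use of "mktemp -d" to create that directory is an addition for this example, not part of the tutorial. It also checks that giving options together ("-ltr") or separately ("-l -t -r") produces the same listing.

```shell
# Work in a throwaway directory so nothing in your home directory is touched.
sandbox=$(mktemp -d)
cd "$sandbox"

touch foo1 foo2
mkdir BatchTutorial

# Single-letter options can be combined or given separately; the result is the same.
combined=$(ls -ltr)
separate=$(ls -l -t -r)

# cd moves into the subdirectory; "cd .." moves back up to its parent.
cd BatchTutorial
inside=$(pwd)
cd ..
back=$(pwd)

echo "inside: $inside"
echo "back:   $back"
```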

Step 2 -- Get familiar with some more unix commands

Try the following:

$ echo where am I?

$ echo I am in `pwd`

$ echo my home directory is $HOME

$ echo HOME

$ echo this directory contains `ls -l`

These examples show what the echo command does and how to do some interesting things with it. The `pwd` means the result of issuing the command pwd. HOME is an example of an environment variable. These are strings that stand for other strings. HOME is defined when you log in to a unix system. $HOME means the string the variable HOME stands for. Notice that the result of "echo HOME" does not do the substitution. Also notice that the last example shows things don't always get formatted the way you would like.

Some more commands to try:

$ cal

$ cal > foo3

$ cat foo3

$ whoami

$ date

Using the ">" after a command puts the output of the command into a file with the name you specify. The "cat" command prints the contents of a file to the screen.
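One thing worth trying for yourself is what ">" does when the file already exists. The sketch below works in a throwaway directory (created with "mktemp -d", an addition for this example) and also shows ">>", which is not covered in this tutorial: it adds to the end of a file instead of replacing its contents.

```shell
sandbox=$(mktemp -d)
cd "$sandbox"

# ">" creates foo3, or replaces its contents if the file already exists.
echo first > foo3
echo second > foo3
after_overwrite=$(cat foo3)

# ">>" appends to the end of the file instead of replacing it.
echo third >> foo3

cat foo3
```

After the second command the word "first" is gone, so be careful when redirecting into a file you want to keep.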

Two very important UNIX commands are the cp and mv commands. Assume you have a file called foo3 in your current directory created by the "cal > foo3" command. Suppose you want to make a copy of foo3 called foo4. You would do this with the following command:

$ cp foo3 foo4

$ ls -ltr

Now suppose you want to rename the file 'foo4' to 'foo5'. You do this with:

$ mv foo4 foo5

$ ls -ltr

'mv' is short for 'move' and it is used for renaming files. It can also be used to move a file to a different directory.

$ mkdir CalDir

$ mv foo5 CalDir

$ ls

$ ls CalDir

Notice that if you give a directory with the "ls" command it shows you what is in that directory rather than the current working directory.

Now try the following:

$ ls CalDir

$ cd CalDir

$ ls

$ cd ..

$ cp foo3 CalDir

$ ls CalDir

Notice that you can use the "cp" command to copy a file to a different directory -- the copy will have the same name as the original file. What if you forget to do the mkdir first?

$ cp foo3 FooDir

Now what happens when you do the following:

$ ls FooDir

$ cd FooDir

$ cat CalDir

$ cat FooDir

$ ls -ltr

CalDir is a directory, but FooDir is a regular file. You can tell this by the "d" that shows up in the string of letters when you do the "ls -ltr". That's what happens when you try to cp or mv a file to a directory that doesn't exist -- a file gets created with the target name. You can imagine a scenario in which you run a program and want to copy the resulting files to a directory called Output but you forget to create the directory first -- this is a fairly common mistake.
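One way to guard against this mistake, sketched below, is to put a slash after the directory name and to create the directory with "mkdir -p" before copying. This is a suggestion added here rather than something from the tutorial; it relies on the common behavior of cp (GNU cp, for example) of refusing to copy to a name ending in "/" when no such directory exists. The directory name "Output" is taken from the scenario above.

```shell
sandbox=$(mktemp -d)
cd "$sandbox"
echo "some results" > foo3

# With a trailing slash, cp expects Output to be a directory and fails
# if it does not exist, instead of quietly creating a file called Output.
if cp foo3 Output/ 2>/dev/null; then
    first_try="copied"
else
    first_try="refused"
fi
echo "first try: $first_try"

# mkdir -p creates the directory if needed and is harmless if it already exists.
mkdir -p Output
cp foo3 Output/
ls -l Output
```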

Step 3 -- Environment Variables

Before we move on to creating a batch script, you need to know more about environment variables. An environment variable is a word that stands for some other text. We have already seen an example of this with the variable HOME. Try this:

$ MY_ENV_VAR="something I would rather not type over and over"

$ echo MY_ENV_VAR

$ echo $MY_ENV_VAR

$ echo "MY_ENV_VAR stands for $MY_ENV_VAR"

You define an environment variable by assigning some text to it with the equals sign. That's what the first line above does. When you use '$' followed by the name of your environment variable in a command line, UNIX makes the substitution. If you forget the '$' the substitution will not be made.

There are some environment variables that come pre-defined when you log in. Try using 'echo' to see the values of the following variables: HOME, HOSTNAME, SHELL, TERM, PATH.
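One detail that goes slightly beyond this tutorial: a variable you define with the equals sign is known only to the shell you typed it in. Programs and scripts started from that shell see it only after you "export" it. The sketch below shows the difference by asking a child shell ("sh -c") to print the variable; the name TUTORIAL_DEMO_VAR is made up for the example.

```shell
# A plain assignment defines the variable for the current shell only.
TUTORIAL_DEMO_VAR="something I would rather not type over and over"
before_export=$(sh -c 'echo "$TUTORIAL_DEMO_VAR"')

# export marks the variable so that programs started from this shell inherit it.
export TUTORIAL_DEMO_VAR
after_export=$(sh -c 'echo "$TUTORIAL_DEMO_VAR"')

echo "child before export: [$before_export]"
echo "child after export:  [$after_export]"
```

Variables such as HOME and PATH are already exported when you log in, which is why every program can see them.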

Now you are ready to use some of this unix knowledge to create and run a script.

Step 4 -- Create and run a script

Before we create a batch script and submit it to a compute node, we will do something a bit simpler. We will create a regular script file that will be run on the login node. A script is just a file that consists of unix commands that will run when you execute the script file. It is a way of gathering together a bunch of commands that you want to execute all at once. You can do some very powerful things with scripting to automate tasks that are tedious to do by hand, but we are just going to create a script that contains a few commands we could easily type in. This is to help you understand what is happening when you submit a batch script to run on a compute node.

Use a text editor to create a file named "tutorial.sh" which contains the following text (note that with emacs or nano you can use the mouse to select text and then paste it into the editor with the middle mouse button):

$ nano tutorial.sh

echo ----

echo Job started at `date`

echo ----

echo This job is working on node `hostname`

SH_WORKDIR=`pwd`

echo working directory is $SH_WORKDIR

echo ----

echo The contents of $SH_WORKDIR

ls -ltr

echo

echo ----

echo

echo creating a file in SH_WORKDIR

whoami > whoami-sh-workdir

SH_TMPDIR=${SH_WORKDIR}/sh-temp

mkdir $SH_TMPDIR

cd $SH_TMPDIR

echo ----

echo TMPDIR IS `pwd`

echo ----

echo wait for 12 seconds

sleep 12

echo ----

echo creating a file in SH_TMPDIR

whoami > whoami-sh-tmpdir

# copy the file back to the output subdirectory

cp ${SH_TMPDIR}/whoami-sh-tmpdir ${SH_WORKDIR}/output

cd $SH_WORKDIR

echo ----

echo Job ended at `date`

To run it:

$ chmod u+x tutorial.sh

$ ./tutorial.sh

Look at the output created on the screen and the changes in your directory to see what the script did.
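The "chmod u+x" step is what allows you to run the file by name. As a rough sketch of what it changes, the following creates a tiny script in a throwaway directory and looks at the permission letters printed by "ls -l" before and after. The file name hello.sh and the use of "cut" to pick out the fourth character of the listing are choices made for this example only.

```shell
sandbox=$(mktemp -d)
cd "$sandbox"

# Write a two-line script; "hello.sh" is a hypothetical name for this example.
printf '%s\n' 'echo Job started' 'echo working directory is `pwd`' > hello.sh

# The fourth character of the long listing is the owner's execute permission.
ls -l hello.sh
owner_x_before=$(ls -l hello.sh | cut -c4)

# u+x adds execute (x) permission for the file's owner (u).
chmod u+x hello.sh
ls -l hello.sh
owner_x_after=$(ls -l hello.sh | cut -c4)

# Now the script can be run by giving its path.
./hello.sh
```

Before the chmod the fourth character is "-"; afterwards it is "x".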

Step 5 -- Create and run a batch job

Use your favorite text editor to create a file called tutorial.pbs in the BatchTutorial directory which has the following contents (remember, you can use the mouse to cut and paste text):

#PBS -l walltime=00:02:00

#PBS -l nodes=1:ppn=1

#PBS -N foobar

#PBS -j oe

#PBS -r n

echo ----

echo Job started at `date`

echo ----

echo This job is working on compute node `cat $PBS_NODEFILE`

cd $PBS_O_WORKDIR

echo show what PBS_O_WORKDIR is

echo PBS_O_WORKDIR IS `pwd`

echo ----

echo The contents of PBS_O_WORKDIR:

ls -ltr

echo

echo ----

echo

echo creating a file in PBS_O_WORKDIR

whoami > whoami-pbs-o-workdir

cd $TMPDIR

echo ----

echo TMPDIR IS `pwd`

echo ----

echo wait for 42 seconds

sleep 42

echo ----

echo creating a file in TMPDIR

whoami > whoami-tmpdir

# copy the file back to the output subdirectory

pbsdcp -g $TMPDIR/whoami-tmpdir $PBS_O_WORKDIR/output

echo ----

echo Job ended at `date`

$ qsub tutorial.pbs

Use "qstat -u [username]" to check on the progress of your job. If you see something like this, with a Q in the S (state) column, the job is waiting in the queue:

$ qstat -u osu0001

Req'd Req'd Elap

Job ID Username Queue Jobname SessID NDS TSK Memory Time S Time

------------------ ----------- -------- ---------------- ------ ----- ------ ------ ----- - -----

458842.oak-batch osu0001 serial foobar -- 1 1 -- 00:02 Q --

If you see something like this, with an R in the S column, the job is running:

Req'd Req'd Elap

Job ID Username Queue Jobname SessID NDS TSK Memory Time S Time

------------------ ----------- -------- ---------------- ------ ----- ------ ------ ----- - -----

458842.oak-batch osu0001 serial foobar 26276 1 1 -- 00:02 R --

When the output of the qstat command is empty, the job is done.

$ ls -ltr

$ cat foobar.oNNNNNN

(NNNNNN is your job id.) The name of this output file has two parts:

- The name you give the job in the script file with the header line #PBS -N foobar

- The job id number assigned to the job.

The name of the script file (tutorial.pbs) has nothing to do with the name of the output file.

Examine the contents of the output file foobar.oNNNNNN carefully. You should be able to see the results of some of the commands you put in tutorial.pbs. It also shows you the values of the variables PBS_NODEFILE, PBS_O_WORKDIR and TMPDIR. These variables exist only while your job is running. Try

$ echo $PBS_O_WORKDIR

and you will see it is no longer defined. $PBS_NODEFILE is a file which contains a list of all the nodes your job is running on. Because this script has the line

#PBS -l nodes=1:ppn=1

the contents of $PBS_NODEFILE is the name of a single compute node.

Notice that $TMPDIR is /tmp/pbstmp.NNNNNN (again, NNNNNN is the id number for this job.) Try

$ ls /tmp/pbstmp.NNNNNN

Why doesn't this directory exist? Because it is a directory on the compute node, not on the login node. Each machine in the cluster has its own /tmp directory and they do not contain the same files and subdirectories. The /users directories are shared by all the nodes (login or compute) but each node has its own /tmp directory (as well as other unshared directories.)
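Since variables such as PBS_O_WORKDIR exist only inside a running job, it can be handy to print a placeholder when one is not defined. The sketch below uses the shell's ${NAME:-text} form for that; this form is not part of the tutorial, and the "unset" line is there only to mimic being on a login node.

```shell
# Outside a batch job PBS_O_WORKDIR is not set (unset here to mimic a login node).
unset PBS_O_WORKDIR

# Plain substitution of an undefined variable gives an empty string.
plain="[$PBS_O_WORKDIR]"

# ${NAME:-text} substitutes "text" when NAME is unset or empty.
with_default="${PBS_O_WORKDIR:-not defined}"

echo "plain:        $plain"
echo "with default: $with_default"
```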

Tar Tutorial

Prerequisites

Step 1 -- Create a directory to work with and download a "tarball"

Start off with the following:

$ mkdir TarTutorial

$ cd TarTutorial

$ wget http://www.mmm.ucar.edu/wrf/src/WRFDAV3.1.tar.gz

$ ls -ltr

The third command will take a while because it is downloading a file from the internet. The file is called a "tarball" or a "gzipped tarball". TAR is an old unix short name for "tape archive" but a tar file is a file that contains a bunch of other files. If you have to move a bunch of files from one place to another, a good way to do it is to pack them into a tar file, move the tar file where you want it then unpack the files at the destination. A tar file usually has the extension ".tar". What about the ".gz"? This means the tar file has been further compressed with the program gzip -- this makes it a lot smaller.

Step 2 -- Unpack the "tarball" and check out the contents

After step 1 your working directory should be ~/TarTutorial and there should be a file called WRFDAV3.1.tar.gz in it.

Now do this:

$ gunzip WRFDAV3.1.tar.gz

$ ls -ltr

You should now have a file called WRFDAV3.1.tar which should be quite a bit larger in size than WRFDAV3.1.tar.gz -- this is because it has been uncompressed by the "gunzip" command which is the opposite of the "gzip" command.

Now do this:

$ tar -xvf WRFDAV3.1.tar

$ ls -ltr

You should see a lot of filenames go by on the screen and when the first command is done and you issue the ls command you should see two things -- WRFDAV3.1.tar is still there but there is also a directory called WRFDA. You can look at the contents of this directory and navigate around in the directory tree to see what is in there. The options on the "tar" command have the following meanings (you can do a "man tar" to get all the options):

x: extract the contents of the tar file

v: be verbose, i.e. show what is happening on the screen

f: the name of the file which follows the "f" option is the tar file to expand.
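Two more options are worth knowing about, although they are not used in this tutorial: "t" lists the contents of a tar file without unpacking it, and "z" lets tar read a gzipped tarball directly so you do not need a separate gunzip step. The sketch below tries both on a tiny tarball built on the spot; the directory "demo" and its files are made up for the example, and you should check "man tar" on your system for the exact options it supports.

```shell
sandbox=$(mktemp -d)
cd "$sandbox"

# Build a tiny stand-in tarball; "demo" and its files are hypothetical names.
mkdir -p demo/sub
echo one > demo/file1
echo two > demo/sub/file2
tar -cf demo.tar demo
gzip demo.tar
rm -R demo

# t: list the contents without extracting; z: read through gzip.
listing=$(tar -tzf demo.tar.gz)
echo "$listing"

# x with z: uncompress and extract in one step, leaving demo.tar.gz in place.
tar -xzf demo.tar.gz
cat demo/sub/file2
```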

Another thing you can do is see how much space is being taken up by the files. Make sure TarTutorial is your working directory then issue the following command:

$ du .

Remember that "." (dot) means the current working directory. The "du" command means "disk usage" -- it shows you how much space is being used by every file and directory in the directory tree. It ends up with the highest level files and directories. You might prefer to do

$ du -h .

$ ls -ltrh

Adding the "-h" option to these commands puts the file sizes in human-readable format -- you should get a size of 66M for the tar file -- that's 66 megabytes -- and "du" should print a size of 77M next to ./WRFDA.

Step 3 -- create your own "tarball"

Now, make your own tar file from the WRFDA directory tree:

$ tar -cf mywrf.tar WRFDA

$ ls -ltrh

You have created a tar file from all the files in the WRFDA directory. The options given to the "tar" command have the following meanings:

c: create a tar file

f: give it the name which follows the "f" option

The files WRFDAV3.1.tar and mywrf.tar contain the same directory tree. Now compress the tar file you made:

$ gzip mywrf.tar

$ ls -ltrh

You should see a file called mywrf.tar.gz which is smaller than WRFDAV3.1.tar.
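The create and compress steps can also be combined by adding the "z" option, which is not used in this tutorial. The sketch below does that on a small made-up directory tree (WRFDA_demo and its files are hypothetical), then unpacks the result into a separate directory with "-C" and compares it with the original to confirm that nothing was lost.

```shell
sandbox=$(mktemp -d)
cd "$sandbox"
mkdir -p WRFDA_demo/src
echo "some text" > WRFDA_demo/README
echo "more text" > WRFDA_demo/src/main.f90

# c: create, z: compress with gzip, f: name of the archive to write.
tar -czf mywrf_demo.tar.gz WRFDA_demo

# Unpack into a separate directory (-C) and compare with the original tree.
mkdir unpacked
tar -xzf mywrf_demo.tar.gz -C unpacked
if diff -r WRFDA_demo unpacked/WRFDA_demo; then
    roundtrip="identical"
else
    roundtrip="different"
fi
echo "round trip: $roundtrip"
```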

Step 4 -- Clean up!

You don't want to leave all these files lying around. So delete them:

$ rm WRFDAV3.1.tar

$ rm mywrf.tar

$ rm WRFDA

Oops! You can't remove the directory. You need to use the "rmdir" command:

$ rmdir WRFDA

Oh no! That doesn't work on a directory that's not empty. So are you stuck with all those files? Maybe you can do this:

$ cd WRFDA

$ rm *

$ cd ..

$ rmdir WRFDA

That won't work either because there are some subdirectories in WRFDA and "rm *" won't remove them. Do you have to work your way to all the leaves at the bottom of the directory tree and remove files then come back up and remove directories? No, there is a simpler way:

$ rm -Rf WRFDA

This will get rid of the entire directory tree. The options have the following meanings:

R: recursively remove all files and directories

f: force; i.e., just remove everything without asking for confirmation

I encourage you to do

$ man rm

and check out all the options. Or some of them -- there are quite a few.
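Because a recursive remove cannot be undone, it is worth looking at what you are about to delete first. The following is one cautious routine, sketched on a made-up directory tree (WRFDA_demo is hypothetical); the use of "find" to list the tree is an addition for this example. Note that "-R" on its own is enough here; "-f" only suppresses questions and error messages.

```shell
sandbox=$(mktemp -d)
cd "$sandbox"
mkdir -p WRFDA_demo/var/build
touch WRFDA_demo/README WRFDA_demo/var/build/notes.txt

# rmdir refuses to remove a directory that still has something in it.
if rmdir WRFDA_demo 2>/dev/null; then
    rmdir_result="removed"
else
    rmdir_result="refused"
fi
echo "rmdir: $rmdir_result"

# Look before deleting: list everything the recursive remove would take.
find WRFDA_demo

# -R alone is enough here; add -f only when you are sure about the target.
rm -R WRFDA_demo
```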

Unix Shortcuts

Description

This tutorial shows you some handy time-saving shortcuts in linux. Once you have a good understanding of how the command line works, you will want to learn how to work more efficiently.

Prerequisites

Linux command line fundamentals.

Goals

- Save you time when working on a linux system

- Increase your appreciation of the power of linux

Step 1 -- The Arrow Keys

Note: even if you know how to use the up arrow in linux, you need to enter the commands in this section because they are used in the following sections. So to begin this tutorial, go to your home directory and create a new directory called Shortcuts:

$ cd

$ mkdir Shortcuts

$ cd Shortcuts

(If a directory or file named "Shortcuts" already exists, name it something else.)

Imagine typing in a long linux command and making a typo. This is one of the frustrating things about a command line interface -- you have to retype the command, correcting the typo this time. Or what if you have to type several similar commands -- wouldn't it be nice to have a way to recall a previous command, make a few changes, and enter the new command? This is what the up arrow is for.

Try the following:

$ cd ..

$ cd ShortCuts (type a capital C)

Linux should tell you there is no directory with that name.

Now type the up arrow key -- the previous command you entered shows up on the command line, and you can use the left arrow to move the cursor just after the capital C, hit Backspace, and type a lower case c. Note you can also position the cursor before the capital C and hit Delete to get rid of it.

Once you have changed the capital C to a lower case c you can hit Return to enter the command -- you do not have to move the cursor to the end of the line.

Now hit the up arrow key a few times, then hit the down arrow key and notice what happens. Play around with this until you get a good feel for what is happening.

Linux maintains a history of commands you have entered. Using the up and down arrow keys, you can recall previously-entered commands to the command line, edit them and re-issue them.

Note that in addition to the left and right arrow keys you can use the Home and End keys to move to the beginning or end of the command line. Also, if you hold down the Ctrl key when you type an arrow key, the cursor will move by an entire word instead of a single character -- this is useful in many situations and works in many editors.

Let's use this to create a directory hierarchy and a few files. Start in the Shortcuts directory and enter the following commands, using the arrow keys to simplify your job:

$ mkdir directory1

$ mkdir directory1/directory2

$ mkdir directory1/directory2/directory3

$ cd directory1/directory2/directory3 (remember the Home key and the Ctrl key with left and right arrows)

$ hostname > file1

$ whoami > file2

$ mkdir directory4

$ cal > directory4/file3

Step 2 -- Using the TAB key

Linux has short, cryptic command names to save you typing -- but it is still a command line interface, and that means you interact with the operating system by typing in commands. File names can be long, directory hierarchies can be deep, and this can mean you have to type a lot to specify the file you want or change the current working directory. Not only that, but you have to remember the names of files and directories you type in. The TAB key gives you a way to enter commands with less typing and less memorization.

Go back to the Shortcuts directory:

$ cd $ cd Shortcuts

Now enter the following:

$ hostname > file1

$ cal > file2

$ whoami > different-file

$ date > other-file

$ cal > folio5

Now type the following, without hitting the Return key:

$ cat oth <Tab>

What happened? Linux completed the name "other-file" for you! The Tab key is your way of telling Linux to finish the current word you are typing, if possible. Because there is only one file in the directory whose name begins with "oth", when you hit the Tab key Linux is able to complete the name.

Hit Return (if you haven't already) to enter the cat command. Now try

$ cat d <Tab>

As you would expect, Linux completes the name "different-file"

What if you enter

$ cat fi <Tab>

Notice Linux completes as much of the name as possible. You can now enter a "1" or a "2" to finish it off.

But what if you forget what the options are? What if you can't remember if you created "file1" and "file2" or if you created "fileA" and "fileB"?

With the command line showing this:

$ cat file

hit the Tab key twice. Aha! Linux shows you the possible choices for completing the word.

Try

$ cat f <Tab>

The Tab will not add anything -- the command line will still read

$ cat f

Now type the letter o followed by a Tab -- once you add the o there is only one possible completion -- "folio5".

Now enter the following:

$ cat directory1/directory2/directory3/directory4/file3

That's kind of painful to type.

Now type the following without entering Return:

$ ls dir <Tab>

Nice! As you would expect, Linux completes the name of the directory for you. This is because there is only one file in the Shortcuts directory whose name begins with "dir"

Hit Return and Linux will tell you that directory1 contains directory2.

Now type this:

$ ls dir <Tab>

and before you hit return type another d followed by another Tab. Your command line should now look like this:

$ ls directory1/directory2/

If you hit Return, Linux will tell you that directory2 contains directory3.

Now try this:

$ ls dir <Tab>

then type another d followed by <Tab> then another d followed by <Tab>. Don't hit Return yet. Your command line should look like this:

$ ls directory1/directory2/directory3/

Don't hit Return yet. Now type the letter f followed by a Tab. What do you think should happen?

Step 3 -- The Exclamation Point

Hitting the up arrow key is a nice way to recall previously-used commands, but it can get tedious if you are trying to recall a command you entered a while ago -- hitting the same key 30 times is a good way to make yourself feel like an automaton. Fortunately, linux offers a couple of other ways to recall previous commands that can be useful.

Go back to the Shortcuts directory

$ cd ~/Shortcuts

and enter the following:

$ hostname

$ cal

$ date

$ whoami

Now enter this:

$ !c

and hit return.

What happened? Now try

$ !h

and hit return.

The exclamation point ("bang" to Americans, "shriek" to some Englishmen I've worked with) is a way of telling linux you want to recall the last command which matches the text you type after it. So "!c" means recall the last command that starts with the letter c, the "cal" command in this case. You can enter more than one character after the exclamation point in order to distinguish between commands. For example if you enter

$ cd ~/Shortcuts

$ cat file1

$ cal

$ !c

the last command will redo the "cal" command. But if you enter

$ cat file1

$ cal

$ !cat

the last command re-executes the "cat" command.

Step 4 -- Ctrl-r

One problem with using the exclamation point to recall a previous command is that you can feel blind -- you don't get any confirmation about exactly which command you are recalling until it has executed. Sometimes you just aren't sure what you need to type after the exclamation point to get the command you want.

Typing Ctrl-r (that's holding down the Ctrl key and typing a lower case r) is another way to repeat previous commands without having to type the whole command, and it's much more flexible than the bang. The "r" is for "reverse search" and what happens is this. After you type Ctrl-r, start typing the beginning of a previously entered command -- linux will search, in reverse order, for commands that match what you type. To see it in action, type in the following commands (but don't hit <Enter> after the last one):

$ cd ~/Shortcuts
$ cat file1
$ cat folio5
$ cal
$ Ctrl-r cat

You should see the following on your command line:

(reverse-i-search)`cat': cat folio5

Try playing with this now. Type in " fi" (that's a space, an "f" and an "i") -- did the command shown at the prompt change? Now hit backspace four times.

Now enter a right or left arrow key and you will find yourself editing the matching command. This is one you have to play around with a bit before you understand exactly what it is doing. So go ahead and play with it.

Step 5 -- history

Now type

$ history

and hit return.

Cool, huh? You get to see all the commands you have entered (probably a maximum of 1000.) You can also do something like

$ history | grep cal

to get all the commands with the word "cal" in them. You can use the mouse to cut and paste a previous command, or you can recall it by number with the exclamation point:

$ !874

re-executes the command number 874 in your history.

For more information about what you can do to recall previous commands, check out http://www.thegeekstuff.com/2011/08/bash-history-expansion/

Step 6 -- Ctrl-t

I am just including this because to me it is a fun piece of linux trivia. I don't find it particularly useful. Type

$ cat file1

and hit <Return>. Now hit the up arrow key to recall this command and hit the left arrow key twice so the cursor is on the "e" of "file1". Now hit Ctrl-t (again, hold down the control key and type a lower case t.) What just happened? Try hitting Ctrl-t a couple more times. That's right -- it transposes two characters in the command line -- the one the cursor is on and the one to its left. Also, it moves the cursor to the right. Frankly, it takes me more time to think about what is going to happen if I type Ctrl-t than it takes me to delete some characters and retype them in the correct order. But somewhere out there is a linux black belt who gets extra productivity out of this shortcut.

Step 7 -- The alias command

Another nice feature of linux is the alias command. If there is a command you enter a lot you can define a short name for it. For example, we have been typing "cat folio5" a lot in this tutorial. You must be getting sick of typing "cat folio5". So enter the following:

$ alias cf5='cat folio5'

Now type

$ cf5

and hit return. Nice -- you now have a personal shortcut for "cat folio5". I use this for the ssh commands:

$ alias gogl='ssh -Y jeisenl@pitzer.osc.edu'

I put this in the .bash_aliases file on my laptop so that it is always available to me.
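Whether ~/.bash_aliases is read automatically depends on how your ~/.bashrc is written: some distributions ship a ~/.bashrc that sources it, others do not. The following is a minimal sketch of the lines that load it, assuming a Bourne-style shell. To keep the demo self-contained, a temporary file (the hypothetical variable alias_file) stands in for ~/.bash_aliases.

```shell
# Demo only: a temporary file stands in for ~/.bash_aliases.
alias_file=$(mktemp)
echo "alias cf5='cat folio5'" > "$alias_file"

# In ~/.bashrc you would write the same test with ~/.bash_aliases as the path.
if [ -f "$alias_file" ]; then
    . "$alias_file"
fi

# Confirm that the alias is now defined in this shell.
alias cf5
```

Running "alias" with no arguments lists every alias currently defined, which is a quick way to check what a new login shell picked up.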

Classroom Project Resource Guide

Request a Classroom Project

Classroom projects will not be billed under the Ohio academic fee structure; all fees will be fully discounted at the time of billing.

Please submit a new project request for a classroom project. You will request a $500 budget. If an additional budget is needed or you want to re-use your project code, you can apply through MyOSC or contact us at OSCHelp. We require a class syllabus; this will be uploaded on the last screen before you submit the request.

During setup, OSC staff test accounts may be added to the project for troubleshooting purposes.

Access

We suggest that students consider connecting to our OnDemand portal to access the HPC resources. All production supercomputing resources can be accessed via that website without having to worry about client configuration. We have a guide for new students to help them figure out the basics of using OSC.

class.osc.edu

Resources

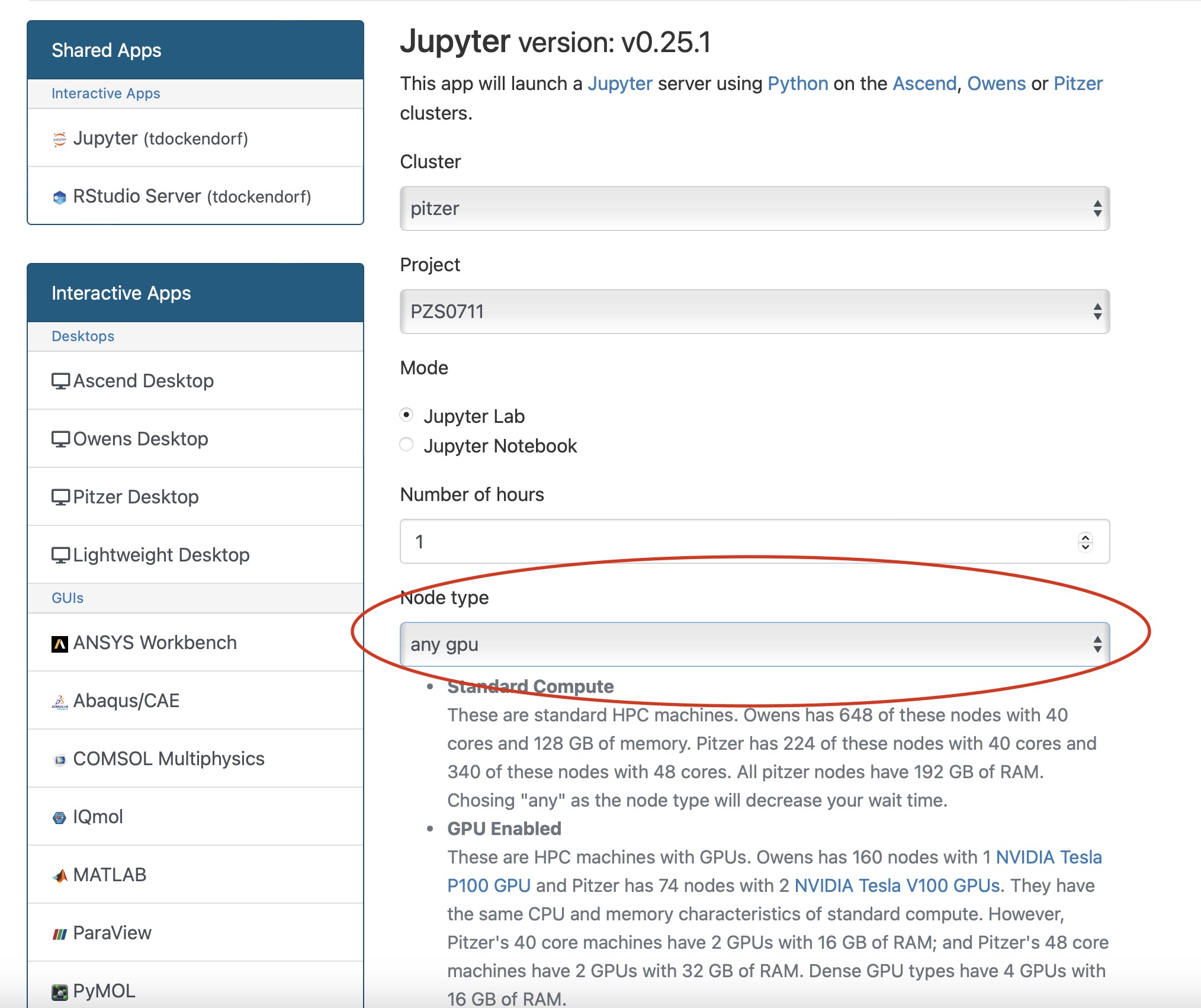

We currently have two production clusters, Pitzer and Owens, with Nvidia GPUs available that may be used for classroom purposes. All systems have "debug" queues that, during typical business hours, allow small jobs of less than one hour to start much more quickly than they otherwise might.

If you need to reserve access to particular resources, please contact OSC Help, preferably with at least two weeks lead time, so that we can put in the required reservations to ensure resources are available during lab or class times.

Software

We have a list of supported software, including sample batch scripts, in our documentation. If you have specific needs that we can help with, let OSC Help know.

If you are using Rstudio, please see this webpage.

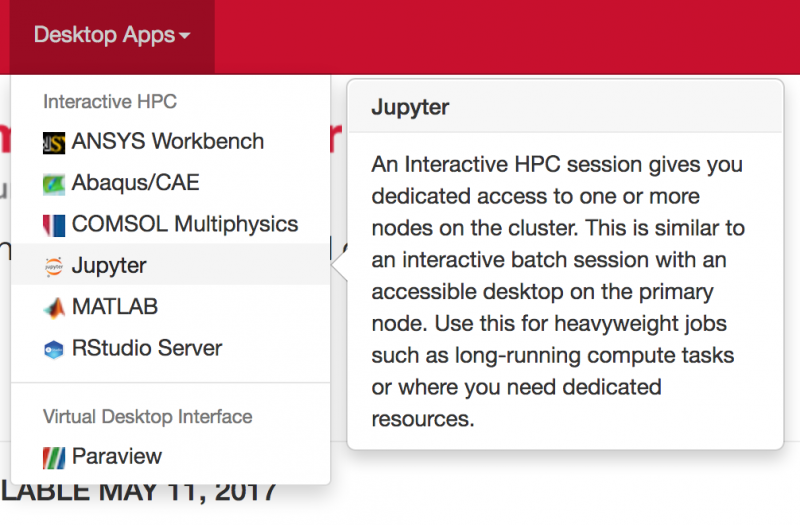

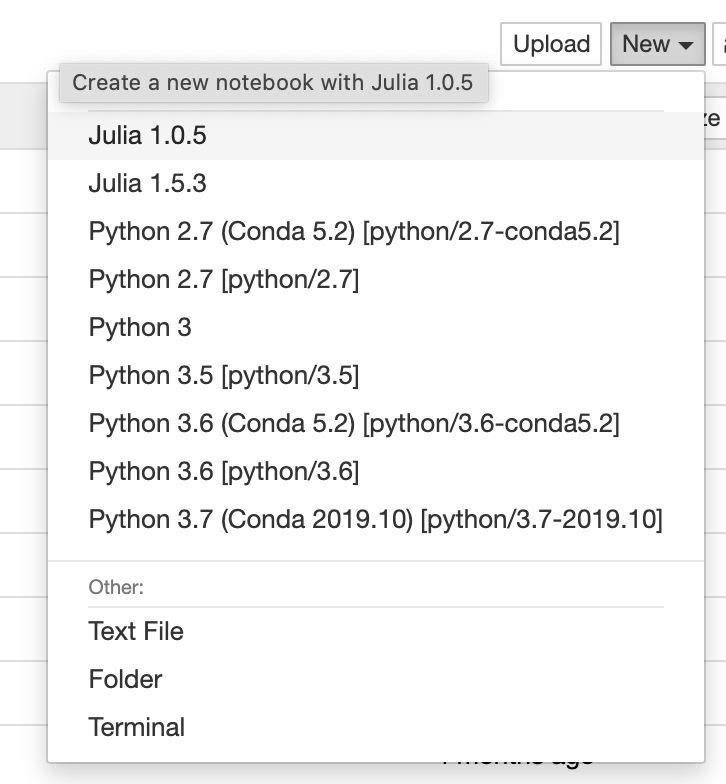

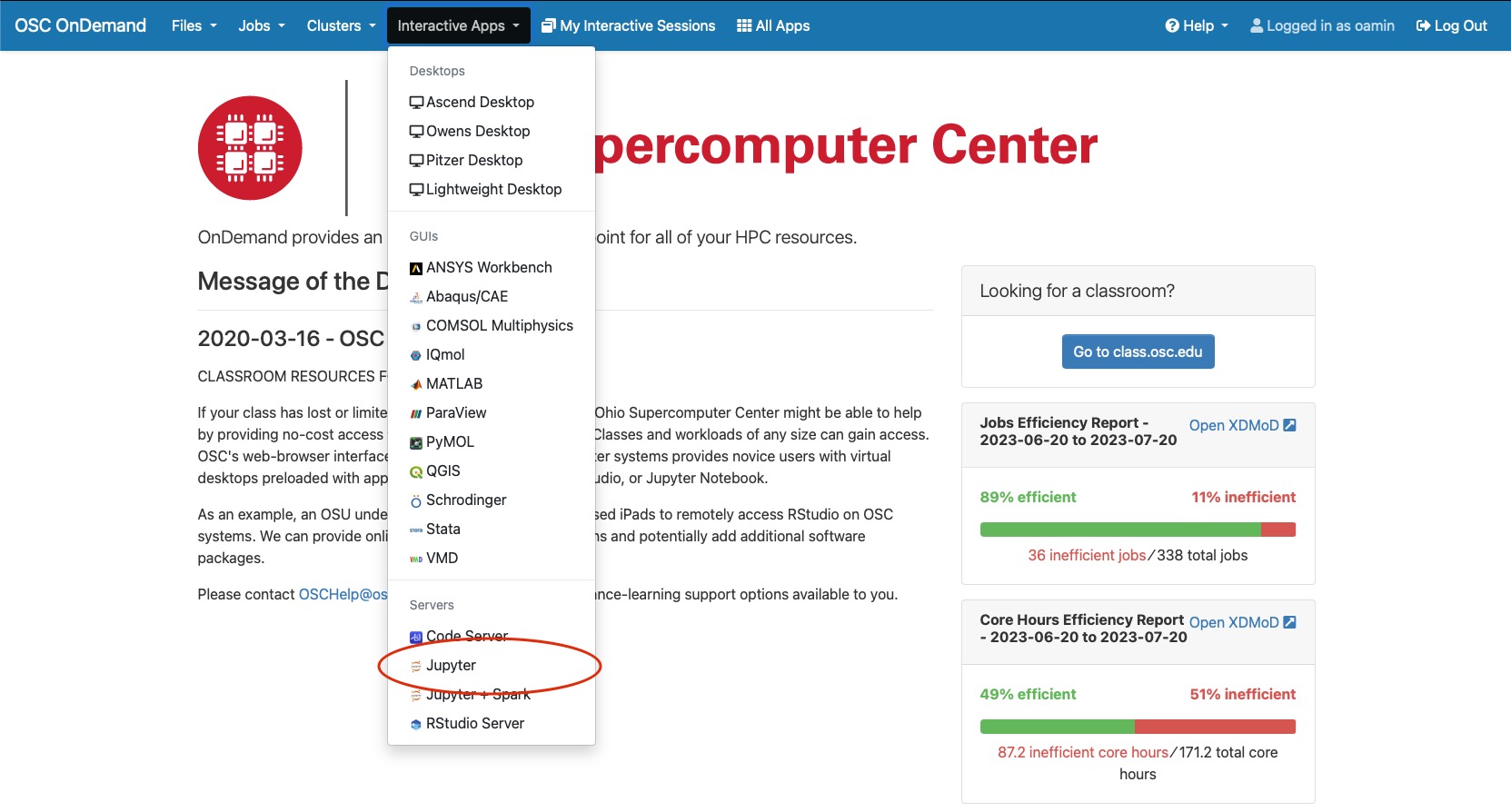

If you are using Jupyter, please see the page Using Jupyter for Classroom.

Account Maintenance

Our classroom project information guide will instruct you on how to get students added to your project using our client portal. For more information, see the documentation. You must also add your username as an authorized user.

Homework Submissions

We can provide you with project space to have students submit assignments through our systems. Please ask about this service and see our how-to. We typically grant 1-5 TB for classroom projects.

Support

Help can be found by contacting OSC Help weekdays, 9 a.m. to 5 p.m. (614-292-1800).

Fill out a request online.

We update our web pages to show relevant events at the center (including training) and system notices on our main page (osc.edu). We also provide important information in the “message of the day” (visible when you log in). You also can receive notices by following @HPCNotices on X.

Helpful Links

FAQ: http://www.osc.edu/supercomputing/faq

Main supercomputing pages: http://www.osc.edu/supercomputing/

Classroom Guide for Students

Join a Classroom Project

Your classroom instructor will provide you with a project and access code that will allow you to join the classroom project. Visit our user management page for more information.

All emails will be sent from "no-reply@osc.edu". Please check all folders, including spam/junk. If you did not receive this email, please contact OSC Help.

Review our classroom project info guide for detailed information.

Account Management

You can manage your OSC account via MyOSC, our client portal. This includes:

Access

If your class uses a custom R or Jupyter environment at OSC, please connect to class.osc.edu

If you do not see your class there, we suggest connecting to ondemand.osc.edu.

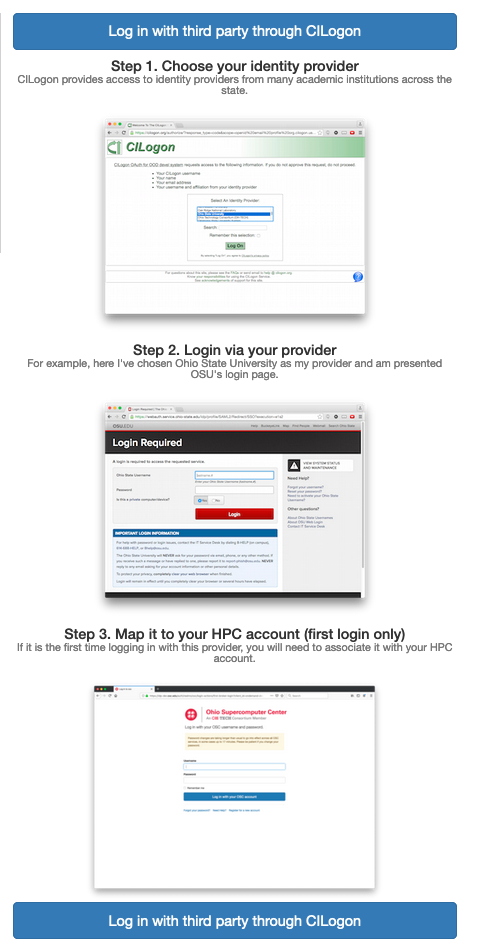

You can log into class.osc.edu or ondemand.osc.edu either using your OSC HPC Credentials or Third-Party Credentials. See this OnDemand page for more information.

File Transfer

There are a few different ways of transferring files between OSC storage and your local computer. We suggest using OnDemand File App if you are new to Linux and looking to transfer smaller-sized files - measured in MB to several hundred MB. For larger files, please use an SFTP client to connect to sftp.osc.edu or Globus.

More Information for New Users

We have a guide for new users to help them figure out the basics of using OSC; included are basics on getting connected, HPC system structure, file transfers, and batch systems.

FAQ: http://www.osc.edu/supercomputing/faq

Main Supercomputing pages: http://www.osc.edu/supercomputing/

Support

Help can be found by contacting OSC Help weekdays, 9AM to 5PM (614-292-1800).

Fill out a request online.

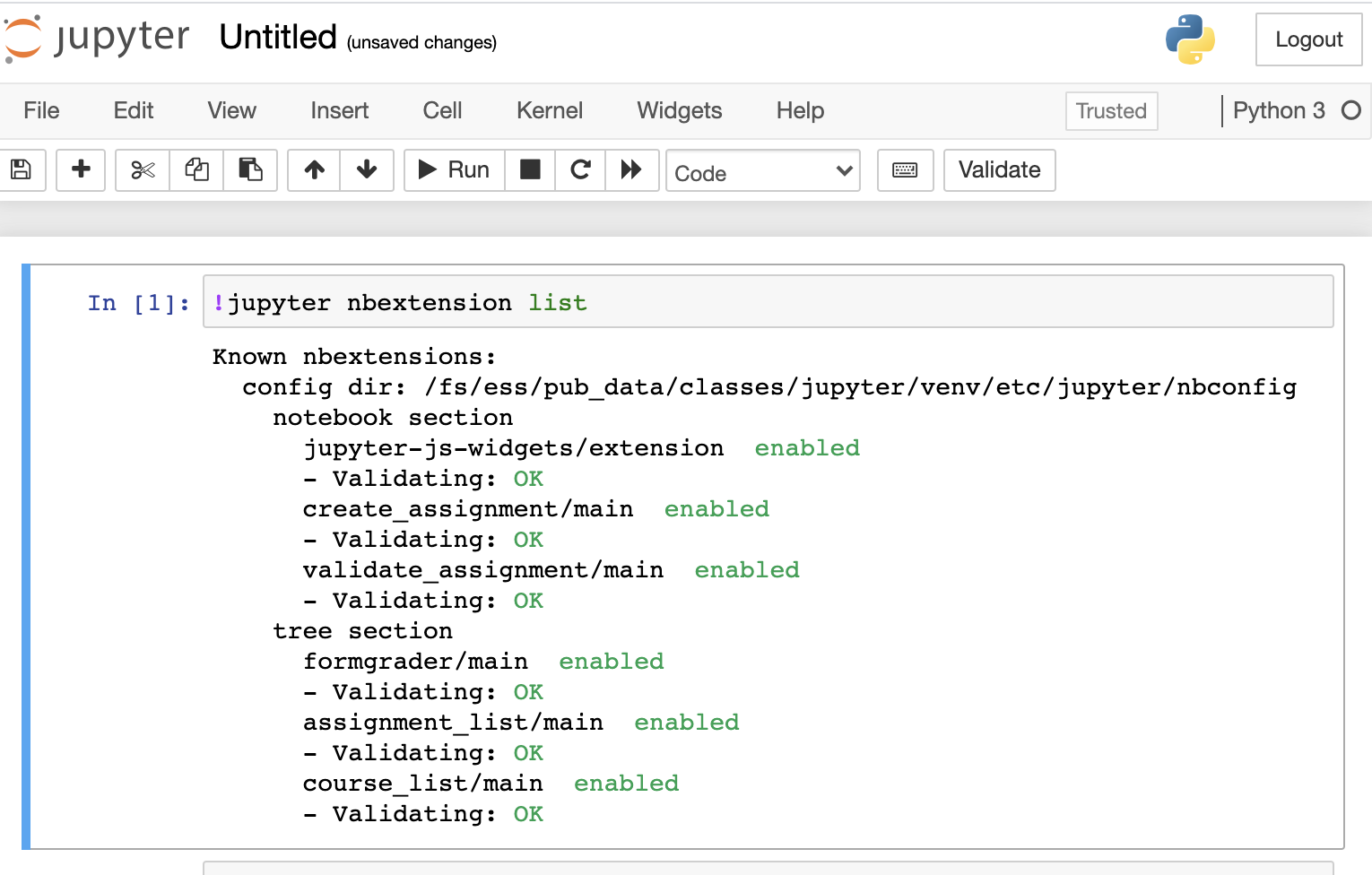

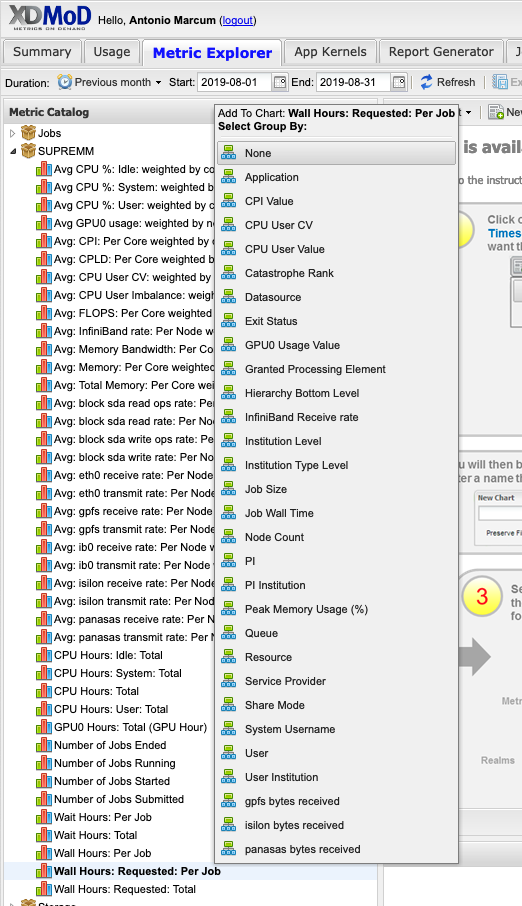

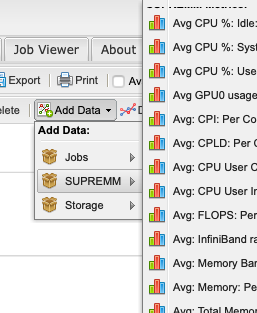

Using Jupyter for Classroom

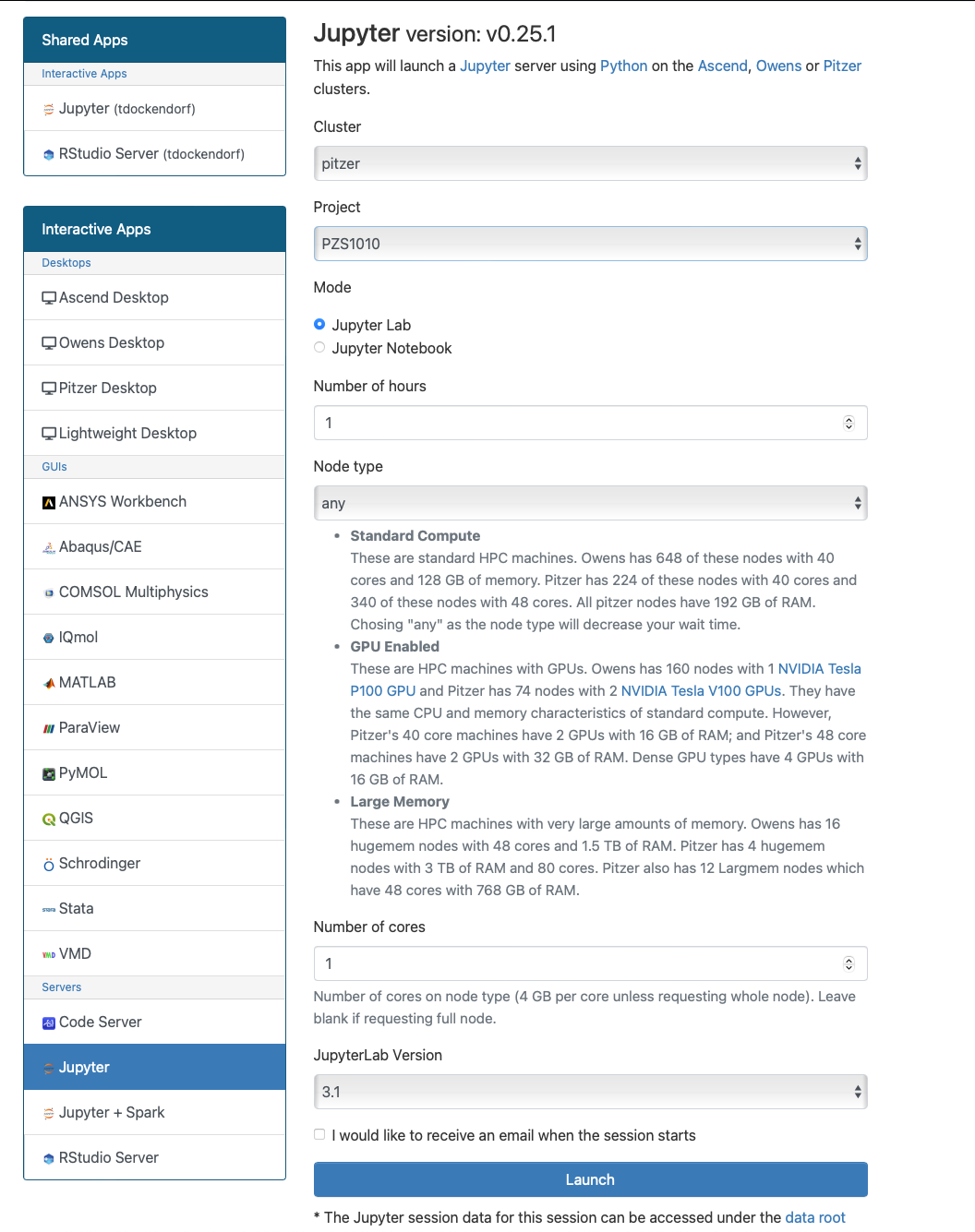

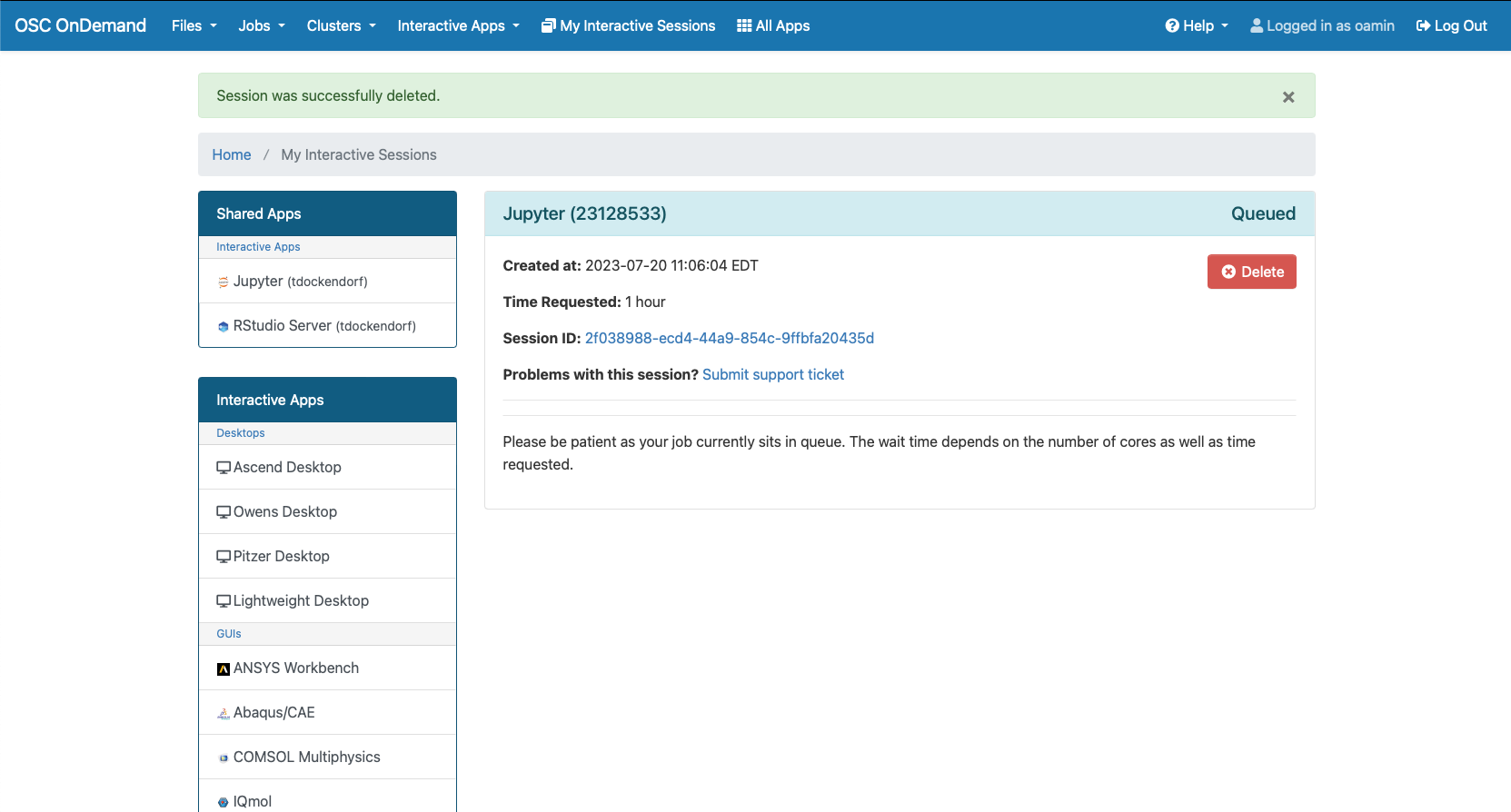

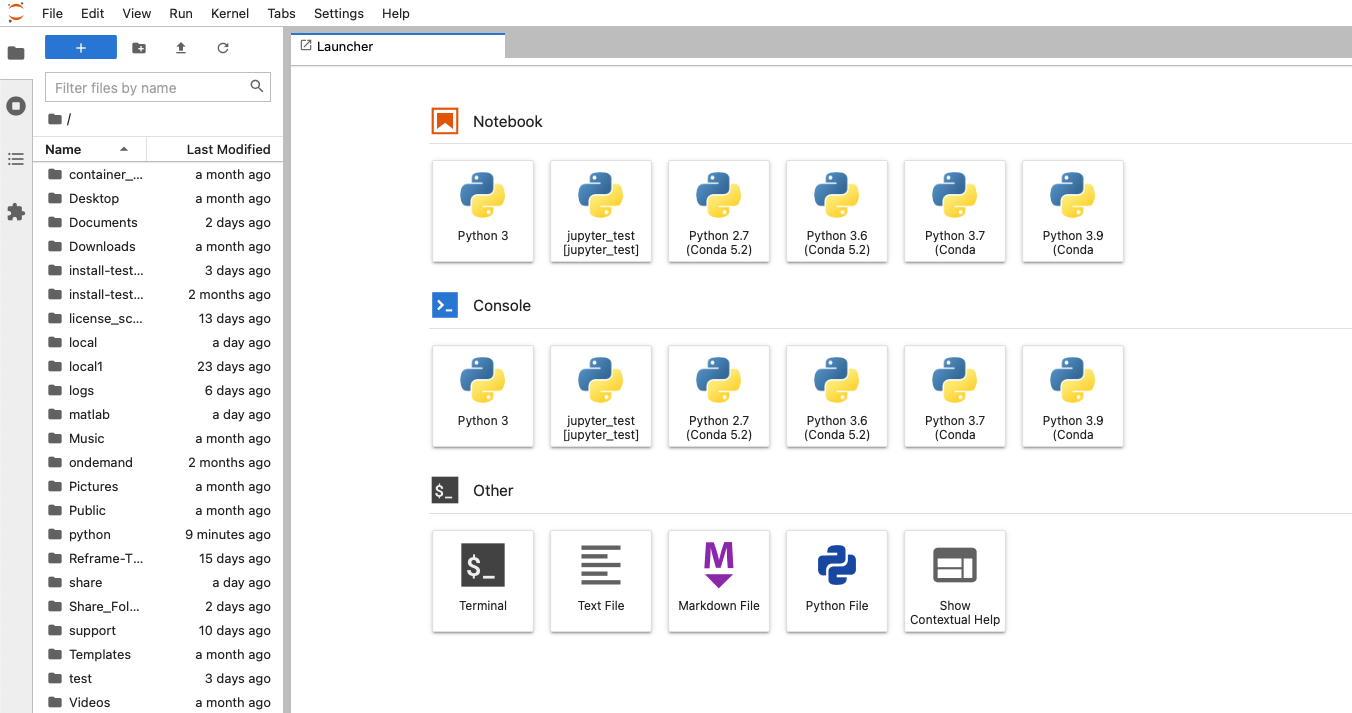

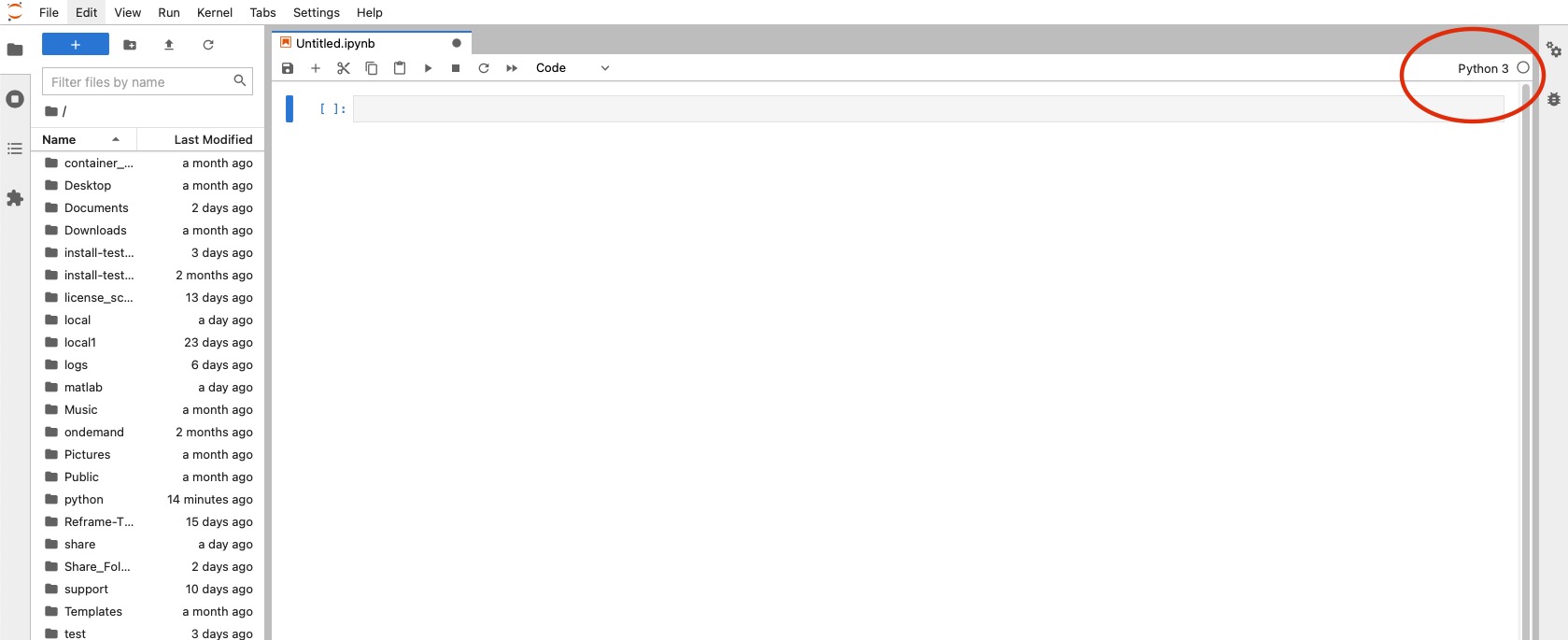

OSC provides an isolated and custom Jupyter environment for each classroom project that requires Jupyter Notebook or JupyterLab.

The instructor must apply for a classroom project that is unique for the course. More details on the classroom project can be found in our classroom project guide. Once we get the information, we will provide you a project ID and a course ID (which is commonly the course ID provided by instructor + school code, e.g. MATH_2530_OU). The instructor can set up a Jupyter environment for the course using the information (see below). The Jupyter environment will be tied to the project ID.

Set up a Jupyter environment

The instructor can set up a Jupyter environment for the course once the project space is initialized:

- Log in to Owens or Pitzer with the PI account of the classroom project.

- Run the setup script with the project ID and course ID:

~support/classroom/tools/setup_jupyter_classroom /fs/ess/project_ID course_ID

If the Jupyter environment is created successfully, please inform us so we can update you when your class is ready at class.osc.edu.
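As an illustration of how the two placeholders are filled in, here is a small sketch that only prints the setup command so you can check it before running it. The values PZS1234 and MATH_2530_OU are hypothetical examples; substitute the project ID and course ID that OSC provided for your class.

```shell
# Hypothetical IDs for illustration; use the ones OSC gave you.
project_id="PZS1234"
course_id="MATH_2530_OU"

# Build the command as text. Inside double quotes the leading ~ is not
# expanded, so the printed line matches what you would type at the prompt.
setup_cmd="~support/classroom/tools/setup_jupyter_classroom /fs/ess/${project_id} ${course_id}"

# Print it for review instead of running it.
echo "$setup_cmd"
```

The upgrade script takes the same two arguments, so the same substitution applies there.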

Upgrade a Jupyter environment

You may need to upgrade Jupyter kernels to the latest stable version to address a security vulnerability or to try out new features. Please run the upgrade script with the project ID and course ID:

~support/classroom/tools/upgrade_jupyter_classroom /fs/ess/project_ID course_ID

Manage the Jupyter environment

Install packages

When your class is ready, launch your class session at class.osc.edu. Then, open a notebook and use the following command to install packages:

pip install --no-cache-dir --ignore-installed [package-name]

Please note that the --no-cache-dir flag makes pip skip the cache in your home directory, and --ignore-installed makes it ignore packages that are already installed. Either of these can otherwise cause conflicts when installing classroom packages if you have previously used pip to install packages in multiple Python environments.

Install extensions

Jupyter Notebook

To enable or install nbextension, please use --sys-prefix to install into the classroom Jupyter environment, e.g.

!jupyter contrib nbextension install --sys-prefix

Please do not use --user, which installs into your home directory and could break the Jupyter environment.

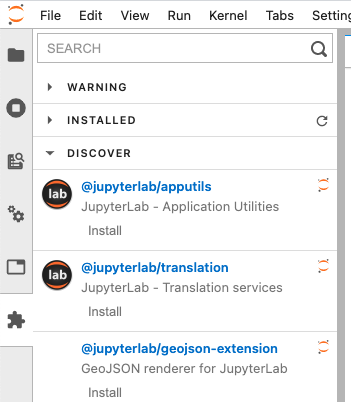

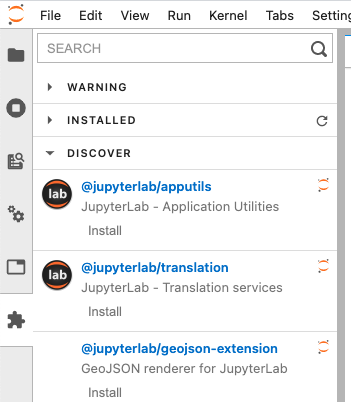

JupyterLab

To install a labextension, simply click the Extension Manager icon in the side bar.

Enable local package access (optional)

By default, this Jupyter environment is an isolated Python environment. Anyone who launches Python from this environment can only access packages installed inside it unless PYTHONPATH is used. The instructor can change this by setting include-system-site-packages = true in /fs/ess/project_ID/course_ID/jupyter/pyvenv.cfg. This will allow students to access packages in their home directory under ~/.local/lib/pythonX.X/site-packages, and to install packages via pip install --user.
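The edit to pyvenv.cfg can be made in any text editor. As a sketch of the change itself, the following flips the setting with sed on a throwaway copy. The sample file contents (the home and version lines) are made up for the demo; on the real system you would point cfg at /fs/ess/project_ID/course_ID/jupyter/pyvenv.cfg instead.

```shell
# Demo only: build a sample pyvenv.cfg in a temporary file.
cfg=$(mktemp)
printf 'home = /usr/bin\ninclude-system-site-packages = false\nversion = 3.9.0\n' > "$cfg"

# Rewrite the one setting, leaving the other lines untouched.
sed 's/^include-system-site-packages[[:space:]]*=.*/include-system-site-packages = true/' \
    "$cfg" > "$cfg.new" && mv "$cfg.new" "$cfg"

# Show the result.
grep '^include-system-site-packages' "$cfg"
```

Setting the value back to false restores the isolated behavior.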

Workspace

When a class session starts, we create a classroom workspace under the instructor's and students' home space: $HOME/osc_classes/course_ID, and launch Jupyter at the workspace. The root / will appear in the landing page (Files) but everything can be found in $HOME/osc_classes/course_ID on OSC system.

Shared Access

Share class material

The instructor can upload class material to /fs/ess/project_ID/course_ID/materials. When a student launches a Jupyter session, the directory will be copied to the student's workspace $HOME/osc_classes/course_ID. The student will see the directory materials on the landing page. The PI can add files to the material source directory; new files will be copied to the destination every time a new Jupyter session starts. But if the PI modifies existing files, the changes won't be copied because the files were already copied. Therefore, we recommend renaming a file after updating it so that it will be copied.
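The copy rule described above (new files arrive, edited files do not) behaves like a "copy only if missing" operation. The sketch below imitates that rule with temporary directories. It is not OSC's actual session-start code; the function name copy_new_only and the file names are hypothetical.

```shell
# Hypothetical stand-in for the session-start copy: copy an entry only if
# nothing with the same name exists in the workspace yet.
copy_new_only() {
    src=$1
    dest=$2
    for entry in "$src"/*; do
        name=$(basename "$entry")
        if [ ! -e "$dest/$name" ]; then
            cp -r "$entry" "$dest/$name"
        fi
    done
}

materials=$(mktemp -d)   # stands in for /fs/ess/project_ID/course_ID/materials
workspace=$(mktemp -d)   # stands in for the student's copy of materials

echo "v1" > "$materials/lesson1.txt"
copy_new_only "$materials" "$workspace"     # first session

echo "v2" > "$materials/lesson1.txt"        # instructor edits an existing file
echo "new" > "$materials/lesson2.txt"       # instructor adds a new file
copy_new_only "$materials" "$workspace"     # next session

cat "$workspace/lesson1.txt"   # still the first version
cat "$workspace/lesson2.txt"   # the added file was copied
```

Under this rule, saving the edited file as lesson1_v2.txt would get it copied, which is why renaming after an update is recommended.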

Use data dir for large files

For large files, create a data dir in the classroom space and place the large files there.

mkdir /fs/ess/project_ID/course_ID/data

Now the large data will not be copied to each user's home dir when they start a classroom job session. Make sure to reference this data properly in notebooks that will be copied to students' home dirs from the /materials dir.

Access student workspace

The instructor and TAs can access a student's workspace with limited permissions. First, the instructor sends us a request with the information including the instructor's and TAs' OSC accounts. After a student launches a class session, you can access known files and directories in the student's workspace. For example, you cannot explore the student's workspace

ls /users/PZS1234/student1/osc_classes/course_ID
ls: cannot open directory /users/PZS1234/student1/osc_classes/course_ID: Permission denied

but you can access a known file or directory in the workspace

ls /users/PZS1234/student1/osc_classes/course_ID/homework
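One way to get this "known paths only" behavior on Linux is a directory that grants search (x) permission without read (r) permission. The sketch below reproduces the effect on a temporary directory. It is an assumption for illustration only; the permissions OSC actually sets on student workspaces may be implemented differently (for example with ACLs), and the file names are hypothetical.

```shell
ws=$(mktemp -d)                      # stands in for a student's course workspace
mkdir "$ws/homework"
echo "answer" > "$ws/homework/hw1.txt"

# Remove read permission on the workspace but keep search (x) permission.
chmod u-r "$ws"

# Listing the workspace itself needs read permission on it.
# (The root user bypasses this check and would see "allowed".)
if ls "$ws" >/dev/null 2>&1; then
    echo "listing the workspace: allowed"
else
    echo "listing the workspace: denied"
fi

# A path you already know only needs search permission on the parent.
ls "$ws/homework"

# Restore read permission so the temporary directory can be cleaned up.
chmod u+r "$ws"
```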

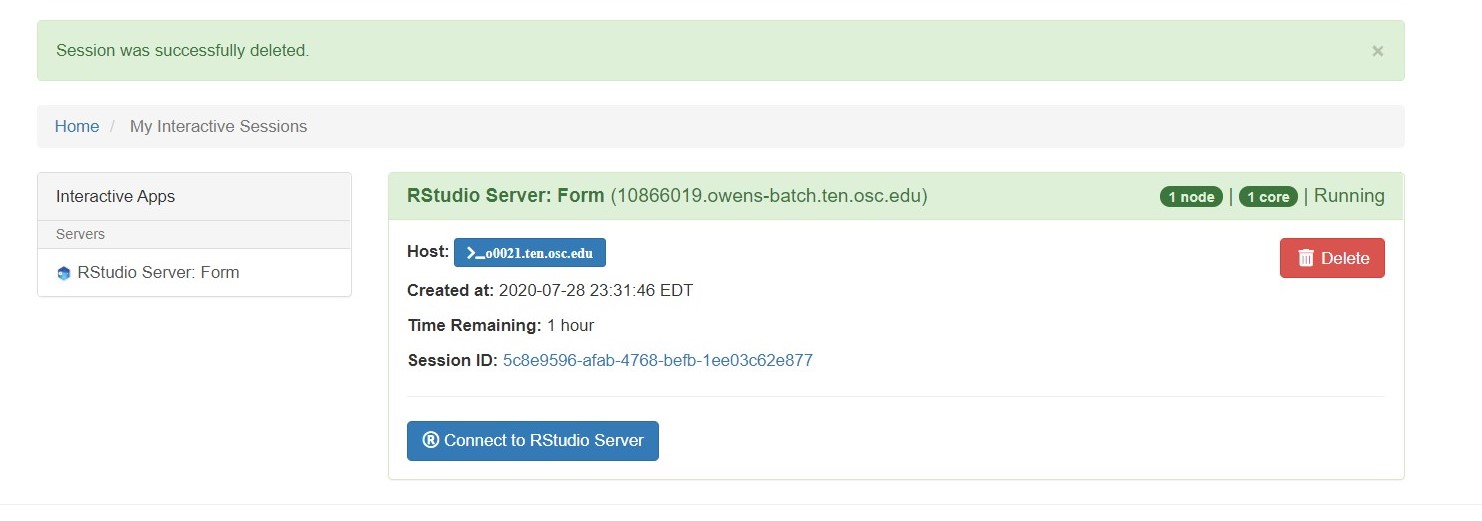

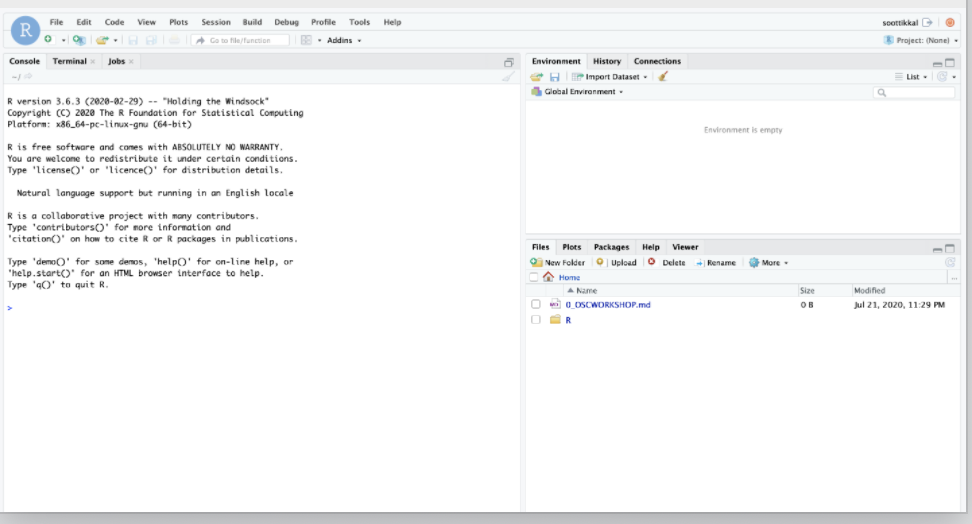

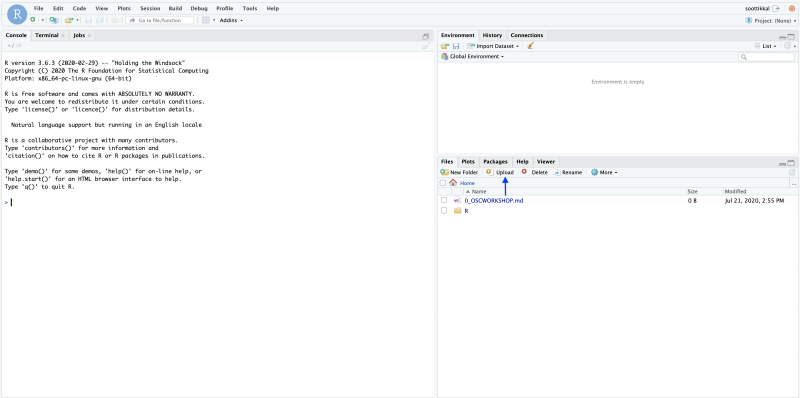

Using Rstudio for classroom

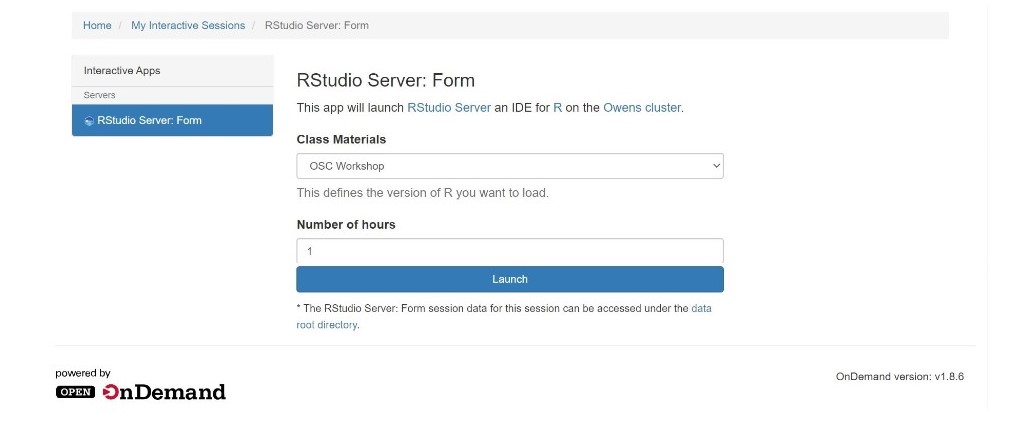

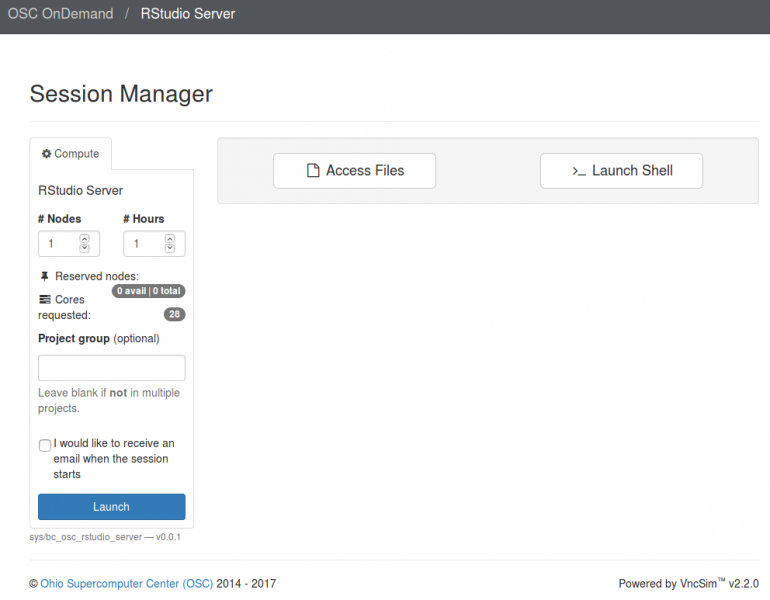

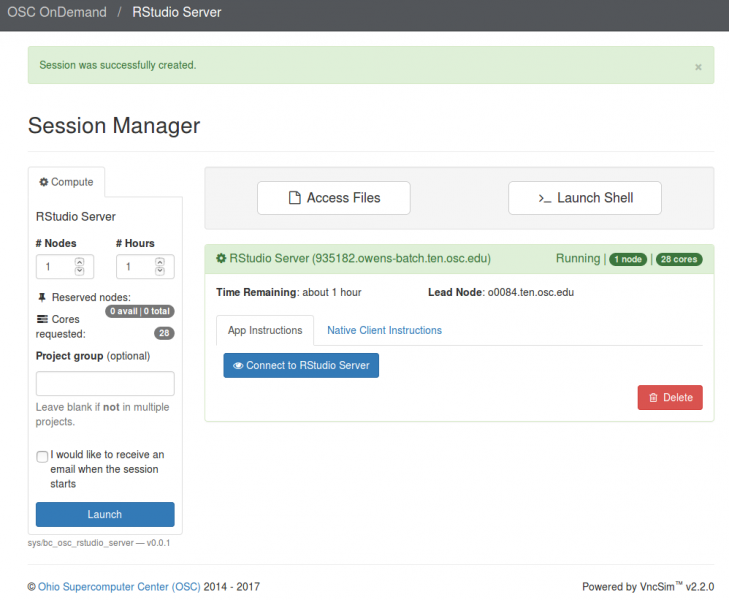

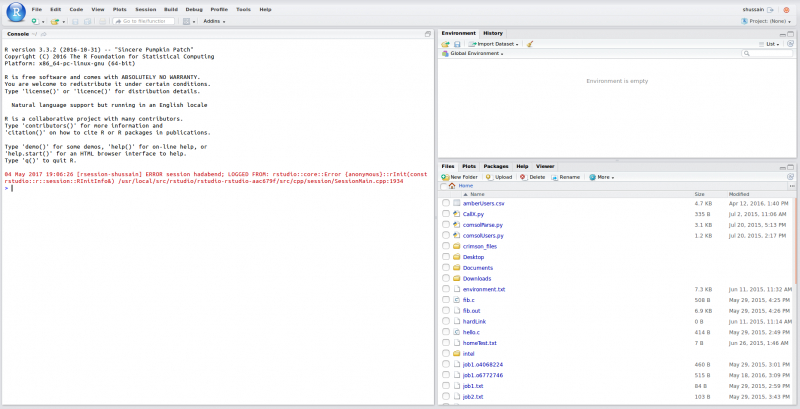

OSC provides an isolated and custom R environment for each classroom project that requires Rstudio. The interface can be accessed at class.osc.edu. Before using this interface, please apply for a classroom project account that is unique for the course. More details on the classroom project can be found here. The custom R environment for the course will be tied to this project ID. Please inform us if you have additional requirements for the class. Once we get the information, we will provide you a course_ID (which is commonly the course ID provided by the instructor + school code, e.g. MATH2530_OU) and add your course to the server with the class module created using the course_ID. After logging in to the class.osc.edu server, you will see several Apps listed. Pick Rstudio server, which will take you to the Rstudio Job submission page. Please pick your course from the drop-down menu under the Class materials and select the number of hours needed.